Apache HBase is a massively scalable, distributed big data store in the Apache Hadoop ecosystem. We can use Amazon EMR with HBase on top of Amazon Simple Storage Service (Amazon S3) for random, strictly consistent real-time access for tables with Apache Kylin. It ingests data through Spark jobs and queries the HTables through Apache Kylin cubes. The HBase cluster uses HBase write-ahead logs (WAL) instead of Amazon EMR WAL.

As time goes by, companies might want to scale in long-running Amazon EMR HBase clusters because of issues such as Amazon Elastic Compute Cloud (Amazon EC2) scheduling events and budget concerns. Another issue is that companies might use Spot Instances and auto scaling for task nodes for short-term parallel computation power, such as MapReduce tasks and Spark executors. Amazon EMR also runs HBase region servers on task nodes for Amazon EMR on S3 clusters. Spot interruptions lead to unexpected shutdowns of HBase region servers. For an Amazon EMR HBase cluster that doesn’t have the write-ahead logs (WAL) for Amazon EMR feature enabled, an unexpected shutdown of an HBase region server causes WAL splits during the server recovery process, which brings extra load to the cluster and sometimes makes HTables inconsistent.

For these reasons, administrators look for a way to scale in an Amazon EMR HBase cluster gracefully and stop all HBase region servers on the task nodes.

This post demonstrates how to gracefully decommission target region servers programmatically. The script has been tested successfully on Amazon EMR 7.3.0, 6.15.0, and 5.36.2. The script does the following tasks:

Automatically move the HRegions through a script

Raise the decommission priority

Decommission HBase region servers gracefully

Prevent Amazon EMR from provisioning region servers on task nodes by using Amazon EMR software configurations

Prevent Amazon EMR from provisioning region servers on task nodes by using Amazon EMR steps

Overview of solution

For graceful scaling in, the script uses the HBase built-in graceful_stop.sh to move regions to other region servers, which avoids WAL splits when decommissioning nodes. The script uses the HDFS CLI and web interface to make sure there are no missing or corrupted HDFS blocks during the scaling events. To prevent Amazon EMR from provisioning HBase region servers on task nodes, administrators need to specify software configurations per instance group when launching a cluster. For existing clusters, administrators can either use a step to terminate HBase region servers on task nodes, or reconfigure the task instance group’s HBase rootdir.

Solution

For a running Amazon EMR cluster, administrators can use the AWS Command Line Interface (AWS CLI) to issue a modify-instance-groups command with EC2InstanceIdsToTerminate to terminate specified instances immediately. But terminating an instance this way can cause data loss and unpredictable cluster behavior when HDFS blocks don’t have enough copies or there are ongoing tasks on the decommissioned nodes. To avoid these risks, administrators can send a modify-instance-groups command with a new requested instance count and without a specific instance ID to terminate. This command triggers a graceful decommission process on the Amazon EMR side. However, Amazon EMR only supports graceful decommission for YARN and HDFS. Amazon EMR doesn’t support graceful decommission for HBase.
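The two request forms described above can be sketched as a dry run that only prints the AWS CLI commands. The helper names and the cluster, instance group, and instance IDs are hypothetical placeholders, not values from the script.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print the two forms of the resize request instead of running them.
# All IDs passed below are hypothetical placeholders.

# Graceful form: only a new target count; Amazon EMR chooses which node to decommission.
graceful_resize_cmd() {
  local cluster_id="$1" group_id="$2" new_count="$3"
  echo "aws emr modify-instance-groups --cluster-id ${cluster_id}" \
       "--instance-groups InstanceGroupId=${group_id},InstanceCount=${new_count}"
}

# Immediate form: names the instance to terminate; no graceful decommission happens.
forced_terminate_cmd() {
  local cluster_id="$1" group_id="$2" instance_id="$3"
  echo "aws emr modify-instance-groups --cluster-id ${cluster_id}" \
       "--instance-groups InstanceGroupId=${group_id},EC2InstanceIdsToTerminate=${instance_id}"
}

graceful_resize_cmd j-EXAMPLE ig-EXAMPLE 4
forced_terminate_cmd j-EXAMPLE ig-EXAMPLE i-EXAMPLE
```

Printing the command first makes it easier to review which form is about to be sent before anything changes on the cluster.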

Therefore, administrators can try method 1, as described later in this post, to raise the decommission priority of the decommission targets as the first step. If tweaking the decommission priority doesn’t work, move on to the second approach, method 2. Method 2 is to stop the resizing request and move the HRegions manually before terminating the target core nodes. Note that Amazon EMR is a managed service. The Amazon EMR service terminates an EC2 instance after anyone stops it or detaches its Amazon Elastic Block Store (Amazon EBS) volumes. For that reason, don’t try to detach EBS volumes from the decommission targets and attach them to new nodes.

Method 1: Decommission HBase region servers through resizing

To decommission Hadoop nodes, administrators can add decommission targets to the HDFS and YARN exclude lists, which are dfs.hosts.exclude and yarn.nodes.exclude.xml. However, Amazon EMR disallows manual updates to these files. The reason is that the Amazon EMR service daemon, the master instance controller, is the only valid process to update these two files on master nodes. Manual updates to these two files will be reset.

Thus, one of the most accessible ways to raise a core node’s decommission priority in Amazon EMR is to make the node send fewer instance controller heartbeats.

As the first step, pass move_regions to the following script on Amazon S3, blog_HBase_graceful_decommission.sh, as an Amazon EMR step to move HRegions to other region servers and shut down the region server and instance controller processes. Also provide targetRS and S3Path to blog_HBase_graceful_decommission.sh. targetRS is the private DNS name of the decommission target region server. S3Path is the location of the region migration script.

This step needs to be run in off-peak hours. After all HRegions on the target region server are moved to other nodes, WAL splitting after stopping the HBase region server generates a very low workload on the cluster because the server serves 0 regions.

For more information, refer to blog_HBase_graceful_decommission.sh.

Taking a closer look at the move_regions option in blog_HBase_graceful_decommission.sh, this script disables the region balancer and moves the regions to other region servers. The script retrieves Secure Shell (SSH) credentials from AWS Secrets Manager to access worker nodes.

In addition, the script includes some AWS CLI operations. Make sure that the instance profile, EMR_EC2_DefaultRole, can call the following APIs and has the SecretsManagerReadWrite permission.

Amazon EMR APIs:

describe-cluster

list-instances

modify-instance-groups

Amazon S3 APIs:

Secrets Manager APIs:

In Amazon EMR 5.x, HBase on Amazon S3 makes the master node also work as a region server hosting hbase:meta regions. The script gets stuck when it tries to move non-hbase:meta HRegions to the master. To automate the script, the maxthreads parameter is increased so that regions are moved through multiple threads. While regions are moved in a loop, one of the threads gets a runtime error because it tries to move non-hbase:meta HRegions to the master node. The other threads can keep moving HRegions to other region servers. After the stuck thread times out after 300 seconds, the script moves on to the next run. After six retries, manual action is required, such as using a move action through the HBase shell for the remaining regions, or resubmitting the step.
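The timeout-and-retry behavior described above can be pictured with a small bounded retry loop. This is a minimal sketch, not the script’s actual code: retry_with_timeout is a hypothetical helper, it assumes the timeout command from GNU coreutils is available, and true stands in for the real region-move command.

```shell
#!/usr/bin/env bash
# Sketch of a bounded retry loop: each attempt is cut off after a timeout,
# and the loop gives up after a fixed number of attempts.
# retry_with_timeout is a hypothetical helper, not a function from the script.

retry_with_timeout() {
  local max_attempts="$1" timeout_secs="$2"
  shift 2
  local attempt
  for (( attempt = 1; attempt <= max_attempts; attempt++ )); do
    # timeout(1) from GNU coreutils stops the command if it runs too long.
    if timeout "${timeout_secs}" "$@"; then
      echo "attempt ${attempt} succeeded"
      return 0
    fi
    echo "attempt ${attempt} failed or timed out" >&2
  done
  echo "giving up after ${max_attempts} attempts; manual action required" >&2
  return 1
}

# Values from the text: six attempts, 300 seconds each.
# 'true' is a stand-in for the real region-move command.
retry_with_timeout 6 300 true
```

A non-zero return after the last attempt is the point where an administrator would move the remaining regions by hand or resubmit the step.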

The following is the syntax to invoke the move_regions function through blog_HBase_graceful_decommission.sh as an Amazon EMR step:

Step type: Custom JAR

Name: Move HRegions

JAR location: s3://.elasticmapreduce/libs/script-runner/script-runner.jar

Main class: None

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh move_regions

Action on failure: Continue

Here’s an Amazon EMR step example to move regions:

Step type: Custom JAR

Name: Move HRegions

JAR location: s3://us-west-2.elasticmapreduce/libs/script-runner/script-runner.jar

Main class: None

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh move_regions your-secret-id ip-172-0-0-1.us-west-2.compute.internal s3://yourbucket/yourpath/

Action on failure: Continue
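The same step can also be submitted from the AWS CLI. The following is a minimal dry-run sketch that only assembles and prints an aws emr add-steps command. build_step_arg is a hypothetical helper and j-EXAMPLE is a placeholder cluster ID.

```shell
#!/usr/bin/env bash
# Dry-run sketch: build the --steps value for `aws emr add-steps` from the step fields.
# build_step_arg is a hypothetical helper; nothing is submitted to Amazon EMR.

build_step_arg() {
  local name="$1" jar="$2"
  shift 2
  # Join the remaining arguments (script location, function, parameters) with commas.
  local args
  args="$(IFS=,; echo "$*")"
  echo "Type=CUSTOM_JAR,Name=\"${name}\",ActionOnFailure=CONTINUE,Jar=${jar},Args=[${args}]"
}

step="$(build_step_arg "Move HRegions" \
  s3://us-west-2.elasticmapreduce/libs/script-runner/script-runner.jar \
  s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh \
  move_regions your-secret-id ip-172-0-0-1.us-west-2.compute.internal s3://yourbucket/yourpath/)"

# j-EXAMPLE is a placeholder cluster ID.
echo "aws emr add-steps --cluster-id j-EXAMPLE --steps '${step}'"
```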

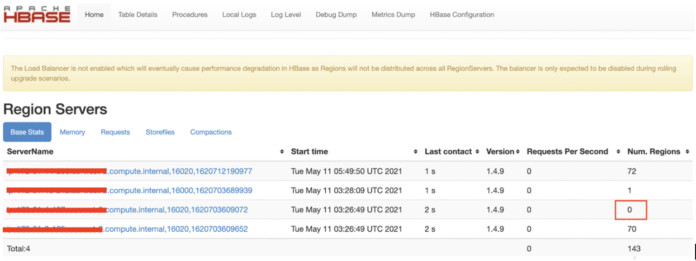

In the HBase web UI, the target region server will serve 0 regions after the evacuation, as shown in the following screenshot.

After that, the stop_RS_IC function in the script stops the HBase region server and instance controller processes on the decommission target after making sure that there is no running YARN container on that node.

Note that the script is for Amazon EMR 5.30.0 and later release versions. For Amazon EMR 4.x-5.29.0 release versions, stop_RS_IC in the script needs to be updated by referring to How do I restart a service in Amazon EMR? in the AWS Knowledge Center. Also, in Amazon EMR versions earlier than 5.30.0, Amazon EMR uses a service nanny to monitor the status of other processes. If the service nanny automatically restarts the instance controller, stop the service nanny in the stop_RS_IC function before stopping the instance controller on that node. Here’s an example:

    # Stop the instance controller only when no YARN container runs on the target node.
    if [ "$runningContainers" -eq 0 ]; then
        echo "0 container is running on ${targetRS}" | tee -a /tmp/graceful_stop.log;
        echo "Shutdown IC" | tee -a /tmp/graceful_stop.log;
        # Stop the service nanny first so it does not restart the instance controller.
        sudo /etc/init.d/service-nanny stop | tee -a /tmp/graceful_stop.log;
        sudo /etc/init.d/instance-controller stop | tee -a /tmp/graceful_stop.log;
        sudo /etc/init.d/instance-controller status | tee -a /tmp/graceful_stop.log;
    else
        echo "Still have ${runningContainers} containers running on ${targetRS}" | tee -a /tmp/graceful_stop.log;
        echo "Not to shutdown IC" | tee -a /tmp/graceful_stop.log;
    fi

After the step completes successfully, scale in by setting the desired target node count to the current core node count minus 1 on the Amazon EMR console. Amazon EMR might pick the target core node to decommission because the instance controller isn’t running on that node. There can be a few minutes of delay before Amazon EMR detects the heartbeat loss of that target node through polling the instance controller. Thus, make sure that the workload is very low and that no containers will be assigned to the target node for a while.

Stopping the instance controller merely increases the decommissioning priority. Method 1 doesn’t guarantee that Amazon EMR will pick the target core node as the decommissioning target. If Amazon EMR doesn’t pick the decommission target after using method 1, administrators can stop the resize activity using the AWS Management Console. Then, proceed to method 2.

Method 2: Manually decommission the target core nodes

Administrators can terminate the node using the EC2InstanceIdsToTerminate option in the modify-instance-groups API. But this action directly terminates the EC2 instance and risks losing HDFS blocks. To mitigate the risk of data loss, administrators can use the following steps in off-peak hours with zero or very few running jobs.

First, run the move_regions function through blog_HBase_graceful_decommission.sh as an Amazon EMR step, as in method 1. The function moves HRegions to other region servers and stops the HBase region server as well as the instance controller process.

Then, run the terminate_ec2 function in blog_HBase_graceful_decommission.sh as an Amazon EMR step. To run this function successfully, provide the target instance group ID and target instance ID to the script. This function terminates only one node at a time by specifying the EC2InstanceIdsToTerminate option in the modify-instance-groups API. This makes sure that the core nodes aren’t terminated back-to-back and lowers the risk of missing HDFS blocks. The function inspects HDFS and makes sure all HDFS blocks have at least two copies. If an HDFS block has only one copy, the script exits with an error message similar to, “Some HDFS blocks have only 1 copy. Please increase HDFS replication factor through the following command for existing HDFS blocks.”

$ hdfs dfs -setrep -R -w 2

To make sure all upcoming HDFS blocks have at least two copies, reconfigure the core instance group with the following software configuration:

    [{
        "classification": "hdfs-site",
        "properties": {
            "dfs.replication": "2"
        },
        "configurations": []
    }]

In addition, the terminateEC2 function compares the metadata of the replicating blocks before and after terminating the core node using hdfs dfsadmin -report. This makes sure that the number of under-replicated, corrupted, or missing HDFS blocks didn’t increase.
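The before-and-after comparison can be sketched as follows. This is a minimal sketch and not the script’s own implementation: the function names and file paths are hypothetical, and it assumes the report contains the usual “Under replicated blocks”, “Blocks with corrupt replicas”, and “Missing blocks” summary lines.

```shell
#!/usr/bin/env bash
# Sketch: pull the block-health counters out of a saved `hdfs dfsadmin -report`
# and check that none of them grew between two snapshots.
# Function names and file paths here are hypothetical.

# Print "under corrupt missing" counts from a saved report file.
block_counters() {
  awk -F': *' '
    /^[ \t]*Under replicated blocks:/      { under = $2 }
    /^[ \t]*Blocks with corrupt replicas:/ { corrupt = $2 }
    /^[ \t]*Missing blocks:/               { missing = $2 }
    END { print under + 0, corrupt + 0, missing + 0 }
  ' "$1"
}

# Succeed only if no counter in the "after" report exceeds the "before" report.
no_block_regression() {
  local b_under b_corrupt b_missing a_under a_corrupt a_missing
  read -r b_under b_corrupt b_missing <<< "$(block_counters "$1")"
  read -r a_under a_corrupt a_missing <<< "$(block_counters "$2")"
  (( a_under <= b_under && a_corrupt <= b_corrupt && a_missing <= b_missing ))
}

# Example usage on a cluster node (commented out because it needs HDFS):
# hdfs dfsadmin -report > /tmp/report_before.txt   # before terminating the node
# hdfs dfsadmin -report > /tmp/report_after.txt    # after the decommission completes
# no_block_regression /tmp/report_before.txt /tmp/report_after.txt && echo "no regression"
```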

The terminateEC2 function tracks the decommission status. The script completes after the decommission completes. It can take some time to recover HDFS blocks. The elapsed time depends on several factors, such as the total number of blocks, I/O, bandwidth, HDFS handler count, and NameNode resources. If there are many HDFS blocks to be recovered, it might take a few hours to complete. Before running the script, make sure that the instance profile, EMR_EC2_DefaultRole, has the elasticmapreduce:ModifyInstanceGroups permission.

The following is the syntax to invoke the terminate_ec2 function through blog_HBase_graceful_decommission.sh as an Amazon EMR step:

Step type: Custom JAR

Name: Terminate EC2

JAR location: s3://.elasticmapreduce/libs/script-runner/script-runner.jar

Main class: None

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh terminate_ec2

Action on failure: Continue

Here’s an Amazon EMR step example to terminate a target node:

Step type: Custom JAR

Name: Terminate EC2

JAR location: s3://us-west-2.elasticmapreduce/libs/script-runner/script-runner.jar

Main class: None

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh terminate_ec2 your-secret-id ig-ABCDEFGH12345 i-1234567890abcdef

Action on failure: Continue

While invoking terminate_ec2, the script checks the HDFS NameNode web UI for the decommission target to understand how many blocks need to be recovered on other nodes after submitting the decommission request. Here are the steps:

On the Amazon EMR console, for release 6.x, find the HDFS NameNode web UI. For example, enter http://:9870

On the top menu bar, choose Datanodes

In the In operation section, check the in-service data nodes and the total number of data blocks on the nodes, as shown in the following screenshot.

To view the HDFS decommissioning progress, go to Overview, as shown in the following screenshot.

On the Datanodes page, the decommission target node won’t have a green checkmark, and the node will be in the Decommissioning section, as shown in the following screenshot.

The step’s STDOUT also shows the decommission status:

Hostname: ip-172-31-4-197.us-west-2.compute.internal

Decommission Status : Decommission in progress
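To track a status line like the one above programmatically, one option is to read it from saved hdfs dfsadmin -report output. The following is a minimal sketch under that assumption. decommission_status is a hypothetical helper, not a function from the script.

```shell
#!/usr/bin/env bash
# Sketch: read the "Decommission Status" of one DataNode from saved
# `hdfs dfsadmin -report` output. decommission_status is a hypothetical helper.

decommission_status() {
  local host="$1" report_file="$2"
  awk -v host="${host}" '
    # Remember whether the most recent Hostname line is the node we care about.
    /^Hostname:/ { in_target = ($2 == host) }
    # For the target node, print the text after the colon and stop reading.
    in_target && /^Decommission Status/ {
      sub(/^Decommission Status *: */, "")
      print
      exit
    }
  ' "${report_file}"
}

# Example usage on a cluster node (commented out because it needs HDFS):
# hdfs dfsadmin -report > /tmp/report.txt
# decommission_status ip-172-31-4-197.us-west-2.compute.internal /tmp/report.txt
```

A polling loop could call this helper until it prints Decommissioned, sleeping between checks so the NameNode isn’t queried too often.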

The decommission target will transition from Decommissioning to Decommissioned in the HDFS NameNode web UI, as shown in the following screenshot.

The decommissioned target will appear in the Dead datanodes section in the step’s STDOUT after the process is completed:

Dead datanodes (1):

Name: 172.31.4.197:50010 (ip-172-31-4-197.us-west-2.compute.internal)

Hostname: ip-172-31-4-197.us-west-2.compute.internal

Decommission Status : Decommissioned

Configured Capacity: 62245027840 (57.97 GB)

DFS Used: 394412032 (376.14 MB)

Non DFS Used: 0 (0 B)

DFS Remaining: 61179640063 (56.98 GB)

DFS Used%: 0.63%

DFS Remaining%: 98.29%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 0

Last contact: Tue Jan 14 06:09:17 UTC 2025

After the target node is decommissioned, the hdfs dfsadmin report is displayed in the last section of the step’s STDOUT. There should be no difference between rep_blocks_${beforeDate} and rep_blocks_${afterDate}, as described in the script. This means that the decommission added no under-replicated, missing, or corrupt blocks. In the HBase web UI, the decommissioned region server is moved to dead region servers. The dead region server records are reset after restarting HMaster during routine maintenance.

After the Amazon EMR step completes without errors, repeat the preceding steps to decommission the next target core node, because administrators might have more than one core node to decommission.

After completing all decommission tasks, administrators can manually enable the HBase balancer through the HBase shell again:

$ echo "balance_switch true" | sudo -u hbase hbase shell

## To make sure balance_switch is enabled, submit the same command again. The output should say it’s already in “true” status.

$ echo "balance_switch true" | sudo -u hbase hbase shell

Prevent Amazon EMR from provisioning HBase region servers on task nodes

For new clusters, configure HBase settings for the master and core groups only, and keep the HBase settings empty for task groups when launching an Amazon EMR HBase on S3 cluster. This prevents Amazon EMR from provisioning HBase region servers on task nodes.

For example, define configurations for applications other than HBase in the software configuration text box in the Software settings section on the Amazon EMR console, as shown in the following screenshot.

Then, configure HBase settings in Node configuration – optional for each instance group in the Cluster configuration – required section, as shown in the following screenshot.

For master and core instance groups, the HBase configurations look like the following screenshot.

Here’s a JSON-formatted example:

    [
        {
            "Classification": "hbase",
            "Properties": {
                "hbase.emr.storageMode": "s3"
            }
        },
        {
            "Classification": "hbase-site",
            "Properties": {
                "hbase.rootdir": "s3://my/HBase/on/S3/RootDir/"
            }
        }
    ]

For task instance groups, there is no HBase configuration, as shown in the following screenshot.

Here’s a JSON-formatted example:

Here’s an example in the AWS CLI:

    $ aws emr create-cluster
    --applications Name=Hadoop Name=HBase Name=ZooKeeper
    ... (skip)
    --instance-groups '[{"InstanceCount":1,"EbsConfiguration":{"EbsBlockDeviceConfigs":[{"VolumeSpecification":{"SizeInGB":32,"VolumeType":"gp2"},"VolumesPerInstance":2}]},"InstanceGroupType":"MASTER","InstanceType":"m5.xlarge","Configurations":[{"Classification":"hbase","Properties":{"hbase.emr.storageMode":"s3"}},{"Classification":"hbase-site","Properties":{"hbase.rootdir":"s3://my/HBase/on/S3/RootDir/"}}],"Name":"Master - 1"},
    {"InstanceCount":1,"EbsConfiguration":{"EbsBlockDeviceConfigs":[{"VolumeSpecification":{"SizeInGB":32,"VolumeType":"gp2"},"VolumesPerInstance":2}]},"InstanceGroupType":"TASK","InstanceType":"m5.xlarge","Name":"Task - 3"},
    {"InstanceCount":2,"EbsConfiguration":{"EbsBlockDeviceConfigs":[{"VolumeSpecification":{"SizeInGB":64,"VolumeType":"gp2"},"VolumesPerInstance":1}],"EbsOptimized":true},"InstanceGroupType":"CORE","InstanceType":"m5.2xlarge","Configurations":[{"Classification":"hbase","Properties":{"hbase.emr.storageMode":"s3"}},{"Classification":"hbase-site","Properties":{"hbase.rootdir":"s3://my/HBase/on/S3/RootDir/"}}],"Name":"Core - 2"}]' --configurations '[{"Classification":"hdfs-site","Properties":{"dfs.replication":"2"}}]'
    --auto-scaling-role EMR_AutoScaling_DefaultRole
    ... (skip)
    --scale-down-behavior TERMINATE_AT_TASK_COMPLETION --region us-west-2

Stop and decommission the HBase region servers on task nodes

For an existing Amazon EMR HBase on S3 cluster, pass stop_and_check_task_rs to blog_HBase_graceful_decommission.sh as an Amazon EMR step to stop HBase region servers on nodes in a task instance group. The script requires a task instance group ID and an S3 location in which to place shared scripts for task nodes.

The following is the syntax to pass stop_and_check_task_rs to blog_HBase_graceful_decommission.sh as an Amazon EMR step:

Step type: Custom JAR

Name: Stop HBase Region servers on Task Nodes

JAR location: s3://.elasticmapreduce/libs/script-runner/script-runner.jar

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh stop_and_check_task_rs

Action on failure: Continue

Here’s an Amazon EMR step example to stop HBase region servers on nodes in a task group:

Step type: Custom JAR

Name: Stop HBase Region servers on Task Nodes

JAR location: s3://us-west-2.elasticmapreduce/libs/script-runner/script-runner.jar

Main class: None

Arguments: s3://yourbucket/your/step/location/blog_HBase_graceful_decommission.sh your-secret-id stop_and_check_task_rs ig-ABCDEFGH12345 s3://yourbucket/yourpath/

Action on failure: Continue

The preceding step doesn’t only stop HBase region servers on existing task nodes. To avoid provisioning HBase region servers on new task nodes, the script also reconfigures and scales in the task group. Here are the steps:

Using the move_regions function in blog_HBase_graceful_decommission.sh, move HRegions on the task group to other nodes and stop the region servers on those task nodes.

After making sure that the HBase region servers are stopped on those task nodes, the script reconfigures the task instance group. The reconfiguration points the HBase rootdir to a non-existing location. These settings only apply to the task group. Here’s an example:

    [
        {
            "Classification": "hbase-site",
            "Properties": {
                "hbase.rootdir": "hdfs://non/existing/location"
            }
        },
        {
            "Classification": "hbase",
            "Properties": {
                "hbase.emr.storageMode": "hdfs"
            }
        }
    ]

When the task group’s state returns to RUNNING, the script scales in those task nodes to 0. New task nodes in upcoming scale-out events won’t run HBase region servers.
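The reconfiguration request for the task group can be sketched as a dry run. This is an assumption about how such a request could be sent with the AWS CLI, not the script’s own code: write_task_group_reconfig, the output path, and the cluster and instance group IDs are hypothetical, and the configuration values follow the example above.

```shell
#!/usr/bin/env bash
# Dry-run sketch: write the reconfiguration request for a task instance group to a
# file and print the AWS CLI command that would submit it. Nothing is sent to Amazon EMR.
# write_task_group_reconfig and all IDs and paths are hypothetical.

write_task_group_reconfig() {
  local group_id="$1" out_file="$2"
  cat > "${out_file}" <<EOF
[
  {
    "InstanceGroupId": "${group_id}",
    "Configurations": [
      {
        "Classification": "hbase-site",
        "Properties": { "hbase.rootdir": "hdfs://non/existing/location" }
      },
      {
        "Classification": "hbase",
        "Properties": { "hbase.emr.storageMode": "hdfs" }
      }
    ]
  }
]
EOF
}

write_task_group_reconfig ig-EXAMPLE /tmp/task_group_reconfig.json
echo "aws emr modify-instance-groups --cluster-id j-EXAMPLE --instance-groups file:///tmp/task_group_reconfig.json"
```

Keeping the request in a file makes it easy to review the task group settings before the reconfiguration is applied.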

Conclusion

These scaling steps demonstrate how to handle Amazon EMR HBase scaling gracefully. The functions in the script can help administrators resolve problems when companies want to gracefully scale Amazon EMR HBase on S3 clusters without Amazon EMR WAL.

If you have a similar need to scale in an Amazon EMR HBase on S3 cluster gracefully because the cluster doesn’t enable Amazon EMR WAL, you can refer to this post. Test the steps in a testing environment for verification first. After you confirm that the steps meet your production requirements, you can apply them to your production environment.

About the Authors

Yu-Ting Su is a Sr. Hadoop Systems Engineer at Amazon Web Services (AWS). Her expertise is in Amazon EMR and Amazon OpenSearch Service. She’s passionate about distributed computation and helping people bring their ideas to life.

Hsing-Han Wang is a Cloud Support Engineer at Amazon Web Services (AWS). He focuses on Amazon EMR and AWS Lambda. Outside of work, he enjoys hiking and jogging, and he’s also an Eorzean.

Cheng Wang is a Technical Account Manager at AWS who has over 10 years of industry experience, focusing on enterprise service support, data analysis, and business intelligence solutions.

Chris Li is an Enterprise Support manager at AWS. He leads a team of Technical Account Managers to solve complex customer problems and implement well-structured solutions.
