You can now access the AI search flow builder on OpenSearch 2.19+ domains with Amazon OpenSearch Service and begin innovating AI search applications faster. Through a visual designer, you can configure custom AI search flows: a series of AI-driven data enrichments performed during ingestion and search. You can build and run these AI search flows on OpenSearch to power AI search applications without having to build and maintain custom middleware.

Applications are increasingly using AI and search to reinvent and improve user interactions, content discovery, and automation to uplift business outcomes. These innovations run AI search flows to uncover relevant information through semantic, cross-language, and content understanding; adapt information ranking to individual behaviors; and enable guided conversations to pinpoint answers. However, search engines are limited in native AI-enhanced search support, so builders develop middleware to complement search engines and fill in functional gaps. This middleware consists of custom code that runs data flows to stitch together data transformations, search queries, and AI enrichments in varying combinations tailored to use cases, datasets, and requirements.

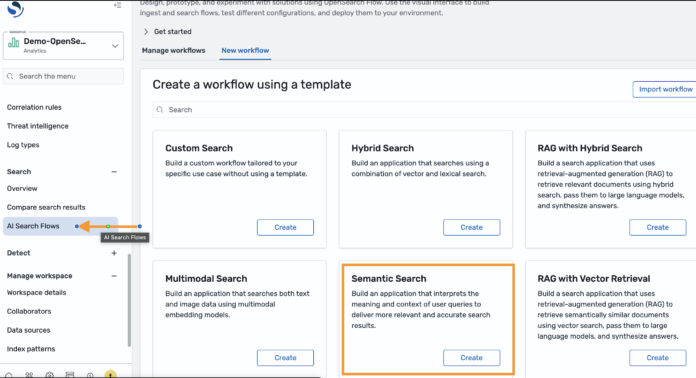

With the new AI search flow builder for OpenSearch, you have a collaborative environment to design and run AI search flows on OpenSearch. You can find the visual designer within OpenSearch Dashboards under AI Search Flows, and get started quickly by launching preconfigured flow templates for popular use cases like semantic, multimodal, or hybrid search, and retrieval augmented generation (RAG). Through configurations, you can create customized flows to enrich search and index processes through AI providers like Amazon Bedrock, Amazon SageMaker, Amazon Comprehend, OpenAI, DeepSeek, and Cohere. Flows can be programmatically exported, deployed, and scaled on any OpenSearch 2.19+ cluster through OpenSearch's existing ingest, index, workflow, and search APIs.

In the remainder of the post, we'll walk through a couple of scenarios to demonstrate the flow builder. First, we'll enable semantic search on an old keyword-based OpenSearch application without client-side code changes. Next, we'll create a multimodal RAG flow to showcase how you can redefine image discovery within your applications.

AI search flow builder key concepts

Before we get started, let's cover some key concepts. You can use the flow builder through APIs or a visual designer. The visual designer is recommended for helping you manage workflow projects. Each project contains at least one ingest or search flow. A flow is a pipeline of processor resources. Each processor applies a type of data transform, such as encoding text into vector embeddings or summarizing search results with a chatbot AI service.

Ingest flows are created to enrich data as it's added to an index. They consist of:

A data sample of the documents you want to index.

A pipeline of processors that apply transforms on ingested documents.

An index built from the processed documents.

Search flows are created to dynamically enrich search requests and results. They consist of:

A query interface based on the search API, defining how the flow is queried and run.

A pipeline of processors that transform the request context or search results.
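As a mental model only, a flow can be pictured as an ordered list of processors, each taking a document (or request) and returning an enriched copy. The sketch below is plain Python, not the flow builder's API: `Processor`, `run_flow`, and both example processors are hypothetical names, and the fake embedding stands in for a real model call.

```python
from typing import Callable, Dict, List

# A processor takes a document and returns an enriched copy of it.
Processor = Callable[[Dict], Dict]


def lowercase_title(doc: Dict) -> Dict:
    """Toy transform: normalize the title field."""
    return {**doc, "title": doc["title"].lower()}


def fake_embedding(doc: Dict) -> Dict:
    """Stand-in for an ML inference processor (no real model is called)."""
    vector = [float(len(word)) for word in doc["title"].split()]
    return {**doc, "title_embedding": vector}


def run_flow(doc: Dict, processors: List[Processor]) -> Dict:
    """Apply each processor in order, like a pipeline in a flow."""
    for processor in processors:
        doc = processor(doc)
    return doc


ingest_flow = [lowercase_title, fake_embedding]
enriched = run_flow({"title": "NBA Basketball"}, ingest_flow)
```

The same shape applies to search flows, where the processors act on the request or on the search results instead of on ingested documents.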

Generally, the path from prototype to production starts with deploying your AI connectors, designing flows from a data sample, and then exporting your flows from a development cluster to a preproduction environment for testing at scale.

Scenario 1: Enable semantic search on an OpenSearch application without client-side code changes

In this scenario, we have a product catalog that was built on OpenSearch a decade ago. We aim to improve its search quality and, in turn, uplift purchases. The catalog has search quality issues; for instance, a search for "NBA" doesn't surface basketball products. The application has also been untouched for a decade, so we aim to avoid changes to client-side code to reduce risk and implementation effort.

A solution requires the following:

An ingest flow to generate text embeddings (vectors) from text in an existing index.

A search flow that encodes search terms into text embeddings, and dynamically rewrites keyword-type match queries into a k-NN (vector) query to run a semantic search on the encoded terms. The rewrite allows your application to transparently run semantic-type queries through keyword-type queries.

We will also evaluate a second-stage reranking flow, which uses a cross-encoder to rerank results, because it can potentially boost search quality.

We'll accomplish our task through the flow builder. We begin by navigating to AI Search Flows in OpenSearch Dashboards and selecting Semantic Search from the template catalog.

This template requires us to select a text embedding model. We'll use Amazon Bedrock Titan Text, which was deployed as a prerequisite. Once the template is configured, we enter the designer's main interface. From the preview, we can see that the template consists of a preset ingest flow and search flow.

The ingest flow requires us to provide a data sample. Our product catalog is currently served by an index containing the Amazon product dataset, so we import a data sample from this index.

The ingest flow includes an ML Inference Ingest Processor, which generates machine learning (ML) model outputs such as embeddings (vectors) as your data is ingested into OpenSearch. As previously configured, the processor is set to use Amazon Titan Text to generate text embeddings. We map the data field that holds our product descriptions to the model's inputText field to enable embedding generation.
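For readers who want to see roughly what the designer configures here, the following is a minimal sketch of an ingest pipeline body with a single `ml_inference` processor. The `input_map` and `output_map` layout follows my understanding of the OpenSearch processor documentation and should be checked against your version; the field names `description` and `description_embedding`, the output name `embedding`, and the model ID placeholder are all assumptions for illustration.

```python
import json


def build_ingest_pipeline(model_id: str, text_field: str, vector_field: str) -> dict:
    """Build an ingest pipeline body with one ml_inference processor."""
    return {
        "description": "Generate text embeddings at ingest time",
        "processors": [
            {
                "ml_inference": {
                    "model_id": model_id,
                    # model input name -> document field to read from
                    "input_map": [{"inputText": text_field}],
                    # new document field -> model output name (assumed)
                    "output_map": [{vector_field: "embedding"}],
                }
            }
        ],
    }


pipeline = build_ingest_pipeline(
    model_id="<your-titan-text-model-id>",  # placeholder, not a real ID
    text_field="description",               # assumed field name
    vector_field="description_embedding",   # assumed field name
)
print(json.dumps(pipeline, indent=2))
```

In the visual designer this mapping is done through form fields, so you would not normally write the body by hand.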

We can now run our ingest flow, which builds a new index containing our data sample and its embeddings. We can inspect the index's contents to confirm that the embeddings were successfully generated.
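The inspection step can also be scripted. The sketch below shows, under assumptions, what a k-NN enabled index body looks like and how one might check a batch of documents for missing or mis-sized embeddings. The helper names, the field name, and the toy dimension of 3 are hypothetical; a real embedding model produces vectors with hundreds or thousands of dimensions.

```python
from typing import Dict, List


def build_vector_index_body(vector_field: str, dimension: int) -> Dict:
    """Index settings and mappings for a k-NN enabled index."""
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                vector_field: {"type": "knn_vector", "dimension": dimension}
            }
        },
    }


def missing_embeddings(docs: List[Dict], vector_field: str, dimension: int) -> List[int]:
    """Return positions of documents whose embedding is absent or mis-sized."""
    return [
        i for i, doc in enumerate(docs)
        if len(doc.get(vector_field) or []) != dimension
    ]


sample = [
    {"description": "basketball shoes", "description_embedding": [0.1, 0.2, 0.3]},
    {"description": "running socks"},  # no embedding was generated here
]
bad = missing_embeddings(sample, "description_embedding", dimension=3)
```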

Once we have an index, we can configure our search flow. We'll start by updating the query interface, which is preset to a basic match query. The placeholder my_text should be replaced with the name of the field that holds the product descriptions. With this update, our search flow can now respond to queries from our legacy application.

The search flow includes an ML Inference Search Processor. As previously configured, it's set to use Amazon Titan Text. Because it's added under Transform query, it's applied to query requests. In this case, it will transform search terms into text embeddings (a query vector). The designer lists the variables from the query interface, allowing us to map the search terms (query.match.text.query) to the model's inputText field. Text embeddings will now be generated from the search terms whenever our index is queried.
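To make the mapping concrete, here is a small sketch of how a dotted path such as `query.match.text.query` resolves against a match request, and how the resolved value becomes the model's `inputText`. The helpers `resolve_path` and `build_model_input` are hypothetical and only mimic what the processor does internally.

```python
from functools import reduce
from typing import Any, Dict

# A basic match query as a legacy client might send it; "text" is the
# field name used in the path from the walkthrough.
search_request = {"query": {"match": {"text": {"query": "NBA"}}}}


def resolve_path(obj: Dict[str, Any], dotted_path: str) -> Any:
    """Follow a dot-separated path of keys through nested dicts."""
    return reduce(lambda node, key: node[key], dotted_path.split("."), obj)


def build_model_input(request: Dict[str, Any], input_map: Dict[str, str]) -> Dict[str, Any]:
    """Map model input names to values pulled from the search request."""
    return {name: resolve_path(request, path) for name, path in input_map.items()}


model_input = build_model_input(search_request, {"inputText": "query.match.text.query"})
```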

Next, we update the query rewrite configuration, which is preset to rewrite the match query into a k-NN query. We replace the placeholder my_embedding with the query field assigned to our embeddings. Note that we could rewrite this to another query type, including a hybrid query, which may improve search quality.
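The rewrite can be sketched as a pure function over request bodies. The example below builds a k-NN query around an already-computed query vector, plus a hybrid variant that keeps the original keyword clause. The function names, the field names, and the toy vector are assumptions; in the flow builder the rewrite is expressed as a query template rather than Python.

```python
from typing import Dict, List


def rewrite_to_knn(vector: List[float], vector_field: str, k: int = 10) -> Dict:
    """Build a k-NN query body around an already-computed query vector."""
    return {"query": {"knn": {vector_field: {"vector": vector, "k": k}}}}


def rewrite_to_hybrid(match_request: Dict, vector: List[float],
                      vector_field: str, k: int = 10) -> Dict:
    """Combine the original keyword clause with a k-NN clause."""
    knn_clause = rewrite_to_knn(vector, vector_field, k)["query"]
    return {"query": {"hybrid": {"queries": [match_request["query"], knn_clause]}}}


match_request = {"query": {"match": {"description": {"query": "NBA"}}}}
query_vector = [0.12, -0.03, 0.88]  # toy values; a real vector comes from the model

knn_request = rewrite_to_knn(query_vector, "description_embedding")
hybrid_request = rewrite_to_hybrid(match_request, query_vector, "description_embedding")
```

As far as I know, hybrid queries also rely on a normalization processor in the search pipeline to combine the keyword and vector scores, so the hybrid variant needs that extra configuration.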

Let's compare our semantic and keyword solutions using the search comparison tool. Both solutions are able to find basketball products when we search for "basketball."

But what happens if we search for "NBA"? Only our semantic search flow returns results because it detects the semantic similarities between "NBA" and "basketball."
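The intuition behind this result is that an embedding model places related terms close together in vector space, and a k-NN query ranks documents by that closeness. The sketch below uses cosine similarity on hand-made three-dimensional vectors purely for illustration; the numbers are invented and are not outputs of Amazon Titan Text or any other model.

```python
import math
from typing import List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made vectors for illustration only; not real model output.
toy_vectors = {
    "NBA": [0.9, 0.1, 0.0],
    "basketball": [0.8, 0.2, 0.1],
    "sandals": [0.0, 0.2, 0.9],
}

nba_vs_basketball = cosine_similarity(toy_vectors["NBA"], toy_vectors["basketball"])
nba_vs_sandals = cosine_similarity(toy_vectors["NBA"], toy_vectors["sandals"])
```

With these toy values, "NBA" scores far closer to "basketball" than to "sandals", which is the behavior a keyword match cannot reproduce because the two terms share no tokens.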

We've made improvements, but we might be able to do better. Let's see if reranking our search results with a cross-encoder helps. We'll add an ML Inference Search Processor under Transform response, so that the processor applies to search results, and select Cohere Rerank. From the designer, we see that Cohere Rerank requires a list of documents and the query context as input. Data transformations are needed to package the search results into a format that can be processed by Cohere Rerank. So, we apply JSONPath expressions to extract the query context, flatten data structures, and pack the product descriptions from our documents into a list.
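The data transformations can be illustrated in plain Python. The sketch below does by hand what a JSONPath expression such as `$.hits.hits[*]._source.description` would do: it flattens the hits into a list of documents, pairs the list with the query text, and then reorders the hits from a reranker-style result list. The helper names, the `description` field, and the scores are all hypothetical; the `index` and `relevance_score` keys reflect my understanding of the reranker's response shape.

```python
from typing import Dict, List

search_response = {
    "hits": {"hits": [
        {"_id": "1", "_source": {"description": "leather boots"}},
        {"_id": "2", "_source": {"description": "beach sandals"}},
        {"_id": "3", "_source": {"description": "wool scarf"}},
    ]}
}


def build_rerank_input(query_text: str, response: Dict, text_field: str) -> Dict:
    """Flatten the hits into the query + documents list a reranker expects."""
    documents = [hit["_source"][text_field] for hit in response["hits"]["hits"]]
    return {"query": query_text, "documents": documents}


def apply_rerank(response: Dict, results: List[Dict]) -> List[Dict]:
    """Reorder hits by descending relevance score using each result's index."""
    hits = response["hits"]["hits"]
    ordered = sorted(results, key=lambda r: r["relevance_score"], reverse=True)
    return [hits[r["index"]] for r in ordered]


rerank_input = build_rerank_input("hot weather", search_response, "description")

# Hypothetical reranker output; scores are made up for the example.
fake_results = [
    {"index": 0, "relevance_score": 0.10},
    {"index": 1, "relevance_score": 0.95},
    {"index": 2, "relevance_score": 0.02},
]
reranked_ids = [hit["_id"] for hit in apply_rerank(search_response, fake_results)]
```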

Let's return to the search comparison tool to compare our flow variations. We don't observe any meaningful difference in our previous searches for "basketball" and "NBA." However, improvements are observed when we search for "hot weather." On the right, we see that the second and fifth search hits moved 32 and 62 spots up, and returned "sandals" that are well suited for "hot weather."

We're ready to proceed to production, so we export our flows from our development cluster into our preproduction environment, use the workflow APIs to integrate our flows into automations, and scale our test processes through the bulk, ingest, and search APIs.
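When scaling ingestion through the bulk API, documents are sent as newline-delimited JSON, with an action line before each document. The following sketch builds such a body; the index name and sample documents are hypothetical, and the request itself is left as a comment because endpoints and authentication depend on your domain.

```python
import json
from typing import Dict, Iterable


def build_bulk_body(index: str, docs: Iterable[Dict]) -> str:
    """Serialize documents as newline-delimited JSON for the _bulk API."""
    lines = []
    for doc in docs:
        lines.append(json.dumps({"index": {"_index": index}}))  # action line
        lines.append(json.dumps(doc))                            # source line
    return "\n".join(lines) + "\n"  # the bulk body must end with a newline


body = build_bulk_body(
    "products-semantic",  # hypothetical index name
    [{"description": "basketball shoes"}, {"description": "beach sandals"}],
)
# Sent as: POST /_bulk?pipeline=<your-ingest-pipeline>
# with the header Content-Type: application/x-ndjson
```

Passing the exported ingest pipeline through the `pipeline` query parameter lets every bulk-indexed document go through the same enrichment that was designed on the data sample.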

Scenario 2: Use generative AI to redefine and elevate image search

In this scenario, we have photos of millions of fashion designs. We're looking for a low-maintenance image search solution. We'll use generative multimodal AI to modernize image search, eliminating the need for labor to maintain image tags and other metadata.

Our solution requires the following:

An ingest flow that uses a multimodal model like Amazon Titan Multimodal Embeddings G1 to generate image embeddings.

A search flow that generates text embeddings with a multimodal model, runs a k-NN query for text-to-image matching, and sends matching images to a generative model like Anthropic's Claude Sonnet 3.7 that can operate on text and images.

We'll start from the RAG with Vector Retrieval template. With this template, we can quickly configure a basic RAG flow. The template requires an embedding model and a large language model (LLM) that can process text and image content. We use Amazon Bedrock Titan Multimodal G1 and Anthropic's Claude Sonnet 3.7, respectively.

From the designer's preview panel, we can see similarities between this template and the semantic search template. Again, we seed the ingest flow with a data sample. Like the previous example, we use the Amazon product dataset, except we replace the product descriptions with base64-encoded images because our models require base64 images, and this solution doesn't require text. We map the base64 image data to the corresponding Amazon Titan G1 inputs to generate embeddings. We then run our ingest flow and confirm that our index contains base64 images and corresponding embeddings.
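Preparing the data sample for this flow mostly comes down to base64-encoding each image and mapping that field to the model's image input. Below is a minimal sketch using Python's standard library; the document field names and helper functions are hypothetical, and `inputImage` is the image input name as I understand the Titan multimodal embedding request format.

```python
import base64
from typing import Dict


def encode_image(image_bytes: bytes) -> str:
    """Return the base64 text form of raw image bytes."""
    return base64.b64encode(image_bytes).decode("ascii")


def build_ingest_document(product_id: str, image_bytes: bytes) -> Dict:
    """Document to index: the image replaces the text description."""
    return {"product_id": product_id, "image_base64": encode_image(image_bytes)}


def build_embedding_input(doc: Dict) -> Dict:
    """Map the document's image field to the model's image input."""
    return {"inputImage": doc["image_base64"]}


# Stand-in bytes; in practice these would be read from an image file.
fake_image = b"\x89PNG\r\n\x1a\n"
doc = build_ingest_document("sku-123", fake_image)
model_input = build_embedding_input(doc)
```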

The initial steps for configuring this search flow are similar to the previous scenario: we update the query interface, map the query text fields to the model inputs for the ML Inference Search Processor, and revise the query rewrite settings. The main difference with this flow is the additional response processor set to use Anthropic's Claude Sonnet 3.7 to process images.

We need to configure an LLM prompt that includes the query context and instructions for the LLM to play the role of a fashion advisor and provide commentary about the image payload.

Next, we map the prompt and the base64 image data field to the model's inputs accordingly.
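To illustrate how the prompt and the image come together, the sketch below assembles a messages-style request containing one image part and one text part. The prompt wording is my own and not the authors' actual prompt; the request layout follows the Anthropic Messages format on Amazon Bedrock as I understand it, and `build_llm_request`, `max_tokens`, and the dummy base64 string are illustrative assumptions.

```python
from typing import Dict

# Illustrative prompt only; the post does not publish its exact wording.
PROMPT_TEMPLATE = (
    "You are a fashion advisor. A shopper searched for: \"{query}\". "
    "Describe the attached image and explain why it matches the search."
)


def build_llm_request(query_text: str, image_base64: str,
                      media_type: str = "image/jpeg") -> Dict:
    """Assemble a messages-style request with one image and one text part."""
    prompt = PROMPT_TEMPLATE.format(query=query_text)
    return {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": 512,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image",
                 "source": {"type": "base64", "media_type": media_type,
                            "data": image_base64}},
                {"type": "text", "text": prompt},
            ],
        }],
    }


request = build_llm_request("sunset colored dresses", "aGVsbG8=")  # dummy base64
```

In the flow builder, the query text and the image field are supplied by the processor's input mappings, so each matching image is sent to the model with the same prompt template.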

Let's test our multimodal RAG flow by searching for "sunset colored dresses." We observe the following results.

This beautiful dress perfectly captures the essence of a sunset with its gorgeous ombre color gradient. Starting with a soft peachy pink at the top, it transitions into a vibrant orange in the middle, and finishes with a bright yellow at the hem, just like the colors of the sky as the sun dips below the horizon. The dress features a simple, casual silhouette with short cap sleeves and appears to have a comfortable, relaxed fit that would flatter many body types. The tie-dye effect gives it a bohemian, carefree vibe that's perfect for summer outings, beach vacations, or casual evening events. I recommend this sunset-colored dress because it's eye-catching, versatile, and embodies that magical sunset glow that can brighten any occasion. The colors would complement warm skin tones particularly well, and you could easily dress it up with gold accessories or keep it casual with simple sandals.

This vibrant tie-dye dress perfectly captures the essence of a sunset with its beautiful gradient of colors. The dress features bands of golden yellow, fiery orange, and rich pink that blend together seamlessly, reminiscent of the sky at dusk. The strapless design showcases the shoulders elegantly, while the gathered waist creates a flattering silhouette that flows into a comfortable, knee-length skirt. This dress would be an excellent choice for summer events, beach vacations, or casual outings. The sunset color palette is not only on-trend but also versatile enough to pair with neutral accessories. I recommend this piece for its eye-catching colors, comfortable fit, and the way it embodies the warm, relaxed feeling of watching a beautiful sunset.

Without any image metadata, OpenSearch finds images of sunset-colored dresses and responds with accurate and colorful commentary.

Conclusion

The AI search flow builder is available in all AWS Regions that support OpenSearch 2.19+ on OpenSearch Service. To learn more, refer to Building AI search workflows in OpenSearch Dashboards, and the available tutorials on GitHub, which demonstrate how to integrate various AI models from Amazon Bedrock, SageMaker, and other AWS and third-party AI services.

About the authors

Dylan Tong is a Senior Product Manager at Amazon Web Services. He leads the product initiatives for AI and machine learning (ML) on OpenSearch, including OpenSearch's vector database capabilities. Dylan has decades of experience working directly with customers and creating products and solutions in the database, analytics, and AI/ML domain. Dylan holds a BSc and MEng degree in Computer Science from Cornell University.

Tyler Ohlsen is a software engineer at Amazon Web Services focusing mostly on the OpenSearch Anomaly Detection and Flow Framework plugins.

Mingshi Liu is a Machine Learning Engineer at OpenSearch, primarily contributing to the OpenSearch, ML Commons, and Search Processors repos. Her work focuses on developing and integrating machine learning features for search technologies and other open-source projects.

Ka Ming Leung (Ming) is a Senior UX designer at OpenSearch, focusing on ML-powered search developer experiences as well as designing observability and cluster management solutions.
