In 2023, one popular perspective on AI went like this: Sure, it can generate lots of impressive text, but it can’t truly reason; it’s all shallow mimicry, just “stochastic parrots” squawking.

At the time, it was easy to see where this perspective was coming from. Artificial intelligence had moments of being impressive and interesting, but it also consistently failed basic tasks. Tech CEOs said they could just keep making the models bigger and better, but tech CEOs say things like that all the time, including when, behind the scenes, everything is held together with glue, duct tape, and low-wage workers.

It’s now 2025. I still hear this dismissive perspective a lot, particularly when I’m talking to academics in linguistics and philosophy. Many of the highest-profile efforts to pop the AI bubble, like the recent Apple paper purporting to find that AIs can’t truly reason, linger on the claim that the models are just bullshit generators that aren’t getting much better and won’t get much better.

But I increasingly think that repeating these claims is doing our readers a disservice, and that the academic world is failing to step up and grapple with AI’s most important implications.

I know that’s a bold claim. So let me back it up.

“The illusion of thinking’s” illusion of relevance

The instant the Apple paper was posted online (it hasn’t yet been peer reviewed), it took off. Videos explaining it racked up hundreds of thousands of views. People who may not generally read much about AI heard about the Apple paper. And while the paper itself acknowledged that AI performance on “moderate difficulty” tasks was improving, many summaries of its takeaways focused on the headline claim of “a fundamental scaling limitation in the thinking capabilities of current reasoning models.”

For much of the audience, the paper confirmed something they badly wanted to believe: that generative AI doesn’t really work, and that this won’t change any time soon.

The paper looks at the performance of modern, top-tier language models on “reasoning tasks,” which are basically complicated puzzles. Past a certain point, that performance becomes terrible, which the authors say demonstrates the models haven’t developed true planning and problem-solving skills. “These models fail to develop generalizable problem-solving capabilities for planning tasks, with performance collapsing to zero beyond a certain complexity threshold,” as the authors write.

That was the topline conclusion many people took from the paper and the broader discussion around it. But if you dig into the details, you’ll see that this finding is not surprising, and it doesn’t actually say that much about AI.

Much of the reason the models fail at the given problem in the paper is not that they can’t solve it, but that they can’t express their answers in the specific format the authors chose to require.

If you ask them to write a program that outputs the correct answer, they do so effortlessly. By contrast, if you ask them to provide the answer in text, line by line, they eventually reach their limits.

That seems like an interesting limitation of current AI models, but it doesn’t have a lot to do with “generalizable problem-solving capabilities” or “planning tasks.”
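To make the gap between those two output formats concrete, here is a minimal Python sketch for Tower of Hanoi, the puzzle this piece mentions from the paper. It is an illustration only, not the paper’s evaluation setup or any model’s actual output; the function names and the peg labels `A`, `B`, and `C` are assumptions made for this example.

```python
def hanoi_moves(n, source="A", target="C", spare="B"):
    """Yield (disk, from_peg, to_peg) moves that solve an n-disk puzzle."""
    if n == 0:
        return
    # Move the n-1 smaller disks out of the way, onto the spare peg.
    yield from hanoi_moves(n - 1, source, spare, target)
    # Move the largest remaining disk to its destination.
    yield (n, source, target)
    # Move the n-1 smaller disks from the spare peg onto the largest disk.
    yield from hanoi_moves(n - 1, spare, target, source)


def move_count(n):
    """Count the moves in the full written-out solution for n disks."""
    return sum(1 for _ in hanoi_moves(n))


if __name__ == "__main__":
    # The program stays the same size; the written-out answer does not.
    for n in (3, 10, 15):
        print(f"{n} disks: {move_count(n)} moves (2**n - 1 = {2**n - 1})")
```

The program is a handful of lines no matter how many disks there are, while the move-by-move answer it generates doubles in length with each added disk. Writing that list out by hand without a single slip is a different kind of test from knowing how to solve the puzzle.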

Imagine someone arguing that humans can’t “really” do “generalizable” multiplication because, while we can calculate 2-digit multiplication problems with no problem, most of us will screw up somewhere along the way if we’re trying to do 10-digit multiplication problems in our heads. The issue isn’t that we “aren’t general reasoners.” It’s that we’re not evolved to juggle large numbers in our heads, largely because we never needed to do so.

If the reason we care about “whether AIs reason” is fundamentally philosophical, then exploring at what point problems get too long for them to solve is relevant, as a philosophical argument. But I think that most people care about what AI can and cannot do for far more practical reasons.

AI is taking your job, whether it can “truly reason” or not

I fully expect my job to be automated in the next few years. I don’t want that to happen, obviously. But I can see the writing on the wall. I regularly ask the AIs to write this newsletter, just to see where the competition is at. It’s not there yet, but it’s getting better all the time.

Employers are doing that too. Entry-level hiring in professions like law, where entry-level tasks are AI-automatable, appears to be already contracting. The job market for recent college graduates looks ugly.

The optimistic case around what’s happening goes something like this: “Sure, AI will eliminate a lot of jobs, but it’ll create even more new jobs.” That more positive transition might well happen, though I don’t want to count on it, but it would still mean a lot of people abruptly finding all of their skills and training suddenly useless, and therefore needing to rapidly develop a completely new skill set.

It’s this possibility, I think, that looms large for many people in industries like mine, which are already seeing AI replacements creep in. It’s precisely because this prospect is so scary that declarations that AIs are just “stochastic parrots” that can’t really think are so appealing. We want to hear that our jobs are safe and the AIs are a nothingburger.

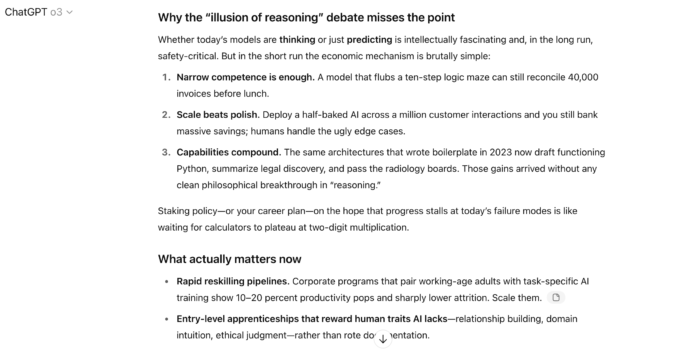

However actually, you’ll be able to’t reply the query of whether or not AI will take your job as regards to a thought experiment, or as regards to the way it performs when requested to jot down down all of the steps of Tower of Hanoi puzzles. The best way to reply the query of whether or not AI will take your job is to ask it to strive. And, uh, right here’s what I bought once I requested ChatGPT to jot down this part of this article:

Is it “truly reasoning”? Maybe not. But it doesn’t need to be to render me potentially unemployable.

“Whether or not they are simulating thinking has no bearing on whether or not the machines are capable of rearranging the world for better or worse,” Cambridge professor of AI philosophy and governance Harry Law argued in a recent piece, and I think he’s unambiguously right. If Vox hands me a pink slip, I don’t think I’ll get anywhere if I argue that I shouldn’t be replaced because o3, above, can’t solve a sufficiently complicated Towers of Hanoi puzzle, which, guess what, I can’t do either.

Critics are making themselves irrelevant when we need them most

In his piece, Law surveys the state of AI criticisms and finds it fairly grim. “Lots of recent critical writing about AI…read like extremely wishful thinking about what exactly systems can and cannot do.”

This is my experience, too. Critics are often trapped in 2023, giving accounts of what AI can and cannot do that haven’t been correct for two years. “Many (academics) dislike AI, so they don’t follow it closely,” Law argues. “They don’t follow it closely so they still think that the criticisms of 2023 hold water. They don’t. And that’s regrettable because academics have important contributions to make.”

But of course, for the employment effects of AI (and, in the longer run, for the global catastrophic risk concerns they may present), what matters isn’t whether AIs can be induced to make silly mistakes, but what they can do when set up for success.

I have my own list of “easy” problems AIs still can’t solve (they’re quite bad at chess puzzles), but I don’t think that kind of work should be sold to the public as a glimpse of the “real truth” about AI. And it definitely doesn’t debunk the really quite scary future that experts increasingly believe we’re headed toward.

A version of this story originally appeared in the Future Perfect newsletter.