Data lake architectures help organizations offload data from premium storage systems without losing the ability to query and analyze the data. This architecture can be useful for geospatial data, where builders might have terabytes of infrequently accessed data in their databases that they want to maintain cost-effectively. However, this requires their data lake query engine to support geographic information systems (GIS) data types and functions.

Amazon Redshift supports querying spatial data, including the GEOMETRY and GEOGRAPHY data types and the functions used in querying GIS systems. Additionally, Amazon Redshift lets you query geospatial data both in your data lakes on Amazon S3 and in your Redshift data warehouse, giving you the choice of how to access your data. Furthermore, AWS Lake Formation and support for AWS Identity and Access Management (IAM) in Esri’s ArcGIS Pro give you a way to securely bridge data between your geospatial data lakes and map visualization tools. You can set up, manage, and secure geospatial data lakes in the cloud with a few clicks.

In this post, we walk through how to set up a geospatial data lake using Lake Formation and query the data with ArcGIS Pro using Amazon Redshift Serverless.

Solution overview

In our example, a county public health department has used Lake Formation to secure their data lake, which contains protected health information (PHI). Epidemiologists within the county want to create a map of the clinics providing vaccination for their communities. The county’s GIS analysts need access to the data lake to create the required maps without being able to access the PHI data.

This solution uses Lake Formation tags to allow column-level access in the database to the public information, which includes the clinic names, addresses, zip codes, and longitude/latitude coordinates, without allowing access to the PHI data within the same tables. We use Redshift Serverless and Amazon Redshift Spectrum to access this data from ArcGIS Pro, GIS mapping software from Esri, an AWS Partner.

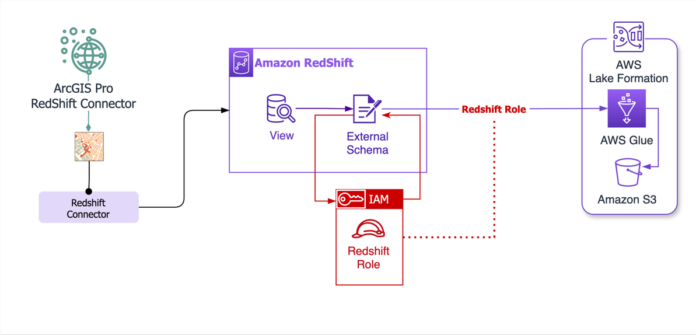

The next diagram reveals the structure for this resolution.

The next is a pattern schema for this submit.

| Description | Column Name | Geoproperty Tag |
| --- | --- | --- |
| Patient ID | patient_id | No |
| Clinic ID | clinic_id | Yes |
| Address of Clinic | clinic_address | Yes |
| Clinic Zip Code | clinic_zip | Yes |
| Clinic City | clinic_city | Yes |
| First Name Patient | first_name | No |
| Last Name Patient | last_name | No |
| Patient Address | patient_address | No |
| Patient Zip Code | patient_zip | No |
| Vaccination Type | vaccination_type | No |
| Latitude of Clinic | clinic_lat | Yes |
| Longitude of Clinic | clinic_long | Yes |
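To make the effect of the tag concrete, the following Python sketch models the sample schema as a mapping from column name to its geoproperty tag and filters a record down to the tagged columns. It only mimics the outcome of column-level access; Lake Formation enforces this on the service side. The helper names and the sample record are hypothetical and are not part of the solution.

```python
# Illustrative model of the sample schema: column name -> geoproperty tag.
# Lake Formation enforces access on the service side; this only mimics the effect.
GEOPROPERTY_TAGS = {
    "patient_id": False,
    "clinic_id": True,
    "clinic_address": True,
    "clinic_zip": True,
    "clinic_city": True,
    "first_name": False,
    "last_name": False,
    "patient_address": False,
    "patient_zip": False,
    "vaccination_type": False,
    "clinic_lat": True,
    "clinic_long": True,
}


def visible_columns(tags):
    """Return the column names tagged geoproperty = true, in schema order."""
    return [name for name, tagged in tags.items() if tagged]


def filter_record(record, tags):
    """Keep only the fields of a record whose column carries the tag."""
    allowed = set(visible_columns(tags))
    return {key: value for key, value in record.items() if key in allowed}


# Hypothetical record, made up for illustration only.
sample = {
    "patient_id": "p-001",
    "clinic_id": "c-42",
    "clinic_city": "Springfield",
    "first_name": "Jane",
    "clinic_lat": 39.78,
    "clinic_long": -89.65,
}
print(filter_record(sample, GEOPROPERTY_TAGS))
```

A GIS analyst querying through the tagged permissions would see only the clinic_ columns, which is what the filtered record contains.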

In the following sections, we walk through the steps to set up the solution:

Deploy the solution infrastructure using AWS CloudFormation.

Upload a CSV with sample data to an Amazon Simple Storage Service (Amazon S3) bucket and run an AWS Glue crawler to crawl the data.

Set up Lake Formation permissions.

Configure the Amazon Redshift Query Editor v2.

Set up the schemas in Amazon Redshift.

Create a view in Amazon Redshift.

Create a local database user for ArcGIS Pro.

Connect ArcGIS Pro to the Redshift database.

Prerequisites

You should have the following prerequisites:

Set up the infrastructure with AWS CloudFormation

To create the environment for the demo, complete the following steps:

Log in to the AWS Management Console as an AWS account administrator and a Lake Formation data lake administrator. This account needs to be both an account admin and a data lake admin for the template to complete.

Open the AWS CloudFormation console.

Choose Launch Stack.

The CloudFormation template creates the following components:

S3 bucket – samp-clinic-db-{ACCOUNT_ID}

AWS Glue database – samp-clinical-glue-db

AWS Glue crawler – samp-glue-crawler

Redshift Serverless workgroup – samp-clinical-rs-wg

Redshift Serverless namespace – samp-clinical-rs-ns

IAM role for Amazon Redshift – demo-RedshiftIAMRole-{UNIQUE_ID}

IAM role for AWS Glue – samp-clinical-glue-role

Lake Formation tag – geoproperty

Upload a CSV to the S3 bucket and run the AWS Glue crawler

The next step is to create a data lake in our demo environment and then use an AWS Glue crawler to populate the AWS Glue database and update the schema and metadata in the AWS Glue Data Catalog.

The CloudFormation stack created the S3 bucket we’ll use as well as the AWS Glue database and crawler. We have provided a fictitious test dataset that represents the patient and clinical information. Download the file and complete the following steps:

On the AWS CloudFormation console, open the stack you just launched.

On the Resources tab, choose the link to the S3 bucket.

Choose Upload and add the CSV file (data-with-geocode.csv), then choose Upload.

On the AWS Glue console, choose Crawlers in the navigation pane.

Select the crawler you created with the CloudFormation stack and choose Run.

The crawler run should take only about a minute to complete, and will populate a table named clinic-sample-s3_ACCOUNT_ID with a fictitious dataset.

Choose Tables in the navigation pane and open the table the crawler populated.

You will see that the dataset includes fields that contain PHI and personally identifiable information (PII).

We now have a database set up and the Data Catalog populated with the schema and metadata we’ll use for the rest of the demo.

Set up Lake Formation permissions

In this next set of steps, we demonstrate how to secure PHI data to maintain compliance while empowering GIS analysts to work effectively. To secure the data lake, we use AWS Lake Formation. To properly set up Lake Formation permissions, we need to gather details on how access to the data lake is established.

The Data Catalog provides metadata and schema information that allows services to access data within the data lake. To access the data lake from ArcGIS Pro, we use the ArcGIS Pro Redshift connector, which allows a connection from ArcGIS Pro to Amazon Redshift. Amazon Redshift can access the Data Catalog and provide connectivity to the data lake. The CloudFormation template created a Redshift Serverless instance and namespace and an IAM role that we’ll use to configure this connection. We still need to set up Lake Formation permissions so that GIS analysts can access only publicly available fields and not those containing PHI or PII. We will assign a Lake Formation tag to the columns containing the publicly available information and grant the GIS analysts access to columns with this tag.

By default, the Lake Formation configuration allows Super access to IAMAllowedPrincipals; this is to maintain backward compatibility, as detailed in Changing the default settings for your data lake. To demonstrate a more secure configuration, we’ll remove this default configuration.

On the Lake Formation console, choose Administration in the navigation pane.

In the Data Catalog settings section, make sure Use only IAM access control for new databases and Use only IAM access control for new tables in new databases are unchecked.

In the navigation pane, under Permissions, choose Data permissions.

Select IAMAllowedPrincipals and choose Revoke.

Choose Tables in the navigation pane.

Open the table clinic-sample-s3_ACCOUNT_ID and choose Edit schema.

Select the fields beginning with clinic_ and choose Edit LF-Tags.

The CloudFormation stack created a Lake Formation tag named geoproperty. Assign geoproperty as the key and true for the value on all the clinic_ fields, then choose Save.

Next, we need to grant the Amazon Redshift IAM role permission to access fields tagged with geoproperty = true.

Choose Data lake permissions, then choose Grant.

For the IAM role, choose demo-RedshiftIAMRole-UNIQUE_ID.

Select geoproperty for the key and true for the value.

Under Database permissions, select Describe, and under Table permissions, select Select and Describe.
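The console grant above could also be expressed programmatically. The following Python sketch only builds the request dictionaries and does not call AWS. It assumes the shape accepted by the Lake Formation GrantPermissions API (for example through the boto3 Lake Formation client's grant_permissions method); verify the field names against the current API reference before relying on it. The account ID and role suffix in the ARN are placeholders.

```python
def build_lf_tag_grants(role_arn, tag_key="geoproperty", tag_value="true"):
    """Build two grant requests keyed on an LF-Tag expression.

    One request covers databases (DESCRIBE) and one covers tables
    (SELECT and DESCRIBE), mirroring the console steps.
    """
    expression = [{"TagKey": tag_key, "TagValues": [tag_value]}]
    principal = {"DataLakePrincipalIdentifier": role_arn}

    def request(resource_type, permissions):
        return {
            "Principal": principal,
            "Resource": {
                "LFTagPolicy": {
                    "ResourceType": resource_type,
                    "Expression": expression,
                }
            },
            "Permissions": permissions,
        }

    return [
        request("DATABASE", ["DESCRIBE"]),
        request("TABLE", ["SELECT", "DESCRIBE"]),
    ]


# Placeholder ARN: substitute your own account ID and role name.
ROLE_ARN = "arn:aws:iam::111122223333:role/demo-RedshiftIAMRole-EXAMPLE"
grants = build_lf_tag_grants(ROLE_ARN)
for grant in grants:
    # Assumption: with boto3 installed and credentials configured, each dict
    # could be passed as boto3.client("lakeformation").grant_permissions(**grant).
    print(grant["Resource"]["LFTagPolicy"]["ResourceType"], grant["Permissions"])
```

Keying the grant on the tag expression rather than on individual columns means that columns tagged later are covered without another grant.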

Configure the Amazon Redshift Query Editor v2

Next, we need to perform the initial configuration of Amazon Redshift required for database operations. We use an AWS Secrets Manager secret created by the template to make sure password access is managed securely in accordance with AWS best practices.

On the Amazon Redshift console, choose Query editor v2.

When you first start Amazon Redshift, a one-time configuration for the account appears. For this post, leave the options at their defaults and choose Configure account.

For more information about these options, refer to Configuring your AWS account.

The query editor requires credentials to connect to the serverless instance; these were created by the template and stored in Secrets Manager.

Select Other ways to connect, then select AWS Secrets Manager.

For Secret, select (Redshift-admin-credentials).

Choose Save.

Set up schemas in Amazon Redshift

An external schema in Amazon Redshift is a feature used to reference schemas that exist in external data sources. For information on creating external schemas, see External schemas in Amazon Redshift Spectrum. We use an external schema to provide access to the data lake in Amazon Redshift. From ArcGIS Pro, we’ll connect to Amazon Redshift to access the geospatial data.

The IAM role used in the creation of the external schema needs to be associated with the Redshift namespace. This has already been set up by the CloudFormation template, but it’s good practice to verify that the role is set up correctly before proceeding.

On the Redshift Serverless console, choose Namespace configuration in the navigation pane.

Choose the namespace (sample-rs-namespace).

On the Security and encryption tab, you should see the IAM role created by CloudFormation. If this role or the namespace isn’t present, verify the stack in AWS CloudFormation before proceeding.

Copy the ARN of the role for use in a later step.

Choose Query data to return to the query editor.

In the query editor, enter the following SQL command; make sure you replace the example role ARN with your own. This SQL command creates an external schema that uses the same Redshift role associated with our namespace to connect to the AWS Glue database.

CREATE EXTERNAL SCHEMA samp_clinic_sch_ext FROM DATA CATALOG

database 'sample-glue-database'

IAM_ROLE 'arn:aws:iam::{ACCOUNT_ID}:role/demo-RedshiftIAMRole-{UNIQUE_ID}';
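Because the statement contains placeholders, it can be convenient to fill them in with a small helper before pasting the result into the query editor. The following Python sketch is one way to do that. The function name and the example account ID and role suffix are hypothetical, and the default database and schema names are simply copied from the statement in this post.

```python
import re


def external_schema_sql(account_id, role_name,
                        glue_database="sample-glue-database",
                        schema="samp_clinic_sch_ext"):
    """Return the CREATE EXTERNAL SCHEMA statement with placeholders filled in."""
    # AWS account IDs are 12 digits; reject anything else early.
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("account_id must be a 12-digit AWS account ID")
    role_arn = f"arn:aws:iam::{account_id}:role/{role_name}"
    return (
        f"CREATE EXTERNAL SCHEMA {schema} FROM DATA CATALOG\n"
        f"database '{glue_database}'\n"
        f"IAM_ROLE '{role_arn}';"
    )


# Placeholder values for illustration only.
statement = external_schema_sql("111122223333", "demo-RedshiftIAMRole-EXAMPLE")
print(statement)
```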

In the query editor, run a SELECT query against the table in the external schema:

SELECT * FROM "dev"."samp_clinic_sch_ext"."clinic-sample_s3_{ACCOUNT_ID}";

Because the associated role has been granted access to columns tagged with geoproperty = true, only those fields will be returned, as shown in the following screenshot (the data in this example is fictionalized).

Use the following command to create a local schema in Amazon Redshift. The external schema can’t be updated; we’ll use this local schema to add a geometry field with a Redshift function.

CREATE SCHEMA samp_clinic_sch_local;

Create a view in Amazon Redshift

For the data to be viewable from ArcGIS Pro, we need to create a view. Now that the schemas have been established, we can create the view that ArcGIS Pro will access.

Amazon Redshift provides many geospatial functions that can be used to create views with fields that ArcGIS Pro uses to add points onto a map. We will use one of these functions because the dataset contains latitude and longitude.

Use the following SQL code in the Amazon Redshift Query Editor to create a new view named clinic_location_view. Replace {ACCOUNT_ID} with your own account ID.

CREATE OR REPLACE VIEW "samp_clinic_sch_local"."clinic_location_view" AS

SELECT

clinic_id as id,

clinic_lat as lat,

clinic_long as long,

ST_MAKEPOINT(long, lat) as geom

FROM

"dev"."samp_clinic_sch_ext"."clinic-sample_s3_{ACCOUNT_ID}"

WITH NO SCHEMA BINDING;

The new view that’s created under your local schema will have a column named geom containing map-based points that ArcGIS Pro can use to add points during map creation. The points in this example are for the clinics providing vaccines. In a real-world scenario, as new clinics are built and their data is added to the data lake, their locations would be added to the map created using this data.
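One detail worth noting in the view is the argument order: ST_MakePoint takes the x coordinate (longitude) first and the y coordinate (latitude) second. The following Python sketch mirrors that ordering by producing a well-known text (WKT) point and rejecting values that are out of range, which is a quick way to catch swapped coordinates. The function name and the sample coordinates are hypothetical.

```python
def make_point_wkt(longitude, latitude):
    """Build a WKT point with x = longitude and y = latitude.

    This mirrors the argument order of ST_MakePoint(long, lat) in the view.
    """
    if not -180.0 <= longitude <= 180.0:
        raise ValueError(f"longitude out of range: {longitude}")
    if not -90.0 <= latitude <= 90.0:
        raise ValueError(f"latitude out of range: {latitude}")
    return f"POINT({longitude} {latitude})"


# Made-up clinic coordinates for illustration.
print(make_point_wkt(-89.65, 39.78))
```

Passing the values in the wrong order often fails the range check (for example, a longitude of -120 used as a latitude), although coordinates that happen to fit both ranges would not be caught.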

Create a local database user for ArcGIS Pro

For this demo, we use a database user and group to provide access for ArcGIS Pro clients. Enter the following SQL code into the Amazon Redshift Query Editor to create a database user and group:

CREATE USER dbuser WITH PASSWORD 'SET_PASSWORD_HERE';

CREATE GROUP esri_developer_group;

ALTER GROUP esri_developer_group ADD USER dbuser;

After the commands are complete, use the following code to grant permissions to the group:

GRANT USAGE ON SCHEMA samp_clinic_sch_local TO GROUP esri_developer_group;

ALTER DEFAULT PRIVILEGES IN SCHEMA samp_clinic_sch_local GRANT SELECT ON TABLES TO GROUP esri_developer_group;

GRANT SELECT ON ALL TABLES IN SCHEMA samp_clinic_sch_local TO GROUP esri_developer_group;

Connect ArcGIS Pro to the Redshift database

To add the database connection to ArcGIS Pro, you need the endpoint for the Redshift Serverless workgroup. You can access the endpoint information on the sample-rs-wg workgroup details page on the Redshift Serverless console. The Redshift namespaces and workgroups are listed by default, as shown in the following screenshot.

You can copy the endpoint in the General information section. This endpoint needs to be modified; the :5439/dev suffix must be removed when configuring the connector in ArcGIS Pro.
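The endpoint edit is easy to get wrong by hand, so here is a small Python sketch of the same trimming: it drops the database path and the port and keeps only the host name. The function name is hypothetical, and the example endpoint uses a placeholder account ID and Region.

```python
def server_from_endpoint(endpoint):
    """Return only the host name from a 'host:port/database' endpoint string."""
    host = endpoint.strip()
    # Drop the '/database' part, if present.
    host = host.split("/", 1)[0]
    # Drop the ':port' part, if present.
    host = host.split(":", 1)[0]
    return host


# Placeholder endpoint for illustration; copy the real one from the console.
copied = "sample-rs-wg.111122223333.us-east-1.redshift-serverless.amazonaws.com:5439/dev"
print(server_from_endpoint(copied))
```

The result is the value to enter in the Server field of the ArcGIS Pro connection dialog.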

Open ArcGIS Pro with the project file you want to add the Redshift connection to.

On the menu, choose Insert and then Connections, Database, and New Database Connection.

For Database Platform, choose Amazon Redshift.

For Server, insert the endpoint you copied (remove everything following .com from the endpoint).

For Database, choose your database.

If your ArcGIS Pro client doesn’t have access to the endpoint, you’ll receive an error during this step. A network path must exist between the ArcGIS Pro client and the Redshift Serverless endpoint. You can set up the network path with AWS Direct Connect, AWS Site-to-Site VPN, or AWS Client VPN. Although it’s not recommended for security reasons, you can also configure Amazon Redshift with a publicly accessible endpoint. Make sure you consult your security and network teams for best practices and policy guidance before allowing public access to your Redshift Serverless instance.

If a network path exists and you’re having issues connecting, verify that the security group rules allow inbound communication from your ArcGIS Pro subnet over the port your Redshift Serverless instance is running on. The default port is 5439, but you can configure a range of ports depending on your environment; see Connecting to Amazon Redshift Serverless for more information.

If connectivity is successful, ArcGIS Pro will add the Amazon Redshift connection under Connection File Name.

Choose OK.

Choose the connection to display the view that was created to include geometry (clinic_location_view).

Choose (right-click) the view and choose Add To Current Map.

ArcGIS Pro will add the points from the view onto the map. The final map displayed has the symbology edited to use red crosses to represent the clinics instead of dots.

Clean up

After you have finished the demo, complete the following steps to clean up your resources:

On the Amazon S3 console, open the bucket created by the CloudFormation stack and delete the data-with-geocode.csv file.

On the AWS CloudFormation console, delete the demo stack to remove the resources it created.

Conclusion

In this post, we reviewed how to set up Redshift Serverless to use geospatial data contained within a data lake to enhance maps in ArcGIS Pro. This technique helps builders and GIS analysts use available datasets in data lakes and transform them in Amazon Redshift to further enrich the data before presenting it on a map. We also showed how to secure a data lake using Lake Formation, crawl a geospatial dataset with AWS Glue, and visualize the data in ArcGIS Pro.

For additional best practices for storing geospatial data in Amazon S3 and querying it with Amazon Redshift, see How to partition your geospatial data lake for analysis with Amazon Redshift. We invite you to leave feedback in the comments section.

About the authors

Jeremy Spell is a Cloud Infrastructure Architect working with Amazon Web Services (AWS) Professional Services. He enjoys architecting and building solutions for customers. In his free time, Jeremy makes Texas-style BBQ and spends time with his family and church community.

Jeff Demuth is a solutions architect who joined Amazon Web Services (AWS) in 2016. He focuses on the geospatial community and is passionate about geographic information systems (GIS) and technology. Outside of work, Jeff enjoys traveling, building Internet of Things (IoT) applications, and tinkering with the latest gadgets.
