Researchers at the University of Illinois Urbana-Champaign and the University of Virginia have developed a new model architecture that could lead to more robust AI systems with more powerful reasoning capabilities.

Called an energy-based transformer (EBT), the architecture shows a natural ability to use inference-time scaling to solve complex problems. For the enterprise, this could translate into cost-effective AI applications that can generalize to novel situations without the need for specialized fine-tuned models.

The challenge of System 2 thinking

In psychology, human thought is often divided into two modes: System 1, which is fast and intuitive, and System 2, which is slow, deliberate and analytical. Current large language models (LLMs) excel at System 1-style tasks, but the AI industry is increasingly focused on enabling System 2 thinking to tackle more complex reasoning challenges.

Reasoning models use various inference-time scaling techniques to improve their performance on difficult problems. One popular method is reinforcement learning (RL), used in models like DeepSeek-R1 and OpenAI’s “o-series” models, where the AI is rewarded for producing reasoning tokens until it reaches the correct answer. Another approach, often called best-of-n, involves generating multiple potential answers and using a verification mechanism to select the best one.
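As a rough illustration of best-of-n, the sketch below pairs a random generator with a separate scoring function. Both `generate_candidate` and `verifier_score` are hypothetical stand-ins invented for this example (the task is a toy one: find the integer whose square is closest to a target); they are not taken from the paper or from any production system.

```python
import random

def generate_candidate(rng, low=0, high=20):
    """Hypothetical generator: proposes a random integer answer."""
    return rng.randint(low, high)

def verifier_score(target, candidate):
    """Hypothetical verifier: higher is better, 0 means candidate**2 == target."""
    return -abs(candidate * candidate - target)

def best_of_n(target, n, seed=0):
    """Sample n candidates and keep the one the verifier scores highest."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    candidates = [generate_candidate(rng) for _ in range(n)]
    return max(candidates, key=lambda c: verifier_score(target, c))

if __name__ == "__main__":
    for n in (1, 5, 50):
        answer = best_of_n(target=144, n=n)
        print(n, answer, verifier_score(144, answer))
```

Because the generator and the verifier are separate components here, the quality of the result depends on how well the verifier can judge answers, which is one reason the technique is easiest to apply to problems with a clear correctness check.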

However, these methods have significant drawbacks. They are often limited to a narrow range of easily verifiable problems, like math and coding, and can degrade performance on other tasks such as creative writing. Furthermore, recent evidence suggests that RL-based approaches might not be teaching models new reasoning skills, instead just making them more likely to use successful reasoning patterns they already know. This limits their ability to solve problems that require true exploration and are beyond their training regime.

Energy-based models (EBM)

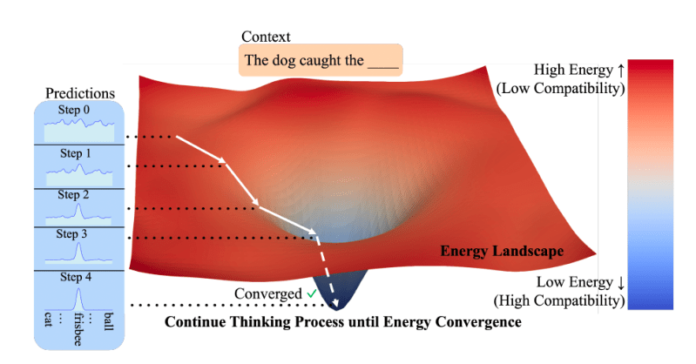

The architecture proposes a different approach based on a class of models known as energy-based models (EBMs). The core idea is simple: Instead of directly generating an answer, the model learns an “energy function” that acts as a verifier. This function takes an input (like a prompt) and a candidate prediction and assigns a value, or “energy,” to it. A low energy score indicates high compatibility, meaning the prediction is a good fit for the input, while a high energy score signifies a poor match.

Applying this to AI reasoning, the researchers propose in a paper that devs should view “thinking as an optimization procedure with respect to a learned verifier, which evaluates the compatibility (unnormalized probability) between an input and candidate prediction.” The process begins with a random prediction, which is then progressively refined by minimizing its energy score and exploring the space of possible solutions until it converges on a highly compatible answer. This approach is built on the principle that verifying a solution is often much easier than generating one from scratch.
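The refinement loop described here can be sketched in a few lines of Python. This is a minimal toy, not the researchers' implementation: the hand-written `energy` function stands in for a learned one (it is lowest when the prediction equals twice the input), and the step size and step count are arbitrary assumptions.

```python
import random

def energy(x, y):
    """Toy stand-in for a learned energy function: low when y is close to 2 * x."""
    return (y - 2.0 * x) ** 2

def energy_grad(x, y):
    """Derivative of the toy energy with respect to the prediction y."""
    return 2.0 * (y - 2.0 * x)

def think(x, steps=50, step_size=0.1, seed=0):
    """Start from a random prediction and refine it by gradient descent on the energy."""
    rng = random.Random(seed)
    y = rng.uniform(-10.0, 10.0)  # random initial prediction
    for _ in range(steps):
        y -= step_size * energy_grad(x, y)  # move toward lower energy
    return y

if __name__ == "__main__":
    prediction = think(3.0)
    print(prediction, energy(3.0, prediction))
```

In this toy the energy is a simple bowl, so gradient descent always reaches the minimum; a learned energy over text or images would be far less well behaved.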

This “verifier-centric” design addresses three key challenges in AI reasoning. First, it allows for dynamic compute allocation, meaning models can “think” for longer on harder problems and shorter on easy problems. Second, EBMs can naturally handle the uncertainty of real-world problems where there isn’t one clear answer. Third, they act as their own verifiers, eliminating the need for external models.
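One way to picture dynamic compute allocation is a refinement loop that stops as soon as the energy falls below a threshold, so the number of steps varies per problem. The sketch below is an assumption-laden toy: “difficulty” is modeled as a flatter energy landscape (a smaller `curvature`), and the function name, tolerance and step size are invented for illustration rather than taken from the paper.

```python
def refine_until_converged(target, curvature, step_size=0.1, tol=1e-6, max_steps=1000):
    """Minimize the toy energy curvature * (y - target)**2, stopping once it drops below tol.

    Returns the refined prediction and the number of steps actually used.
    """
    y = 0.0  # fixed starting prediction, for reproducibility
    for step in range(max_steps):
        if curvature * (y - target) ** 2 < tol:
            return y, step  # converged: stop spending compute
        y -= step_size * 2.0 * curvature * (y - target)
    return y, max_steps

# An "easy" problem (steep energy bowl) versus a "hard" one (flat bowl).
_, easy_steps = refine_until_converged(target=1.0, curvature=1.0)
_, hard_steps = refine_until_converged(target=1.0, curvature=0.1)

if __name__ == "__main__":
    print("easy:", easy_steps, "hard:", hard_steps)
```

The flat landscape needs many more updates to reach the same tolerance, which is the sense in which the same loop spends more compute on the harder case.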

Unlike other systems that use separate generators and verifiers, EBMs combine both into a single, unified model. A key advantage of this arrangement is better generalization. Because verifying a solution on new, out-of-distribution (OOD) data is often easier than generating a correct answer, EBMs can better handle unfamiliar scenarios.

Despite their promise, EBMs have historically struggled with scalability. To solve this, the researchers introduce EBTs, which are specialized transformer models designed for this paradigm. EBTs are trained to first verify the compatibility between a context and a prediction, then refine predictions until they find the lowest-energy (most compatible) output. This process effectively simulates a thinking process for every prediction. The researchers developed two EBT variants: A decoder-only model inspired by the GPT architecture, and a bidirectional model similar to BERT.

Energy-based transformer (source: GitHub)

The architecture of EBTs makes them flexible and compatible with various inference-time scaling techniques. “EBTs can generate longer CoTs, self-verify, do best-of-N (or) you can sample from many EBTs,” Alexi Gladstone, a PhD student in computer science at the University of Illinois Urbana-Champaign and lead author of the paper, told VentureBeat. “The best part is, all of these capabilities are learned during pretraining.”

EBTs in action

The researchers compared EBTs against established architectures: the popular Transformer++ recipe for text generation (discrete modalities) and the diffusion transformer (DiT) for tasks like video prediction and image denoising (continuous modalities). They evaluated the models on two main criteria: “Learning scalability,” or how efficiently they train, and “thinking scalability,” which measures how performance improves with more computation at inference time.

During pretraining, EBTs demonstrated superior efficiency, achieving an up to 35% higher scaling rate than Transformer++ across data, batch size, parameters and compute. This means EBTs can be trained faster and more cheaply.

At inference, EBTs also outperformed existing models on reasoning tasks. By “thinking longer” (using more optimization steps) and performing “self-verification” (generating multiple candidates and choosing the one with the lowest energy), EBTs improved language modeling performance by 29% more than Transformer++. “This aligns with our claims that because traditional feed-forward transformers cannot dynamically allocate additional computation for each prediction being made, they are unable to improve performance for each token by thinking for longer,” the researchers write.
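Self-verification matters most when the energy landscape has more than one valley, because a single refinement run can settle in a poor one. The toy below illustrates the idea under stated assumptions: the hand-written `energy` has its lowest point near y = -1 and a shallower local minimum near y = +1, and every name and constant is hypothetical. Unlike generic best-of-n, the same energy function both guides the refinement and picks the winner.

```python
import random

def energy(y):
    """Toy energy with a global minimum near y = -1 and a local minimum near y = +1."""
    return (y * y - 1.0) ** 2 + 0.3 * y

def energy_grad(y):
    """Derivative of the toy energy with respect to y."""
    return 4.0 * y * (y * y - 1.0) + 0.3

def refine(y, steps, step_size=0.05):
    """'Think longer' by taking more gradient steps on the energy."""
    for _ in range(steps):
        y -= step_size * energy_grad(y)
    return y

def self_verify(n_candidates, steps=100, seed=0):
    """Refine several random starts and keep the one with the lowest energy."""
    rng = random.Random(seed)
    starts = [rng.uniform(-1.5, 1.5) for _ in range(n_candidates)]
    refined = [refine(y, steps) for y in starts]
    return min(refined, key=energy)

if __name__ == "__main__":
    for n in (1, 8):
        best = self_verify(n)
        print(n, best, energy(best))
```

With a fixed seed, the candidate pool for a larger `n_candidates` contains the pool for a smaller one, so adding candidates can never make the selected energy worse in this sketch.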

For image denoising, EBTs achieved better results than DiTs while using 99% fewer forward passes.

Crucially, the study found that EBTs generalize better than the other architectures. Even with the same or worse pretraining performance, EBTs outperformed existing models on downstream tasks. The performance gains from System 2 thinking were most substantial on data that was further out-of-distribution (different from the training data), suggesting that EBTs are particularly robust when faced with novel and challenging tasks.

The researchers suggest that “the benefits of EBTs’ thinking are not uniform across all data but scale positively with the magnitude of distributional shifts, highlighting thinking as a critical mechanism for robust generalization beyond training distributions.”

The benefits of EBTs are important for two reasons. First, they suggest that at the massive scale of today’s foundation models, EBTs could significantly outperform the classic transformer architecture used in LLMs. The authors note that “at the scale of modern foundation models trained on 1,000X more data with models 1,000X larger, we expect the pretraining performance of EBTs to be significantly better than that of the Transformer++ recipe.”

Second, EBTs show much better data efficiency. This is a critical advantage in an era where high-quality training data is becoming a major bottleneck for scaling AI. “As data has become one of the major limiting factors in further scaling, this makes EBTs especially appealing,” the paper concludes.

Despite its different inference mechanism, the EBT architecture is highly compatible with the transformer, making it possible to use EBTs as a drop-in replacement for current LLMs.

“EBTs are very compatible with current hardware/inference frameworks,” Gladstone said, including speculative decoding using feed-forward models on both GPUs and TPUs. He said he is also confident they can run on specialized accelerators such as LPUs, work with optimization algorithms such as FlashAttention-3, and be deployed through common inference frameworks like vLLM.

For developers and enterprises, the strong reasoning and generalization capabilities of EBTs could make them a powerful and reliable foundation for building the next generation of AI applications. “Thinking longer can broadly help on almost all enterprise applications, but I think the most exciting will be those requiring more important decisions, safety or applications with limited data,” Gladstone said.