As organizations scale their Amazon Web Services (AWS) infrastructure, they often encounter challenges in orchestrating data and analytics workloads across multiple AWS accounts and AWS Regions. While a multi-account strategy is essential for organizational separation and governance, it creates complexity in maintaining secure data pipelines and managing fine-grained permissions, particularly when different teams manage resources in separate accounts.

Amazon Managed Workflows for Apache Airflow (Amazon MWAA) is a managed orchestration service for Apache Airflow that you can use to set up and operate data pipelines in the AWS Cloud at scale. Apache Airflow is an open source tool used to programmatically author, schedule, and monitor sequences of processes and tasks, referred to as workflows. With Amazon MWAA, you can use Apache Airflow to create workflows without having to manage the underlying infrastructure for scalability, availability, and security.

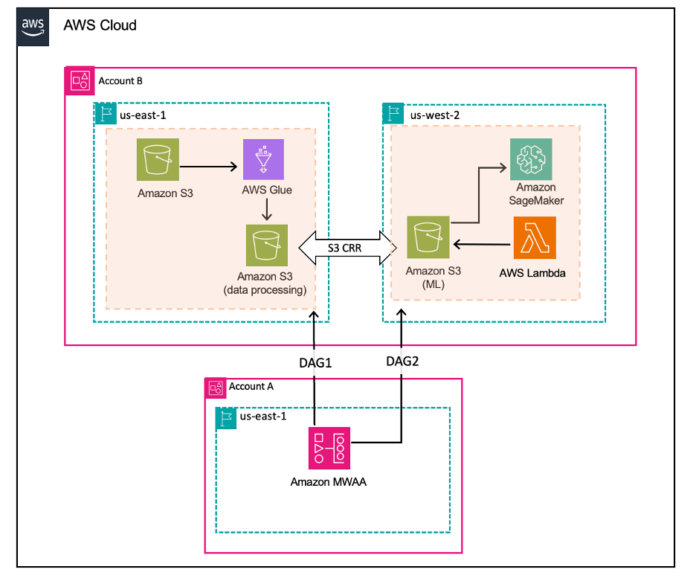

In this blog post, we demonstrate how to use Amazon MWAA for centralized orchestration, while distributing data processing and machine learning tasks across different AWS accounts and Regions for optimal performance and compliance.

Solution overview

Let’s consider an example of a global enterprise with distributed teams spread across different AWS Regions. Each team generates and processes valuable data that’s often required by other teams for comprehensive insights and streamlined operations. In this post, we consider a scenario where the data processing team sits in one Region, the machine learning (ML) team sits in another Region, and a central team manages the tasks between the two teams.

To address this complex challenge of orchestrating dependent teams across geographic regions, we’ve designed a data pipeline that spans multiple AWS accounts across different AWS Regions and is centrally orchestrated using Amazon MWAA. This design enables seamless data flow between teams, making sure that each team has access to the required data from other AWS accounts and Regions while maintaining compliance and operational efficiency.

Here’s a high-level overview of the architecture:

Centralized orchestration hub (Account A, us-east-1)

Amazon MWAA serves as the central orchestrator, coordinating operations across all regional data pipelines.

Regional data pipelines (Account B, two Regions)

Region 1 (for example, us-east-1)

Region 2 (for example, us-west-2)

This architecture maintains the concept of separate regional operations within Account B, with data processing in AWS Region 1 and ML in AWS Region 2. The central Amazon MWAA instance in Account A orchestrates these operations across AWS Regions, enabling different teams to work with the data they need. It enables scalability, automation, and streamlined data processing and ML workflows across multiple AWS environments.

Prerequisites

This solution requires two AWS accounts:

Account A: Centrally managed account for the Amazon MWAA environment.

Account B: Data processing and ML operations

Primary Region: US East (N. Virginia) (us-east-1): Data processing workloads

Secondary Region: US West (Oregon) (us-west-2): ML workloads

Step 1: Set up Account B (data processing and ML tasks)

Launch the CloudFormation template in us-east-1 and provide the Account A ID as input. This template creates the following three stacks:

Stack in us-east-1: Creates the required roles for stackset execution.

Second stack in us-east-1: Creates an S3 bucket, S3 folders, and AWS Glue job.

Stack in us-west-2: Creates an S3 bucket, S3 folders, an Amazon SageMaker config file, a cross-account role, and an AWS Lambda function.

Collect stack outputs: After successful deployment, gather the following output values from the created stacks. These outputs will be used in subsequent steps of the setup process.

From the us-east-1 stack:

The value of SourceBucketName

From the us-west-2 stack:

The value of DestinationBucketName

The value of CrossAccountRoleArn

Step 2: Set up Account A (central orchestration)

Launch the CloudFormation template in us-east-1. Provide the value of CrossAccountRoleArn from the Account B setup as input. This template does the following:

Deploys an Amazon MWAA environment.

Sets up an Amazon MWAA execution role with a cross-account trust policy.

Step 3: Set up S3 CRR and bucket policies in Account B

Launch the CloudFormation template in us-east-1 to set up cross-Region replication (CRR) between the S3 data processing bucket in us-east-1 and the ML pipeline bucket in us-west-2. Provide the values of SourceBucketName, DestinationBucketName, and AccountAId as input parameters.

This stack must be deployed after completing the Amazon MWAA setup. This sequence is necessary because you need to grant the Amazon MWAA execution role appropriate permissions to access both the source and destination buckets.
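The CloudFormation template takes care of the replication setup, so nothing below is required. As a rough illustration of what a cross-Region replication rule looks like, the following sketch builds a replication configuration dictionary. The rule ID, role ARN, and bucket name are hypothetical placeholders, and this is not the template's actual content. Note that S3 replication requires versioning to be enabled on both the source and destination buckets.

```python
def build_replication_configuration(replication_role_arn: str, destination_bucket: str) -> dict:
    """Build an S3 replication configuration that copies every object to one bucket."""
    return {
        # IAM role that Amazon S3 assumes to replicate objects
        "Role": replication_role_arn,
        "Rules": [
            {
                "ID": "replicate-to-ml-region",  # hypothetical rule name
                "Priority": 1,
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # an empty prefix matches all objects
                "DeleteMarkerReplication": {"Status": "Disabled"},
                "Destination": {"Bucket": f"arn:aws:s3:::{destination_bucket}"},
            }
        ],
    }


# Hypothetical values for illustration only
replication_config = build_replication_configuration(
    "arn:aws:iam::222222222222:role/example-s3-replication-role",
    "example-destination-bucket",
)
print(replication_config["Rules"][0]["Destination"]["Bucket"])
```

With boto3, a dictionary of this shape is what the S3 client's `put_bucket_replication` call accepts as its `ReplicationConfiguration` argument, applied to the source bucket.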

Step 4: Implement cross-account, cross-Region orchestration

IAM cross-account role in Account B

The stack in Step 1 created an AWS Identity and Access Management (IAM) role in Account B with a trust relationship that allows the Amazon MWAA execution role from Account A (the central orchestration account) to assume it. Additionally, this role is granted the required permissions to access AWS resources in both Regions of Account B.

This setup allows the Amazon MWAA environment in Account A to securely perform actions and access resources across different Regions in Account B, maintaining the principle of least privilege while allowing for flexible, cross-account orchestration.
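To make the trust relationship concrete, here is a minimal sketch of the kind of trust policy document such a role carries. The execution role ARN and account ID are hypothetical placeholders; the policy that the CloudFormation template actually generates may include additional conditions.

```python
import json


def build_trust_policy(mwaa_execution_role_arn: str) -> dict:
    """Return an IAM trust policy that lets the given role call sts:AssumeRole."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # The principal is the MWAA execution role in Account A
                "Principal": {"AWS": mwaa_execution_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


# Hypothetical ARN for illustration only
trust_policy = build_trust_policy(
    "arn:aws:iam::111111111111:role/example-mwaa-execution-role"
)
print(json.dumps(trust_policy, indent=2))
```

Naming a single role as the principal, rather than the whole account, keeps the set of identities that can assume the cross-account role as small as possible.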

Airflow connection in Account A

To establish cross-account connections in Amazon MWAA:

Create a connection for us-east-1. Open the Airflow UI and navigate to Admin and then to Connections. Choose the plus (+) icon to add a new connection and enter the following details:

Connection ID: Enter aws_crossaccount_role_conn_east1

Connection type: Select Amazon Web Services.

Extras: Add the cross-account role and Region name using the following code. Replace the empty role_arn value with the cross-account role Amazon Resource Name (ARN), the CrossAccountRoleArn output created while setting up Account B in Step 1, in Region 2 (us-west-2):

{
  "role_arn": "",
  "region_name": "us-east-1"
}

Create a second connection for us-west-2.

Connection ID: Enter aws_crossaccount_role_conn_west2

Connection type: Select Amazon Web Services.

Extras: Add the CrossAccountRoleArn and Region name using the following code:

{
  "role_arn": "",
  "region_name": "us-west-2"
}

By establishing these Airflow connections, Amazon MWAA can securely access resources in both us-east-1 and us-west-2, helping to ensure seamless workflow execution.
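If you prefer to generate the Extras values rather than type them, the following sketch builds the JSON for both connections from a single role ARN. The ARN shown is a hypothetical placeholder; substitute the CrossAccountRoleArn output from Step 1.

```python
import json


def build_connection_extra(role_arn: str, region_name: str) -> str:
    """Return the JSON string for the Extras field of an AWS connection."""
    return json.dumps({"role_arn": role_arn, "region_name": region_name})


# Hypothetical ARN for illustration only
CROSS_ACCOUNT_ROLE_ARN = "arn:aws:iam::222222222222:role/example-cross-account-role"

# Both connections assume the same role and differ only in Region
connection_extras = {
    "aws_crossaccount_role_conn_east1": build_connection_extra(CROSS_ACCOUNT_ROLE_ARN, "us-east-1"),
    "aws_crossaccount_role_conn_west2": build_connection_extra(CROSS_ACCOUNT_ROLE_ARN, "us-west-2"),
}

for conn_id, extra in connection_extras.items():
    print(conn_id, extra)
```

Each printed JSON string can be pasted into the Extras field of the matching connection.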

Implement cross-account workflows in Account A

Now that your environment is set up with the required IAM roles and Airflow connections, you can create data processing and ML workflows that span accounts and Regions.

DAG 1: Cross-account data processing

The directed acyclic graph (DAG) depicted in the preceding figure demonstrates a cross-account data processing workflow using Amazon MWAA and AWS services.

To implement this DAG:

Here’s an overview of its key operators:

S3KeySensor: This sensor monitors a specified S3 bucket for the presence of a raw data file (raw/ml_train_data.csv). It uses a cross-account AWS connection (aws_crossaccount_role_conn_east1) to access the S3 bucket in a different AWS account. The sensor checks every 60 seconds and times out after 1 hour if the file is not detected.

GlueJobOperator: This operator triggers an AWS Glue job (mwaa_glue_raw_to_transform) for data preprocessing. It passes the bucket name as a script argument to the AWS Glue job. Like the S3KeySensor, it uses the cross-account AWS connection to run the AWS Glue job in the target account.
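The two operators described above could be wired together roughly as follows. This is a sketch, not the cross_account_data_processing_dag.py file itself: the DAG ID, task IDs, bucket name, and the `--bucket_name` script argument key are assumptions, and `schedule=None` assumes Airflow 2.4 or later. The operator arguments are kept in plain dictionaries so the settings can be inspected without Airflow installed.

```python
from datetime import datetime

SOURCE_BUCKET = "example-source-bucket"  # hypothetical; use the SourceBucketName output
AWS_CONN_ID = "aws_crossaccount_role_conn_east1"

sensor_kwargs = {
    "task_id": "wait_for_raw_data",  # hypothetical task name
    "bucket_name": SOURCE_BUCKET,
    "bucket_key": "raw/ml_train_data.csv",
    "aws_conn_id": AWS_CONN_ID,
    "poke_interval": 60,  # check every 60 seconds
    "timeout": 60 * 60,  # time out after 1 hour
}

glue_kwargs = {
    "task_id": "run_glue_preprocessing",  # hypothetical task name
    "job_name": "mwaa_glue_raw_to_transform",
    "script_args": {"--bucket_name": SOURCE_BUCKET},  # argument key is an assumption
    "aws_conn_id": AWS_CONN_ID,
}

try:
    from airflow import DAG
    from airflow.providers.amazon.aws.operators.glue import GlueJobOperator
    from airflow.providers.amazon.aws.sensors.s3 import S3KeySensor
except ImportError:
    # Airflow or the Amazon provider is not installed, so no DAG is built
    dag = None
else:
    with DAG(
        dag_id="cross_account_data_processing_sketch",  # hypothetical DAG ID
        start_date=datetime(2024, 1, 1),
        schedule=None,  # trigger manually
        catchup=False,
    ) as dag:
        wait_for_file = S3KeySensor(**sensor_kwargs)
        run_glue_job = GlueJobOperator(**glue_kwargs)
        # Start the Glue job only after the raw file has landed
        wait_for_file >> run_glue_job
```

Because both tasks share the same `aws_conn_id`, the assumed role and Region come from the Airflow connection rather than from the DAG code.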

DAG 2: Cross-account and cross-Region ML

The DAG in the preceding figure demonstrates a cross-account machine learning workflow using Amazon MWAA and AWS services. It shows Airflow’s flexibility in enabling users to write custom operators for specific use cases, particularly for cross-account operations.

To implement this DAG:

Here’s an overview of the custom operators and key components:

CrossAccountSageMakerHook: This custom hook extends the SageMakerHook to enable cross-account access. It uses AWS Security Token Service (AWS STS) to assume a role in the target account, enabling seamless interaction with SageMaker across account boundaries.

CrossAccountSageMakerTrainingOperator: Building on the CrossAccountSageMakerHook, this operator allows SageMaker training jobs to be run in a different AWS account. It overrides the default SageMakerTrainingOperator to use the cross-account hook.

S3KeySensor: Used to monitor the presence of training data in a specified S3 bucket. This sensor verifies that the required data is available before proceeding with the machine learning workflow. It uses a cross-account AWS connection (aws_crossaccount_role_conn_west2) to access the S3 bucket in a different AWS account.

SageMakerTrainingOperator: Uses the custom CrossAccountSageMakerTrainingOperator to initiate a SageMaker training job in the target account. The configuration for this job is dynamically loaded from an S3 bucket.

LambdaInvokeFunctionOperator: Invokes a Lambda function named dagcleanup after the SageMaker training job completes. This can be used for post-processing or cleanup tasks.
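The core of the cross-account hook is the STS role assumption. The following sketch shows that step in isolation and is not the post's CrossAccountSageMakerHook implementation. The function name, session name, and role ARN are hypothetical, and a stub stands in for the real STS client so the example runs without AWS access.

```python
def assume_role_credentials(sts_client, role_arn, session_name="mwaa-cross-account"):
    """Call STS AssumeRole and map the result to boto3 session keyword arguments."""
    response = sts_client.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


class FakeStsClient:
    """Stand-in for an STS client that returns fixed, fake credentials."""

    def assume_role(self, RoleArn, RoleSessionName):
        return {
            "Credentials": {
                "AccessKeyId": "AKIAEXAMPLE",
                "SecretAccessKey": "example-secret",
                "SessionToken": "example-token",
            }
        }


# Hypothetical ARN for illustration only
session_kwargs = assume_role_credentials(
    FakeStsClient(), "arn:aws:iam::222222222222:role/example-cross-account-role"
)
print(sorted(session_kwargs))
```

In a real environment you would pass `boto3.client("sts")` instead of the stub and use the returned values to create a `boto3.Session` in the target Region, from which a SageMaker client can be created.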

Step 5: Schedule and verify the Airflow DAGs

To schedule the DAGs, copy the Python scripts cross_account_data_processing_dag.py and cross_account_machine_learning_dag.py to the S3 location associated with Amazon MWAA in central Account A. Go to the Airflow environment created in Account A, us-east-1, locate the S3 bucket link, and upload the scripts to the dags folder.

Upload the data file to the source bucket created in Account B, us-east-1, under the raw folder.

Navigate to the Airflow UI.

Locate your DAG in the DAGs tab. The DAG automatically syncs from Amazon S3 to the Airflow UI. Choose the toggle button to enable the DAGs.

Trigger the DAG runs.

Best practices for cross-account integration

When implementing cross-account, cross-Region workflows with Amazon MWAA, consider the following best practices to help ensure security, efficiency, and maintainability.

Secrets management: Use AWS Secrets Manager to securely store and manage sensitive information such as database credentials, API keys, or cross-account role ARNs. Rotate secrets regularly using Secrets Manager automatic rotation. For more information, see Using a secret key in AWS Secrets Manager for an Apache Airflow connection.

Networking: Choose the appropriate networking solution (AWS Transit Gateway, VPC peering, AWS PrivateLink) based on your specific requirements, considering factors such as the number of VPCs, security needs, and scalability requirements. Implement appropriate security groups and network ACLs to control traffic flow between connected networks.

IAM role management: Follow the principle of least privilege when creating IAM roles for cross-account access.

Error handling and retries: Implement robust error handling in your DAGs to manage cross-account access issues. Use Airflow’s retry mechanisms to handle transient failures in cross-account operations.

Managing Python dependencies: Use a requirements.txt file to specify exact versions of required packages. Test your dependencies locally using the Amazon MWAA local runner before deploying to production. For more information, see Amazon MWAA best practices for managing Python dependencies.
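As one way to apply the retry guidance, Airflow tasks accept `retries` and `retry_delay` settings, which are commonly shared across a DAG through `default_args`. The values below are illustrative assumptions, not recommendations from this post.

```python
from datetime import timedelta

# Shared task settings; pass this dictionary as default_args when creating a DAG
default_args = {
    "retries": 3,  # retry a failed task up to three times
    "retry_delay": timedelta(minutes=5),  # wait five minutes between attempts
}

# Upper bound on the time spent waiting between attempts for a single task
max_retry_wait = default_args["retries"] * default_args["retry_delay"]
print(max_retry_wait)
```

A fixed delay is the simplest choice. For throttling or brief role-propagation delays, a longer delay or fewer retries may fit better, depending on how quickly the pipeline needs to surface a real failure.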

Clean up

To avoid future charges, remove any resources you created for this solution.

Empty the S3 buckets: Manually delete all objects inside every bucket, confirm they’re empty, then delete the buckets themselves.

Delete the CloudFormation stacks: Identify and delete the stacks associated with the architecture.

Verify resource cleanup: Make sure that Amazon MWAA, AWS Glue, SageMaker, Lambda, and other services are terminated.

Remove remaining resources: Delete any manually created IAM roles, policies, or security groups.

Conclusion

By using Airflow connections, custom operators, and features such as Amazon S3 cross-Region replication, you can create a sophisticated workflow that operates across multiple AWS accounts and Regions. This approach allows for complex, distributed data processing and machine learning pipelines that can use resources spread across your entire AWS infrastructure. The combination of cross-account access, cross-Region replication, and custom operators provides a powerful toolkit for building scalable and flexible data workflows. As always, careful planning and adherence to security best practices are crucial when implementing these advanced multi-account, multi-Region architectures.

Ready to tackle your own cross-account orchestration challenges? Test this approach and share your experience in the comments section.

About the authors

Suba Palanisamy is a Senior Technical Account Manager helping customers achieve operational excellence using AWS. Suba is passionate about all things data and analytics. She enjoys traveling with her family and playing board games.

Anubhav Gupta is a Solutions Architect at AWS supporting enterprise greenfield customers, focusing on the financial services industry. He has worked with hundreds of customers worldwide building their cloud foundational environments and platforms, architecting new workloads, and creating governance strategy for their cloud environments. In his free time, he enjoys traveling and spending time outdoors.

Anusha Pininti is a Solutions Architect guiding enterprise greenfield customers through every stage of their cloud transformation, specializing in data analytics. She supports customers across various industries, helping them achieve their business objectives through cloud-based solutions. In her free time, Anusha likes to travel, spend time with family, and experiment with new dishes.

Sriharsh Adari is a Senior Solutions Architect at AWS, where he helps customers work backward from business outcomes to develop innovative solutions on AWS. Over the years, he has helped multiple customers with data platform transformations across industry verticals. His core areas of expertise include technology strategy, data analytics, and data science. In his spare time, he enjoys playing sports, watching TV shows, and playing Tabla.

Geetha Penmatsa is a Solutions Architect supporting enterprise greenfield customers through their cloud journey. She helps customers across various industries transform their business with the AWS Cloud. She has a background in data analytics and is specializing in Amazon Connect cloud contact center to help transform customer experience at scale. Outside work, Geetha likes to travel, ski, hike, and spend time with friends and family.