Azure Databricks is a first-party Microsoft service, natively integrated with the Azure ecosystem to unify data and AI with high-performance analytics and deep tooling support. This tight integration now includes a native Databricks Job activity in Azure Data Factory (ADF), making it easier than ever to trigger Databricks Workflows directly within ADF.

This new activity in ADF is an immediate best practice, and all ADF and Azure Databricks users should consider moving to this pattern.

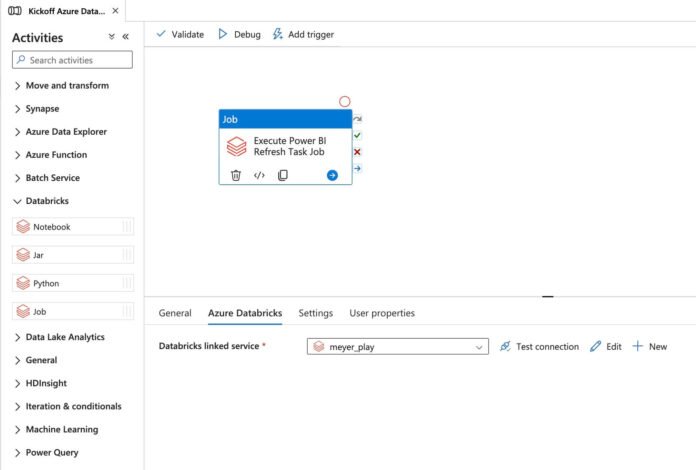

The new Databricks Job activity is very simple to use:

1. In your ADF pipeline, drag the Databricks Job activity onto the screen.

2. On the Azure Databricks tab, select a Databricks linked service for authentication to the Azure Databricks workspace.

You can authenticate using one of these options:

a personal access token (PAT)

the ADF system-assigned managed identity, or

a user-assigned managed identity

Although the linked service requires you to configure a cluster, this cluster is neither created nor used when executing this activity. It is retained for compatibility with other activity types.

3. On the Settings tab, select a Databricks Workflow to execute in the Job drop-down list (you'll only see the Jobs your authenticated principal has access to). In the Job Parameters section below, configure Job Parameters (if any) to send to the Databricks Workflow. To learn more about Databricks Job Parameters, please check the docs.

Note that the Job and Job Parameters can be configured with dynamic content.

That's all there is to it. ADF will kick off your Databricks Workflow and give back the Job Run ID and URL. ADF will then poll for the Job Run to complete. Read more below to learn why this new pattern is an instant classic.
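The trigger-then-poll behavior described above can be sketched in Python. This is not ADF's internal implementation, only an illustration of the same pattern against the Databricks Jobs REST API (`/api/2.1/jobs/run-now` and `/api/2.1/jobs/runs/get`). The `call_api` helper, the function name, and the polling interval are assumptions made for this example; `call_api` stands in for whatever authenticated HTTP client you use.

```python
import time
from typing import Any, Callable, Dict, Optional

# Hypothetical HTTP helper signature: (method, path, payload) -> decoded JSON.
ApiCall = Callable[[str, str, Dict[str, Any]], Dict[str, Any]]

# Life-cycle states after which a run will not change any further.
TERMINAL_STATES = {"TERMINATED", "SKIPPED", "INTERNAL_ERROR"}


def run_job_and_wait(
    call_api: ApiCall,
    job_id: int,
    job_parameters: Optional[Dict[str, str]] = None,
    poll_seconds: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Trigger a job run, then poll until it reaches a terminal state."""
    payload: Dict[str, Any] = {"job_id": job_id}
    if job_parameters:
        payload["job_parameters"] = job_parameters

    # Step 1: kick off the run; the response carries the run ID.
    run_id = call_api("POST", "/api/2.1/jobs/run-now", payload)["run_id"]

    # Step 2: poll the run until it finishes, then report ID, URL, and result.
    while True:
        run = call_api("GET", "/api/2.1/jobs/runs/get", {"run_id": run_id})
        state = run.get("state", {})
        if state.get("life_cycle_state") in TERMINAL_STATES:
            return {
                "run_id": run_id,
                "run_page_url": run.get("run_page_url"),
                "result_state": state.get("result_state"),
            }
        sleep(poll_seconds)
```

Injecting `call_api` and `sleep` keeps the sketch free of credentials and network calls, so the loop can be exercised with a fake client before wiring in a real one.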

Kicking off Databricks Workflows from ADF lets you get more horsepower out of your Azure Databricks investment

Using Azure Data Factory and Azure Databricks together has been a GA pattern since 2018, when it was released with this blog post. Since then, the integration has been a staple for Azure customers, who have primarily been following this simple pattern:

Use ADF to land data into Azure storage via its 100+ connectors, using a self-hosted integration runtime for private or on-premises connections

Orchestrate Databricks Notebooks via the native Databricks Notebook activity to implement scalable data transformation in Databricks using Delta Lake tables in ADLS

While this pattern has been extremely valuable over time, it has constrained customers into the following modes of operation, which rob them of the full value of Databricks:

Using All Purpose compute to run Jobs to avoid cluster launch times -> running into noisy neighbor problems and paying for All Purpose compute for automated jobs

Waiting for cluster launches per Notebook execution when using Jobs compute -> classic clusters are spun up per notebook execution, incurring cluster launch time for each, even for a DAG of notebooks

Managing Pools to reduce Job cluster launch times -> pools can be hard to manage and can often lead to paying for VMs that aren't being utilized

Using an overly permissive permissions pattern for integration between ADF and Azure Databricks -> the integration requires workspace admin OR the create cluster entitlement

No ability to use new features in Databricks like Databricks SQL, DLT, or Serverless

While this pattern is scalable and native to Azure Data Factory and Azure Databricks, the tooling and capabilities it offers have remained the same since its launch in 2018, even though Databricks has grown by leaps and bounds into the market-leading Data Intelligence Platform across all clouds.

Azure Databricks goes beyond traditional analytics to deliver a unified Data Intelligence Platform on Azure. It combines industry-leading Lakehouse architecture with built-in AI and advanced governance to help customers unlock insights faster, at lower cost, and with enterprise-grade security. Key capabilities include:

OSS and open standards

An industry-leading Lakehouse Catalog through Unity Catalog for securing data and AI across code, languages, and compute inside and outside of Azure Databricks

Best-in-class performance and price performance for ETL

Built-in capabilities for traditional ML and GenAI, including fine-tuning LLMs, using foundation models (including Claude Sonnet), building Agent applications, and serving models

Best-in-class DW on the lakehouse with Databricks SQL

Automated publishing and integration with Power BI through the Publish to Power BI functionality found in Unity Catalog and Workflows

With the release of the native Databricks Job activity in Azure Data Factory, customers can now execute Databricks Workflows and pass parameters to the Job Runs. This new pattern not only solves for the constraints highlighted above, but also allows the use of the following Databricks features that were not previously available in ADF:

Programming a DAG of Tasks inside Databricks

Using Databricks SQL integrations

Executing DLT pipelines

Using dbt integration with a SQL Warehouse

Using Classic Job Cluster reuse to reduce cluster launch times

Using Serverless Jobs compute

Standard Databricks Workflow functionality like Run As, Task Values, Conditional Executions like If/Else and For Each, AI/BI Task, Repair Runs, Notifications/Alerts, Git integration, DABs support, built-in lineage, queuing and concurrent runs, and much more…
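As a rough illustration of two items in this list, a DAG of Tasks and Classic Job Cluster reuse, the sketch below builds a job definition in the shape used by the Databricks Jobs API (`tasks`, `depends_on`, `job_clusters`, `job_cluster_key`). The job name, task keys, notebook paths, and cluster settings are all hypothetical placeholders, and `execution_order` is a helper written for this example, not part of any Databricks library.

```python
from graphlib import TopologicalSorter
from typing import Any, Dict, List

SHARED_CLUSTER = "shared_job_cluster"  # hypothetical cluster key


def build_job_spec() -> Dict[str, Any]:
    """Build a three-task job whose tasks all reuse one classic job cluster."""

    def task(key: str, depends_on: List[str]) -> Dict[str, Any]:
        return {
            "task_key": key,
            "depends_on": [{"task_key": dep} for dep in depends_on],
            # Every task points at the same cluster, so it is launched once.
            "job_cluster_key": SHARED_CLUSTER,
            # Hypothetical notebook location.
            "notebook_task": {"notebook_path": f"/Workspace/etl/{key}"},
        }

    return {
        "name": "example_etl",  # hypothetical job name
        "job_clusters": [
            {
                "job_cluster_key": SHARED_CLUSTER,
                # Placeholder values; substitute a real runtime and VM size.
                "new_cluster": {
                    "spark_version": "<runtime-version>",
                    "node_type_id": "<vm-size>",
                    "num_workers": 2,
                },
            }
        ],
        "tasks": [
            task("ingest", []),
            task("transform", ["ingest"]),
            task("publish", ["transform"]),
        ],
    }


def execution_order(spec: Dict[str, Any]) -> List[str]:
    """Return task keys in dependency order; raises CycleError on a cycle."""
    graph = {
        t["task_key"]: {d["task_key"] for d in t["depends_on"]}
        for t in spec["tasks"]
    }
    return list(TopologicalSorter(graph).static_order())
```

Because all three tasks share one `job_cluster_key`, the cluster launch time is paid once for the whole DAG rather than once per notebook, which is the contrast with the per-notebook pattern described earlier.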

Most importantly, customers can now use the ADF Databricks Job activity to leverage the Publish to Power BI Tasks in Databricks Workflows, which automatically publish Semantic Models to the Power BI Service from schemas in Unity Catalog and trigger an Import if there are tables with storage modes using Import or Dual (setup instructions documentation). A demo on Power BI Tasks in Databricks Workflows can be found here. To complement this, check out the Power BI on Databricks Best Practices Cheat Sheet: a concise, actionable guide that helps teams configure and optimize their reports for performance, cost, and user experience from the start.

The Databricks Job activity in ADF is the New Best Practice

Using the Databricks Job activity in Azure Data Factory to kick off Databricks Workflows is the new best practice integration when using the two tools. Customers can immediately start using this pattern to take advantage of all of the capabilities in the Databricks Data Intelligence Platform. For customers using ADF, using the ADF Databricks Job activity will result in immediate business value and cost savings. Customers with ETL frameworks that use Notebook activities should migrate their frameworks to Databricks Workflows and the new ADF Databricks Job activity, and prioritize this initiative in their roadmap.

Get Began with a Free 14-day Trial of Azure Databricks.