This is a guest post coauthored with LaunchDarkly.

The LaunchDarkly feature management platform equips software teams to proactively reduce the risk of shipping bad software and AI applications while accelerating their release velocity. In this post, we explore how LaunchDarkly scaled its internal analytics platform up to 14,000 tasks per day, with minimal increase in costs, after migrating from another vendor-managed Apache Airflow solution to AWS, using Amazon Managed Workflows for Apache Airflow (Amazon MWAA) and Amazon Elastic Container Service (Amazon ECS). We walk you through the issues we ran into during the migration, the technical solution we implemented, the trade-offs we made, and the lessons we learned along the way.

The challenge

LaunchDarkly has a mission to enable high-velocity teams to release, monitor, and optimize software in production. The centralized data team is responsible for tracking how LaunchDarkly is progressing toward that mission. Additionally, this team is responsible for the majority of the company’s internal data needs, which include ingesting, warehousing, and reporting on the company’s data. Some of the large datasets we manage include product usage, customer engagement, revenue, and marketing data.

As the company grew, our data volume increased, and the complexity and use cases of our workloads expanded exponentially. While using other vendor-managed Airflow-based solutions, our data analytics team faced new challenges: the time needed to integrate and onboard new AWS services, data locality, and the lack of a centralized orchestration and monitoring solution across the different engineering teams within the organization.

Solution overview

LaunchDarkly has a long history of using AWS services to solve business use cases, such as scaling our ingestion from 1 TB to 100 TB per day with Amazon Kinesis Data Streams. Similarly, migrating to Amazon MWAA helped us scale and optimize our internal extract, transform, and load (ETL) pipelines. We used existing monitoring and infrastructure as code (IaC) implementations and eventually extended Amazon MWAA to other teams, establishing it as a centralized batch processing solution orchestrating multiple AWS services.

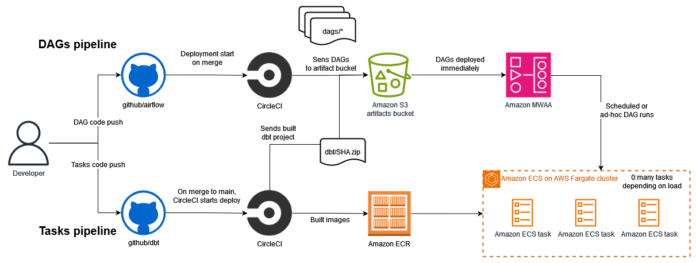

The solution for our transformation jobs includes the following components:

Our original plan for the Amazon MWAA migration was:

1. Create a new Amazon MWAA instance using Terraform following LaunchDarkly service standards.

2. Lift and shift (or rehost) our code base from Airflow 1.12 on the original cloud provider to the same version on Amazon MWAA.

3. Cut over all Directed Acyclic Graph (DAG) runs to AWS.

4. Upgrade to Airflow 2.

5. With the flexibility and ease of integration within the AWS ecosystem, iteratively make improvements around containerization, logging, and continuous deployment.

Steps 1 and 2 were executed quickly. We used the Terraform AWS provider and the existing LaunchDarkly Terraform infrastructure to build a reusable Amazon MWAA module, initially at Airflow version 1.12. We had an Amazon MWAA instance and the supporting pieces (CloudWatch and an artifacts S3 bucket) running on AWS within a week.

When we started cutting over DAGs to Amazon MWAA in Step 3, we ran into some issues. At the time of migration, our Airflow code base was centered around a custom operator implementation that created a Python virtual environment for our workload requirements on the Airflow worker disk assigned to the task. Through trial and error during our migration attempt, we learned that this custom operator was essentially dependent on the behavior and isolation of Airflow’s Kubernetes executors used in the original cloud provider platform. When we began to run our DAGs concurrently on Amazon MWAA (which uses Celery Executor workers that behave differently), we ran into a few transient issues where the behavior of that custom operator could affect other running DAGs.

At that point, we took a step back and evaluated solutions for promoting isolation between our running tasks, eventually landing on Fargate for ECS tasks that could be started from Amazon MWAA. We had initially planned to move our tasks to their own isolated system at a later stage, rather than having them run directly in Airflow’s Python runtime environment. Given the circumstances, we decided to bring this requirement forward, transforming our rehosting project into a refactoring migration.

We chose Amazon ECS on Fargate for its ease of use, existing Airflow integrations (ECSRunTaskOperator), low cost, and lower management overhead compared to a Kubernetes-based solution such as Amazon Elastic Kubernetes Service (Amazon EKS). Although a solution using Amazon EKS would improve the task provisioning time even further, the Amazon ECS solution met the latency requirements of the data analytics team’s batch pipelines. This was acceptable because these queries run for several minutes on a periodic basis, so a couple more minutes for spinning up each ECS task didn’t significantly impact overall performance.

Our first Amazon ECS implementation involved a single container that downloads our project from an artifacts repository on Amazon S3 and runs the command passed to the ECS task. We trigger these tasks using the ECSRunTaskOperator in a DAG in Amazon MWAA, and we created a wrapper around the built-in Amazon ECS operator so analysts and engineers on the data analytics team could create new DAGs just by specifying the commands they were already familiar with.
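To make the wrapper idea concrete, here is a minimal sketch of how a plain command string might be translated into the arguments of an ECS run-task call. It is not our actual wrapper. The cluster, task definition, and container names are hypothetical, and the keyword names (`cluster`, `task_definition`, `launch_type`, `overrides`) follow the Amazon provider's ECS operator as we understand it, so verify them against the provider version you run. The `containerOverrides` shape follows the ECS RunTask API.

```python
import shlex
from typing import Any, Dict

# Hypothetical defaults for illustration; none of these names come from the post.
DEFAULT_CLUSTER = "analytics-cluster"
DEFAULT_TASK_DEFINITION = "analytics-runner"
DEFAULT_CONTAINER = "runner"


def ecs_task_kwargs(task_id: str, command: str) -> Dict[str, Any]:
    """Turn a shell-style command into keyword arguments for an ECS run-task operator."""
    # Split the command the way a shell would, so quoted arguments stay together.
    argv = shlex.split(command)
    if not argv:
        raise ValueError("command must not be empty")
    return {
        "task_id": task_id,
        "cluster": DEFAULT_CLUSTER,
        "task_definition": DEFAULT_TASK_DEFINITION,
        "launch_type": "FARGATE",
        # The command replaces the container's default command for this run only.
        "overrides": {
            "containerOverrides": [
                {"name": DEFAULT_CONTAINER, "command": argv}
            ]
        },
    }


kwargs = ecs_task_kwargs("daily_revenue", "python -m jobs.revenue --date 2024-01-01")
print(kwargs["overrides"]["containerOverrides"][0]["command"])
```

In a DAG file, a dictionary like this could be unpacked into the operator constructor. A real Fargate task would also need a network configuration (subnets and security groups), which is omitted here.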

The following diagram illustrates the DAG and task deployment flows.

When our initial Amazon ECS implementation was complete, we were able to cut all of our existing DAGs over to Amazon MWAA without the prior concurrency issues, because each task ran in its own isolated Amazon ECS task on Fargate.

Within a few months, we proceeded to Step 4 to upgrade our Amazon MWAA instance to Airflow 2. This was a major version upgrade (from 1.12 to 2.5.1), which we implemented by following the Amazon MWAA Migration Guide and subsequently tearing down our legacy resources.

The cost increase of adding Amazon ECS to our pipelines was minimal. This was because our pipelines run on batch schedules, and therefore aren’t active at all times, and Amazon ECS on Fargate only charges for the vCPU and memory resources requested to complete the tasks.
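The pay-for-what-you-request model can be sketched as simple arithmetic. The rates and task sizes below are placeholders chosen for readability, not actual Fargate prices or our real task shapes. Check current pricing for your Region before estimating anything. The sketch also ignores minimum billing durations and charges such as storage or data transfer.

```python
# Placeholder hourly rates in USD; real Fargate rates vary by Region and over time.
VCPU_RATE_PER_HOUR = 0.04
MEMORY_GB_RATE_PER_HOUR = 0.004


def fargate_task_cost(vcpu: float, memory_gb: float, runtime_seconds: float) -> float:
    """Estimate the cost of one task from the resources it requests and how long it runs."""
    hours = runtime_seconds / 3600
    return (vcpu * VCPU_RATE_PER_HOUR + memory_gb * MEMORY_GB_RATE_PER_HOUR) * hours


def daily_cost(tasks_per_day: int, vcpu: float, memory_gb: float, avg_runtime_seconds: float) -> float:
    """Estimate daily spend when every task has the same size and average runtime."""
    return tasks_per_day * fargate_task_cost(vcpu, memory_gb, avg_runtime_seconds)


# Hypothetical workload: 1,000 tasks per day, 0.5 vCPU and 1 GB each, 5 minutes per task.
print(round(daily_cost(1000, vcpu=0.5, memory_gb=1.0, avg_runtime_seconds=300), 2))
```

Because the estimate scales with runtime rather than wall-clock time, tasks that run for minutes on a schedule cost far less than an always-on worker of the same size.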

As part of Step 5 for continuous assessment and improvements, we enhanced our Amazon ECS implementation to push logs and metrics to Datadog and CloudWatch. This let us monitor for errors and model performance, and catch data test failures alongside existing LaunchDarkly monitoring.

Scaling the solution beyond internal analytics

During the initial implementation for the data analytics team, we created an Amazon MWAA Terraform module, which enabled us to quickly spin up more Amazon MWAA environments and share our work with other engineering teams. As a result, Airflow and Amazon MWAA were powering batch pipelines within the LaunchDarkly product itself within a couple of months of the data analytics team completing the initial migration.

The numerous AWS service integrations supported by Airflow, the built-in Amazon provider package, and Amazon MWAA allowed us to expand our usage across teams and use Amazon MWAA as a generic orchestrator for distributed pipelines across services like Amazon Athena, Amazon Relational Database Service (Amazon RDS), and AWS Glue. Since adopting the service, onboarding a new AWS service to Amazon MWAA has been straightforward, typically involving identifying the existing Airflow Operator or Hook to use, and then connecting the two services with AWS Identity and Access Management (IAM).
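As an illustration of the IAM half of that onboarding step, the sketch below builds a policy document that could be attached to an Amazon MWAA execution role to allow Athena queries. It is an assumption-laden example, not our production policy. The account ID, Region, and workgroup are hypothetical, and a real Athena workload typically needs further permissions (for example on the query results bucket in Amazon S3 and on the AWS Glue Data Catalog).

```python
import json
from typing import Any, Dict, List


def build_policy(actions: List[str], resources: List[str]) -> Dict[str, Any]:
    """Build a single-statement IAM policy document allowing the given actions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": sorted(actions),
                "Resource": resources,
            }
        ],
    }


# Hypothetical workgroup ARN; replace with the resources your DAGs actually use.
athena_policy = build_policy(
    actions=[
        "athena:StartQueryExecution",
        "athena:GetQueryExecution",
        "athena:GetQueryResults",
    ],
    resources=["arn:aws:athena:us-east-1:123456789012:workgroup/primary"],
)
print(json.dumps(athena_policy, indent=2))
```

Scoping the statement to specific actions and resources keeps each newly onboarded service limited to what its DAGs need.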

Lessons and results

Through our journey of orchestrating data pipelines at scale with Amazon MWAA and Amazon ECS, we’ve gained valuable insights and lessons that have shaped the success of our implementation. One of the key lessons learned was the importance of isolation. During the initial migration to Amazon MWAA, we encountered issues with our custom Airflow operator that relied on the specific behavior of the Kubernetes executors used in the original cloud provider platform. This highlighted the need for isolated task execution to maintain the reliability and scalability of our pipelines.

As we scaled our implementation, we also recognized the importance of monitoring and observability. We strengthened both by integrating with tools like Datadog and CloudWatch, so we could better monitor errors and model performance and catch data test failures, improving the overall reliability and transparency of our data pipelines.

With the previous Airflow implementation, we were running approximately 100 Airflow tasks per day across one team and two services (Amazon ECS and Snowflake). As of the time of writing this post, we’ve scaled our implementation to three teams, four services, and execution of over 14,000 Airflow tasks per day. Amazon MWAA has become a critical component of our batch processing pipelines, reducing the time needed to onboard new teams, services, and pipelines to our data platform from weeks to days.

Looking ahead, we plan to continue iterating on this solution to expand our use of Amazon MWAA to additional AWS services such as AWS Lambda and Amazon Simple Queue Service (Amazon SQS), and further automate our data workflows to support even greater scalability as our company grows.

Conclusion

Effective data orchestration is essential for organizations to gather and unify data from diverse sources into a centralized, usable format for analysis. By automating this process across teams and services, businesses can transform fragmented data into valuable insights to drive better decision-making. LaunchDarkly has achieved this by using managed services like Amazon MWAA and adopting best practices such as task isolation and observability, enabling the company to accelerate innovation, mitigate risks, and shorten the time-to-value of its product offerings.

If your organization is planning to modernize its data pipeline orchestration, start by assessing your current workflow management setup, exploring the capabilities of Amazon MWAA, and considering how containerization could benefit your workflows. With the right tools and approach, you can transform your data operations, drive innovation, and stay ahead of growing data processing demands.

About the Authors

Asena Uyar is a Software Engineer at LaunchDarkly, focusing on building impactful experimentation products that empower teams to make better decisions. With a background in mathematics, industrial engineering, and data science, Asena has been working in the tech industry for over a decade. Her experience spans various sectors, including SaaS and logistics, and she has spent a significant portion of her career as a Data Platform Engineer, designing and managing large-scale data systems. Asena is passionate about using technology to simplify and optimize workflows, making a real difference in the way teams operate.


Dean Verhey is a Data Platform Engineer at LaunchDarkly based in Seattle. He’s worked all across data at LaunchDarkly, ranging from internal batch reporting stacks to streaming pipelines powering product features like experimentation and flag usage charts. Prior to LaunchDarkly, he worked in data engineering for a variety of companies, including procurement SaaS, travel startups, and fire/EMS records management. When he’s not working, you can often find him in the mountains skiing.


Daniel Lopes is a Solutions Architect for ISVs at AWS. His focus is on enabling ISVs to design and build their products in alignment with their business goals, with all the advantages AWS services can provide them. His areas of interest are event-driven architectures, serverless computing, and generative AI. Outside work, Daniel mentors his kids in video games and pop culture.
