This post is cowritten with Nikos Tragaras and Raphaël Afanyan from Nexthink.

In this post, we describe Nexthink's journey as they implemented a new real-time alerting system using Amazon Managed Service for Apache Flink. We explore the architecture, the rationale behind key technology choices, and the Amazon Web Services (AWS) services that enabled a scalable and efficient solution.

Nexthink is a pioneering leader in digital employee experience (DEX). With a mission to empower IT teams and elevate workplace productivity, Nexthink's Infinity platform offers real-time visibility into end-user environments, actionable insights, and robust automation capabilities. By combining real-time analytics, proactive monitoring, and intelligent automation, Infinity enables organizations to deliver an optimal digital workspace.

Over the past 5 years, Nexthink completed its transformation into a fully fledged cloud platform that processes trillions of events per day, reaching over 5 GB per second of aggregated throughput. Internally, Infinity comprises more than 300 microservices that use the power of Apache Kafka through Amazon Managed Streaming for Apache Kafka (Amazon MSK) for data ingestion and intra-service communication. The Nexthink ecosystem consists of several hundred Micronaut-based Java microservices deployed in Amazon Elastic Kubernetes Service (Amazon EKS). The vast majority of these microservices interact with Kafka through the Kafka Streams framework.

Nexthink alerting system

To help you understand Nexthink's journey toward a new real-time alerting solution, we begin by examining the existing system and the evolving requirements that led them to seek a new solution.

Nexthink's existing alerting system provides near real-time notifications, helping users detect and respond to critical events quickly. While effective, this system has limitations in scalability, flexibility, and real-time processing capabilities.

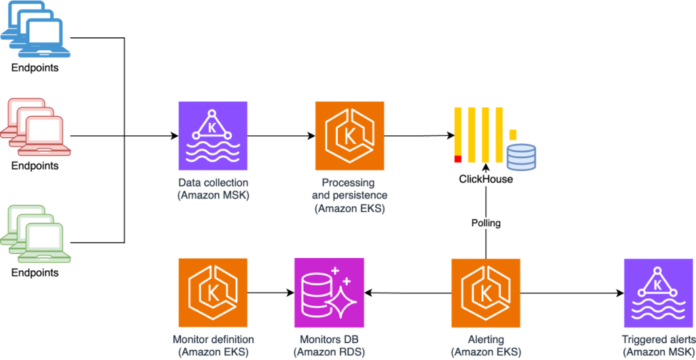

Nexthink gathers telemetry data from thousands of users' laptops, covering CPU usage, memory, software versions, network performance, and more. Amazon MSK and ClickHouse serve as the backbone of this data pipeline. All endpoint data is ingested into multi-tenant Kafka topics, which are processed and finally stored in a ClickHouse database.

Using the existing alerting system, clients can define monitoring rules in Nexthink Query Language (NQL), which are evaluated in near real time by polling the database every 15 minutes. Alerts are triggered when anomalies are detected against client-defined thresholds or long-term baselines. This process is illustrated in the following architecture diagram.

Initially, database polling allowed great flexibility in the evaluation of complex alerts. However, this approach placed heavy stress on the database. As the company grew and supported larger customers with more endpoints and monitors, the database experienced increasingly heavy loads.

Evolution to a new use case: Real-time alerts

As Nexthink expanded its data collection to include virtual desktop infrastructure (VDI), the need for real-time alerting became even more critical. Unlike traditional endpoints such as laptops, where events are collected every 5 minutes, VDI data is ingested every 30 seconds, significantly increasing the volume and frequency of data. The existing architecture relied on database polling at a 15-minute interval to evaluate alerts. This approach was inadequate for the new VDI use case, where alerts needed to be evaluated in near real time on messages arriving every 30 seconds. Simply increasing the polling frequency wasn't a viable option because it would place excessive load on the database, leading to performance bottlenecks and scalability challenges. To meet these new demands efficiently, we shifted to real-time alert evaluation directly on Kafka topics.

Technology options

As we evaluated solutions for our real-time alerting system, we analyzed two main technology options: Apache Kafka Streams and Apache Flink. Each option had benefits and limitations that needed to be considered.

Up to that point, all Nexthink microservices integrated with Kafka using Apache Kafka Streams. In practice, we observed several benefits:

Lightweight and seamless integration. No need for additional infrastructure.

Low latency using RocksDB as a local key-value store.

Team expertise. Nexthink teams have been writing microservices with Kafka Streams for a long time and feel very comfortable using it.

In some use cases, however, we found important limitations:

Scalability – Scalability was constrained by the tight coupling between the parallelism of microservices and the number of partitions in Kafka topics. Many microservices had already scaled out to match the partition count of the topics they consumed, which limited their ability to scale further. One possible solution was to increase the partition count. However, this approach introduced significant operational overhead, especially for microservices consuming topics owned by other domains. It required rebalancing the entire Kafka cluster and coordination across multiple teams. Additionally, such changes impacted downstream services, requiring careful reconfiguration of stateful processing. The alternative approach would be to introduce intermediate topics to redistribute the workload, but this would add complexity to the data pipeline and increase resource consumption on Kafka. These challenges made it clear that a more flexible and scalable approach was needed.

State management – Services that needed to build large KTables in memory had longer startup times. Also, when the internal state was large, we found that building it placed significant load on the Kafka cluster.

Late event processing – In windowing operations, late events had to be managed manually with techniques that made the codebase more complex.

Looking for an alternative that could help us overcome the challenges posed by our existing system, we decided to evaluate Flink. Its robust streaming capabilities, scalability, and flexibility made it an excellent choice for building real-time alerting systems based on Kafka topics. Several advantages made Flink particularly appealing:

Native integration with Kafka – Flink offers native connectors for Kafka, which is a central component of the Nexthink ecosystem.

Event-time processing and support for late events – Flink allows messages to be processed based on event time (that is, when the event actually occurred) even when they arrive out of order. This feature is crucial for real-time alerts because it ensures their accuracy.

Scalability – Flink's distributed architecture allows it to scale horizontally independently of the number of partitions in the Kafka topics. This feature weighed heavily in our decision because, up to that point, the dependence on partition counts had been a major limitation in our platform.

Fault tolerance – Flink supports checkpoints, which persist managed state and ensure consistent recovery in case of failures. Unlike Kafka Streams, which relies on Kafka itself for long-term state persistence (adding extra load to the cluster), Flink's checkpointing mechanism operates independently and runs out-of-band, minimizing the impact on Kafka while providing efficient state management.

Amazon Managed Service for Apache Flink – Amazon Managed Service for Apache Flink is a fully managed service that simplifies the deployment, scaling, and management of Flink applications for real-time data processing. By eliminating the operational complexity of managing Flink clusters, AWS enables organizations to focus on building and running real-time analytics and event-driven applications efficiently. The service gave us significant flexibility. It streamlined our evaluation process, so we could quickly set up a proof-of-concept environment without dealing with the complexities of managing an internal Flink cluster. Moreover, by reducing the overhead of cluster management, it made Flink a viable technology choice and accelerated our delivery timeline.
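The scalability advantage listed above can be illustrated with a small plain-Java model. This is an illustrative sketch, not Flink or Kafka code. In a Kafka consumer group, each partition is assigned to at most one consumer, so instances beyond the partition count sit idle. After a `keyBy`, Flink instead routes each key to one of `maxParallelism` key groups and spreads those key groups over the operator's parallel subtasks. The sketch makes several assumptions: it uses a simplified hash (Flink applies a murmur hash to the key's `hashCode()`), and the key names, partition count, and parallelism are hypothetical.

```java
import java.util.HashSet;
import java.util.Set;

/** Illustrative model of key-group routing vs. consumer-group assignment (not Flink's API). */
final class KeyGroupRouting {
    private KeyGroupRouting() {}

    /** Maps a key to a key group; Flink uses a murmur hash here, floorMod keeps the sketch short. */
    static int keyGroupFor(String key, int maxParallelism) {
        return Math.floorMod(key.hashCode(), maxParallelism);
    }

    /** Maps a key group to a subtask index, spreading key groups evenly across subtasks. */
    static int subtaskFor(int keyGroup, int maxParallelism, int parallelism) {
        return keyGroup * parallelism / maxParallelism;
    }

    /** Counts distinct subtasks that receive at least one key (hypothetical key names). */
    static int busySubtasks(int numKeys, int maxParallelism, int parallelism) {
        Set<Integer> used = new HashSet<>();
        for (int i = 0; i < numKeys; i++) {
            int group = keyGroupFor("desktop-pool-" + i, maxParallelism);
            used.add(subtaskFor(group, maxParallelism, parallelism));
        }
        return used.size();
    }

    /** In a Kafka consumer group, each partition goes to at most one consumer instance. */
    static int activeConsumers(int instances, int partitions) {
        return Math.min(instances, partitions);
    }
}
```

With 12 partitions, at most 12 consumer instances do useful work. In this model, the keyed stage spreads keys over all 24 subtasks. Flink's own Kafka source subtasks are also bounded by the partition count, so the gain comes from the stages after the first `keyBy`.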

Solution

After careful evaluation of both options, we chose Apache Flink as our solution because of its superior scalability, robust event-time processing, and efficient state management capabilities. Here's how we implemented our new real-time alerting system.

The following diagram shows the solution architecture.

The first use case was to detect issues with VDI. However, our intention was to build a generic solution that would let us onboard, in the future, existing use cases currently implemented through polling. We wanted to maintain a common way of configuring monitoring conditions and to allow alert evaluation both through polling and in real time, depending on the type of device being monitored.

This solution comprises several components:

Monitor configuration – Using Nexthink Query Language (NQL), the alerts administrator defines a monitor that specifies, for example:

Data source – VDI events

Time window – Every 30 seconds

Metric – Average network latency, grouped by desktop pool

Trigger condition(s) – Latency exceeding 300 ms for a sustained period of 5 minutes

This monitor configuration is then stored in an internally developed document store and propagated downstream through a Kafka topic.
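The post does not describe Nexthink's actual message schema. As an illustration only, the example monitor above could travel downstream as a small immutable message like the one below. The record name and fields are hypothetical. The sketch also shows how "300 ms sustained for 5 minutes" translates into a count of consecutive 30-second windows.

```java
import java.time.Duration;

/** Hypothetical shape of a monitor configuration event (illustrative, not Nexthink's schema). */
record MonitorConfig(
        String monitorId,
        String dataSource,      // e.g. "vdi_events"
        Duration window,        // aggregation window, e.g. 30 seconds
        String metric,          // e.g. "avg(network_latency)"
        String groupBy,         // e.g. "desktop_pool"
        double thresholdMillis, // e.g. 300 ms
        Duration sustainedFor   // e.g. 5 minutes
) {
    MonitorConfig {
        if (window.isZero() || window.isNegative()) {
            throw new IllegalArgumentException("window must be positive");
        }
        if (sustainedFor.compareTo(window) < 0) {
            throw new IllegalArgumentException("sustainedFor must cover at least one window");
        }
    }

    /** Number of consecutive windows that must breach the threshold before alerting. */
    int windowsRequired() {
        long w = window.toMillis();
        // Round up so that a partial trailing window still counts as a full one.
        return (int) ((sustainedFor.toMillis() + w - 1) / w);
    }
}
```

For the example monitor, 5 minutes of 30-second windows gives 10 consecutive windows.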

Data processing using Generic Stream Services – The Nexthink Collector, an agent installed on endpoints, captures and reports various types of activity from the VDI endpoints where it's installed. These events are forwarded to Amazon MSK in one of Nexthink's production virtual private clouds (VPCs) and are consumed by Java microservices running on Amazon EKS that belong to several domains within Nexthink.

One of them is Generic Stream Services, a system that processes the collected events and aggregates them into 30-second buckets. This component works as a self-service for all feature teams at Nexthink and can query and aggregate data from an NQL query. This way, we were able to maintain a unified user experience for monitor configuration using NQL, regardless of how alerts were evaluated. This component is broken down into two services:

GS processor – Consumes raw VDI session events and applies initial processing

GS aggregator – Groups and aggregates the data according to the monitor configuration
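The post doesn't show how the GS aggregator is implemented. As a rough plain-Java illustration of the bucketing idea, assume each raw event carries an event timestamp, a desktop pool, and a latency value; the record and field names are hypothetical. Events can then be assigned to 30-second buckets by truncating their timestamp and averaged per pool and bucket:

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical raw VDI event: event time in epoch millis, pool name, latency in ms. */
record VdiEvent(long eventTimeMillis, String desktopPool, double latencyMs) {}

/** Key of one aggregate: the pool and the start of its 30-second bucket. */
record BucketKey(String desktopPool, long bucketStartMillis) implements Comparable<BucketKey> {
    @Override
    public int compareTo(BucketKey o) {
        int c = desktopPool.compareTo(o.desktopPool);
        return c != 0 ? c : Long.compare(bucketStartMillis, o.bucketStartMillis);
    }
}

/** Illustrative bucketing sketch; not the actual GS aggregator. */
final class BucketAggregator {
    static final long BUCKET_MILLIS = 30_000;

    private BucketAggregator() {}

    /** Start of the bucket an event belongs to, derived from event time (not arrival time). */
    static long bucketStart(long eventTimeMillis) {
        return eventTimeMillis - Math.floorMod(eventTimeMillis, BUCKET_MILLIS);
    }

    /** Average latency per (pool, bucket). */
    static Map<BucketKey, Double> averageLatency(List<VdiEvent> events) {
        Map<BucketKey, double[]> sums = new TreeMap<>(); // value: [sum, count]
        for (VdiEvent e : events) {
            BucketKey key = new BucketKey(e.desktopPool(), bucketStart(e.eventTimeMillis()));
            double[] acc = sums.computeIfAbsent(key, k -> new double[2]);
            acc[0] += e.latencyMs();
            acc[1] += 1;
        }
        Map<BucketKey, Double> result = new TreeMap<>();
        sums.forEach((k, acc) -> result.put(k, acc[0] / acc[1]));
        return result;
    }
}
```

Because the bucket is derived from each event's own timestamp, the averages are the same regardless of the order in which events arrive.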

Real-time monitoring using Flink – We offer two types of detection for VDI issues: static threshold alerting and seasonal change detection, which identifies variations in data that follow a recurring pattern over time. The system splits the processing between two applications:

Baseline application – Calculates statistical baselines with seasonality using a time-of-day anomaly algorithm, for example, for latency by VDI client location or for the CPU queue length of a desktop pool.

Alert application – Generates alerts based either on static user-defined thresholds, for metrics whose expected values don't change over time, or on dynamic thresholds derived from baselines, which trigger when a metric deviates from its expected pattern.
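The post names a time-of-day anomaly algorithm without detailing it. The following plain-Java sketch is one simple, assumed variant for illustration, not Nexthink's algorithm. It keeps a running mean and standard deviation per hour of day using Welford's algorithm. It flags a value as anomalous when the value deviates from that hour's mean by more than `k` standard deviations. The hour-of-day granularity, the `k` factor, and the minimum sample count are hypothetical choices.

```java
/** Assumed time-of-day baseline: running mean/std dev per hour of day (UTC), via Welford. */
final class TimeOfDayBaseline {
    private final long[] count = new long[24];
    private final double[] mean = new double[24];
    private final double[] m2 = new double[24]; // running sum of squared deviations

    private static int hourOf(long epochMillis) {
        return (int) Math.floorMod(epochMillis / 3_600_000L, 24L);
    }

    /** Adds one observation to the baseline of its hour of day. */
    void observe(long epochMillis, double value) {
        int h = hourOf(epochMillis);
        count[h]++;
        double delta = value - mean[h];
        mean[h] += delta / count[h];
        m2[h] += delta * (value - mean[h]);
    }

    double mean(int hour) {
        return mean[hour];
    }

    /** Sample standard deviation for the given hour (0 with fewer than two samples). */
    double stdDev(int hour) {
        return count[hour] > 1 ? Math.sqrt(m2[hour] / (count[hour] - 1)) : 0.0;
    }

    /** Dynamic threshold: true when value is more than k std devs away from the hour's mean. */
    boolean isAnomalous(long epochMillis, double value, double k, long minSamples) {
        int h = hourOf(epochMillis);
        if (count[h] < minSamples) {
            return false; // not enough history for this hour yet
        }
        return Math.abs(value - mean[h]) > k * stdDev(h);
    }
}
```

Keying the baseline by hour of day is what lets the same reading be normal at one time of day and anomalous at another.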

The following diagram illustrates how we join VDI metrics with monitor configurations, aggregate data using sliding time windows, and evaluate threshold rules, all within Apache Flink. This process generates alerts, which are then grouped and filtered before being processed further by alert consumers.
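The post doesn't spell out the exact window configuration. Here is a plain-Java sketch of one way to evaluate the example monitor's condition: average latency above 300 ms, sustained for 5 minutes. It assumes that the 30-second aggregates for one desktop pool arrive in order. The check keeps the last N aggregates (N = 5 min / 30 s = 10) and fires only when all of them exceed the threshold. Keeping the last N buckets and advancing one bucket at a time is effectively a sliding window. The class and method names are hypothetical.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/** Sliding evaluation over the last N window aggregates for one key (e.g. one desktop pool). */
final class SustainedThreshold {
    private final double threshold;
    private final int windowsRequired;
    private final Deque<Double> recent = new ArrayDeque<>();

    SustainedThreshold(double threshold, int windowsRequired) {
        this.threshold = threshold;
        this.windowsRequired = windowsRequired;
    }

    /** Adds the next aggregate; returns true when the last N aggregates all exceed the threshold. */
    boolean onAggregate(double value) {
        recent.addLast(value);
        if (recent.size() > windowsRequired) {
            recent.removeFirst(); // slide forward: drop the oldest bucket
        }
        if (recent.size() < windowsRequired) {
            return false; // not enough history yet
        }
        for (double v : recent) {
            if (v <= threshold) {
                return false;
            }
        }
        return true;
    }
}
```

A single aggregate back under the threshold resets the condition, because it stays in the last N buckets until 10 more aggregates have arrived.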

Alert processing and notifications – After an alert is triggered (when a threshold is exceeded) or recovered (when a metric returns to normal levels), the impact processing module assesses its impact to prioritize the response. Notification services then consume the alerts and send messages through email or webhooks. The alert and impact data are then ingested into a time series database.
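A triggered/recovered lifecycle like the one described above maps naturally onto a small per-key state machine. With it, notification services receive one event per transition rather than one per evaluation. The following sketch is an assumption about how such deduplication could work, not a description of Nexthink's impact processing module. The enum and method names are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

/** Per-key alert lifecycle: emits an event only when the status actually changes. */
final class AlertStateMachine {
    enum Status { OK, TRIGGERED }

    enum Transition { TRIGGERED, RECOVERED }

    private final Map<String, Status> statusByKey = new HashMap<>();

    /** Records the latest evaluation for a key and returns the resulting transition, if any. */
    Optional<Transition> evaluate(String key, boolean breaching) {
        Status previous = statusByKey.getOrDefault(key, Status.OK);
        Status current = breaching ? Status.TRIGGERED : Status.OK;
        statusByKey.put(key, current);
        if (previous == Status.OK && current == Status.TRIGGERED) {
            return Optional.of(Transition.TRIGGERED);
        }
        if (previous == Status.TRIGGERED && current == Status.OK) {
            return Optional.of(Transition.RECOVERED);
        }
        return Optional.empty(); // no change, nothing to notify
    }
}
```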

Benefits of the new architecture

One of the key advantages of adopting a streaming-based approach over polling was its ease of configuration and management, especially for a small team of three engineers. There was no cluster to manage, so all we needed to do was provision the service and start coding.

Our prior experience with Kafka and Kafka Streams, combined with the simplicity of a managed service, let us quickly develop and deploy a new alerting system without the overhead of complex infrastructure setup. We used Amazon Managed Service for Apache Flink to spin up a proof of concept within a few hours, so the team could focus on defining the business logic instead of worrying about cluster management.

Initially, we were concerned about the challenges of joining multiple Kafka topics. With our previous Kafka Streams implementation, joined topics required identical partition keys and partition counts, a constraint known as co-partitioning. This created an inflexible architecture, particularly when integrating topics across different business domains. Each domain naturally had its own optimal partitioning strategy, which forced difficult compromises.

Flink, running on Amazon Managed Service for Apache Flink, solved this problem through its internal data repartitioning capabilities. Although Flink still incurs some network traffic when redistributing data across the cluster during joins, the overhead is practically negligible. The resulting architecture is both more scalable, because topics can be scaled independently based on their specific throughput requirements, and easier to maintain, because there are no partition alignment concerns.
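The following plain-Java sketch illustrates why the co-partitioning constraint disappears. Both inputs are re-keyed by the join key inside the job, so records from topics with different partition counts and partitioning keys still meet in the same keyed state. It loosely mimics a keyed two-input operator that keeps the latest monitor configuration per key and enriches each incoming metric. The record, class, and method names are hypothetical. Real Flink code would use keyed state (for example, `ValueState`) rather than a `HashMap` per subtask.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

/** Hypothetical inputs from two topics partitioned differently (by tenant vs. by device). */
record Monitor(String tenantId, double thresholdMs) {}
record Metric(String tenantId, String deviceId, double latencyMs) {}
record Enriched(Metric metric, double thresholdMs) {}

/** Simulates keyBy(tenantId) on both inputs followed by a keyed two-input join. */
final class KeyedJoinSketch {
    private final List<Map<String, Monitor>> stateBySubtask = new ArrayList<>();

    KeyedJoinSketch(int parallelism) {
        for (int i = 0; i < parallelism; i++) {
            stateBySubtask.add(new HashMap<>()); // stands in for keyed state local to one subtask
        }
    }

    /** Routing depends only on the join key, never on the source topic's partition. */
    int subtaskFor(String joinKey) {
        return Math.floorMod(joinKey.hashCode(), stateBySubtask.size());
    }

    /** Config side: remember the latest monitor for this tenant in that subtask's state. */
    void onMonitor(Monitor monitor) {
        stateBySubtask.get(subtaskFor(monitor.tenantId())).put(monitor.tenantId(), monitor);
    }

    /** Metric side: look up the same subtask's state and enrich if a monitor has arrived. */
    Optional<Enriched> onMetric(Metric metric) {
        Monitor monitor = stateBySubtask.get(subtaskFor(metric.tenantId())).get(metric.tenantId());
        return Optional.ofNullable(monitor).map(m -> new Enriched(metric, m.thresholdMs()));
    }
}
```

A metric from a device-partitioned topic and a monitor from a tenant-partitioned topic meet in the same state as long as they share the join key. In Flink, this redistribution is the network shuffle mentioned above.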

This significantly improved our ability to detect and respond to VDI performance degradations in real time while keeping our architecture clean and efficient.

Lessons learned

As with any new technology, adopting Flink for real-time processing came with its own set of challenges and insights.

One of the main difficulties we encountered was observing Flink's internal state. In Kafka Streams, internal state is backed by default by a Kafka changelog topic whose content can be inspected. Flink's architecture, by contrast, makes it inherently difficult to see what is happening inside a running job. This required us to invest in robust logging and monitoring strategies to better understand job execution and debug issues effectively.

Another critical insight emerged around late event handling: managing events whose timestamps fall within a time window's boundaries but that arrive after that window has closed. Flink addresses this challenge through its built-in watermarking mechanism. A watermark is a timestamp-based threshold that tells Flink when to consider all events before a specific time to have arrived. This allows the system to make informed decisions about when to execute time-based operations such as window aggregations. Watermarks flow through the streaming pipeline, enabling Flink to track the progress of event-time processing even with out-of-order events.
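The mechanism can be approximated in plain Java. This is a simplified, single-input model under stated assumptions, not Flink's implementation:

- The watermark trails the highest event time seen by a fixed out-of-orderness bound, similar in spirit to Flink's `WatermarkStrategy.forBoundedOutOfOrderness`.
- A tumbling window fires once the watermark reaches its last millisecond.
- Events for windows that already fired are counted as late and dropped, which corresponds to zero allowed lateness.

The class name and the 30-second window and 5-second bound used in the example are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Single-input model of event-time tumbling windows driven by a bounded-out-of-orderness watermark. */
final class WatermarkedWindows {
    private final long windowMillis;
    private final long maxOutOfOrdernessMillis;
    private long maxEventTime = Long.MIN_VALUE;
    private final TreeMap<Long, Integer> openWindows = new TreeMap<>(); // window start -> event count
    private final List<String> firedWindows = new ArrayList<>();         // "start:count" per fired window
    private int lateDropped = 0;

    WatermarkedWindows(long windowMillis, long maxOutOfOrdernessMillis) {
        this.windowMillis = windowMillis;
        this.maxOutOfOrdernessMillis = maxOutOfOrdernessMillis;
    }

    /** The watermark trails the highest event time seen so far by the out-of-orderness bound. */
    long currentWatermark() {
        return maxEventTime == Long.MIN_VALUE ? Long.MIN_VALUE : maxEventTime - maxOutOfOrdernessMillis;
    }

    void onEvent(long eventTime) {
        long start = eventTime - Math.floorMod(eventTime, windowMillis);
        long lastMillisOfWindow = start + windowMillis - 1;
        if (lastMillisOfWindow <= currentWatermark()) {
            lateDropped++; // its window has already fired (zero allowed lateness in this sketch)
            return;
        }
        openWindows.merge(start, 1, Integer::sum);
        maxEventTime = Math.max(maxEventTime, eventTime);
        fireReadyWindows();
    }

    /** Emits every open window whose last millisecond is at or before the watermark. */
    private void fireReadyWindows() {
        long watermark = currentWatermark();
        while (!openWindows.isEmpty() && openWindows.firstKey() + windowMillis - 1 <= watermark) {
            Map.Entry<Long, Integer> w = openWindows.pollFirstEntry();
            firedWindows.add(w.getKey() + ":" + w.getValue());
        }
    }

    List<String> firedWindows() {
        return List.copyOf(firedWindows);
    }

    int lateDropped() {
        return lateDropped;
    }
}
```

Consider feeding events at 1, 12, 31, 28, 36, and 29 seconds with a 30-second window and a 5-second bound. The 28-second event is accepted even though it arrives after the 31-second event, because the watermark (26 seconds) has not yet passed the end of its window. The 36-second event moves the watermark to 31 seconds, which fires the first window with three events, so the 29-second event that follows is dropped as late. A larger bound trades latency for completeness.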

Although watermarks provide a mechanism to manage late data, they introduce challenges when dealing with multiple input streams running at different speeds. Watermarks work well for events from a single source but can become problematic when joining streams with varying velocities, because they can lead to unintended delays or premature data discards. For example, a slow stream can hold back processing across the entire pipeline, and an idle stream might cause windows to close prematurely. Our implementation required careful tuning of watermark strategies and allowed-lateness parameters to balance processing timeliness with data completeness.
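The effect of mixing fast, slow, and idle inputs follows from how Flink combines watermarks. An operator with several inputs advances its event-time clock to the minimum of its inputs' watermarks. An input marked idle (for example, through `WatermarkStrategy.withIdleness`) is excluded from that minimum. The plain-Java sketch below models only that combination rule, plus the fact that the combined watermark never moves backwards. The input names and timestamps are made up for illustration.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Models how an operator with several inputs derives its event-time watermark (illustrative). */
final class CombinedWatermark {
    private final Map<String, Long> watermarkByInput = new HashMap<>();
    private final Set<String> idleInputs = new HashSet<>();
    private long combined = Long.MIN_VALUE;

    /** A new watermark from one input; receiving it also marks that input as active again. */
    void update(String input, long watermark) {
        watermarkByInput.merge(input, watermark, Long::max); // per-input watermarks never regress
        idleInputs.remove(input);
    }

    /** Marks an input as idle so it no longer holds back the combined watermark. */
    void markIdle(String input) {
        idleInputs.add(input);
    }

    /** Minimum over active inputs; the combined value itself never moves backwards. */
    long current() {
        long min = Long.MAX_VALUE;
        boolean anyActive = false;
        for (Map.Entry<String, Long> e : watermarkByInput.entrySet()) {
            if (!idleInputs.contains(e.getKey())) {
                min = Math.min(min, e.getValue());
                anyActive = true;
            }
        }
        if (anyActive) {
            combined = Math.max(combined, min);
        }
        return combined;
    }
}
```

In the test scenario, a slow input at 40 seconds holds the combined watermark back even though the other input is at 100 seconds. Marking the slow input idle lets the combined watermark jump to 100 seconds. When the input resumes at 50 seconds, its events for earlier windows are now late. This is how excluding an idle input can close windows prematurely.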

Our transition from Kafka Streams to Apache Flink proved smoother than expected. Teams with Java backgrounds and prior experience with Kafka Streams found Flink's programming model intuitive and easy to use. The DataStream API offers familiar concepts and patterns, and Flink's more advanced features can be adopted incrementally as needed. This gradual learning curve let our developers become productive quickly, focusing first on core stream processing tasks before moving on to more advanced concepts such as state management and late event processing.

The future of Flink at Nexthink

Real-time alerting is now deployed to production and available to our clients. A major success of this project was introducing a technology as an alternative to Kafka Streams with very low management requirements, guaranteed scalability, data-management flexibility, and comparable cost.

The impact on the Nexthink alerting system was significant because database polling is no longer the only way we evaluate alerts. We're therefore already assessing the timeframe for onboarding other alerting use cases to real-time evaluation with Flink. This will reduce database load and also make alert triggering more accurate.

Yet the impact of Flink isn't limited to the Nexthink alerting system. We now have a proven, production-ready alternative for services whose scalability is limited by the number of partitions of the topics they consume. We're therefore actively evaluating converting more services to Flink so they can scale out more flexibly.

Conclusion

Amazon Managed Service for Apache Flink has been transformative for our real-time alerting system at Nexthink. By handling the complex infrastructure management, AWS enabled our team to deploy a sophisticated streaming solution in less than a month, keeping our focus on delivering business value rather than on managing Flink clusters.

Flink's capabilities have proven it to be more than an alternative to Kafka Streams. It has become a compelling first choice both for new projects and for refactoring existing features. Windowed processing, late event management, and stateful streaming operations have made complex use cases remarkably simple to implement. As our development teams continue to explore Flink's potential, we're increasingly confident that it will play a central role in Nexthink's real-time data processing architecture going forward.

To get started with Amazon Managed Service for Apache Flink, explore the getting started resources and the hands-on workshop. To learn more about Nexthink's broader journey with AWS, read the blog post on Nexthink's MSK-based architecture.

About the authors

Nikos Tragaras is a Principal Software Architect at Nexthink with around two decades of experience building distributed systems, from traditional architectures to modern cloud-native platforms. He has worked extensively with streaming technologies, focusing on reliability and performance at scale. Passionate about programming, he enjoys building clean solutions to complex engineering problems.

Raphaël Afanyan is a Software Engineer and Tech Lead of the Alerts team at Nexthink. Over the years, he has worked on designing and scaling data processing systems and played a key role in building Nexthink's alerting platform. He now collaborates across teams to bring innovative product ideas to life, from backend architecture to polished user interfaces.

Simone Pomata is a Senior Solutions Architect at AWS. He has worked enthusiastically in the tech industry for more than 10 years. At AWS, he helps customers succeed in building new technologies every day.

Subham Rakshit is a Senior Streaming Solutions Architect for Analytics at AWS based in the UK. He works with customers to design and build streaming architectures so they can get value from analyzing their streaming data. His two young daughters keep him occupied most of the time outside work, and he loves solving jigsaw puzzles with them. Connect with him on LinkedIn.

Lorenzo Nicora works as a Senior Streaming Solutions Architect at AWS, helping customers across EMEA. He has been building cloud-centered, data-intensive systems for over 25 years, working across industries through both consultancies and product companies. He has used open source technologies extensively and contributed to several projects, including Apache Flink.
