Amazon Redshift supports querying data stored in Apache Iceberg tables managed by Amazon S3 Tables, which we previously covered in a getting started blog post. While that post helps you get started using Amazon Redshift with Amazon S3 Tables, there are additional steps you need to consider when working with your data in production environments, including who has access to your data and with what level of permissions.

In this post, we build on the first post in this series to show you how to set up an Apache Iceberg data lake catalog using Amazon S3 Tables and provide different levels of access control to your data. Through this example, you'll set up fine-grained access controls for multiple users and see how this works using Amazon Redshift. We'll also review an example that simultaneously uses data residing in both Amazon Redshift and Amazon S3 Tables, enabling a unified analytics experience.

Solution overview

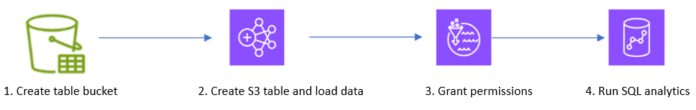

In this solution, we show how to query a dataset stored in Amazon S3 Tables for further analysis using data managed in Amazon Redshift. Specifically, we go through the steps shown in the preceding figure to load a dataset into Amazon S3 Tables, grant appropriate permissions, and finally run queries to analyze our dataset for trends and insights.

In this post, you walk through the following steps:

Creating an Amazon S3 table bucket: In the AWS Management Console for Amazon S3, create an Amazon S3 table bucket and integrate it with other AWS analytics services.

Creating an S3 table and loading data: Run Spark SQL in Amazon EMR to create a namespace and an S3 table and load diabetic patients' visit data.

Granting permissions: Grant fine-grained access controls in AWS Lake Formation.

Running SQL analytics: Query S3 Tables using the auto-mounted S3 table catalog.

This post uses data from a healthcare use case to analyze information about diabetic patients and identify the frequency of age groups admitted to the hospital. You'll use the preceding steps to perform this analysis.

Prerequisites

To begin, you need to add the Amazon Redshift service-linked role, AWSServiceRoleForRedshift, as a read-only administrator in Lake Formation. You can run the following AWS Command Line Interface (AWS CLI) command to add the role.

Replace <account-id> with your account number and <region> with the AWS Region that you are using. You can run this command from AWS CloudShell or through the AWS CLI configured in your environment.

aws lakeformation put-data-lake-settings \
--region <region> \
--data-lake-settings \
'{
"DataLakeAdmins": [{"DataLakePrincipalIdentifier":"arn:aws:iam::<account-id>:role/Admin"}],
"ReadOnlyAdmins": [{"DataLakePrincipalIdentifier":"arn:aws:iam::<account-id>:role/aws-service-role/redshift.amazonaws.com/AWSServiceRoleForRedshift"}],
"CreateDatabaseDefaultPermissions": [],
"CreateTableDefaultPermissions": [],
"Parameters": {"CROSS_ACCOUNT_VERSION":"4","SET_CONTEXT":"TRUE"}
}'
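The settings document in the command above is ordinary JSON, so it can be generated rather than edited by hand. The following is a minimal Python sketch, assuming you only need to vary the account ID; the helper name `build_data_lake_settings` and the account ID `111122223333` are placeholders introduced for illustration, not part of the original walkthrough.

```python
import json


def build_data_lake_settings(account_id: str, admin_role: str = "Admin") -> dict:
    """Return the document passed to --data-lake-settings for one account."""
    iam_prefix = f"arn:aws:iam::{account_id}:role"
    redshift_role = "aws-service-role/redshift.amazonaws.com/AWSServiceRoleForRedshift"
    return {
        "DataLakeAdmins": [
            {"DataLakePrincipalIdentifier": f"{iam_prefix}/{admin_role}"}
        ],
        # The Redshift service-linked role is added as a read-only administrator.
        "ReadOnlyAdmins": [
            {"DataLakePrincipalIdentifier": f"{iam_prefix}/{redshift_role}"}
        ],
        "CreateDatabaseDefaultPermissions": [],
        "CreateTableDefaultPermissions": [],
        "Parameters": {"CROSS_ACCOUNT_VERSION": "4", "SET_CONTEXT": "TRUE"},
    }


# 111122223333 is a placeholder account ID, not a real account.
settings = build_data_lake_settings("111122223333")
payload = json.dumps(settings, indent=2)
print(payload)
```

The printed JSON can be pasted as the value of the `--data-lake-settings` argument. Generating it this way mainly guards against quoting and bracket mistakes in the shell.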

You also need to create or use an existing Amazon Elastic Compute Cloud (Amazon EC2) key pair that will be used for SSH connections to cluster instances. For more information, see Amazon EC2 key pairs.

The examples in this post require the following AWS services and features:

The CloudFormation template that follows creates the following resources:

An Amazon EMR 7.6.0 cluster with Apache Iceberg packages

An Amazon Redshift Serverless instance

An AWS Identity and Access Management (IAM) instance profile, service role, and security groups

IAM roles with required policies

Two IAM users: nurse and analyst

Download the CloudFormation template, or use the Launch Stack button to launch it in your AWS environment. Note that network routes are directed to 255.255.255.255/32 for security reasons. Replace the routes with your organization's IP addresses. Also enter your IP or VPN range for Jupyter Notebook access in the SourceCidrForNotebook parameter in CloudFormation.


Download the diabetic encounters and patient datasets and upload them to your S3 bucket. These files are from a publicly available open dataset.

This sample dataset is used to highlight this use case; the techniques covered can be adapted to your workflows. The following are more details about this dataset:

diabetic_encounters_s3.csv: Contains information about patient visits for diabetic treatment.

encounter_id: Unique number to refer to an encounter with a patient who has diabetes.

patient_nbr: Unique number to identify a patient.

num_procedures: Number of medical procedures administered.

num_medications: Number of medications provided during the visit.

insulin: Insulin level observed. Valid values are regular, up, and no.

time_in_hospital: Amount of time in hospital in days.

readmitted: Readmitted to hospital within 30 days or after 30 days.

diabetic_patients_rs.csv: Contains patient information such as age group, gender, race, and number of visits.

patient_nbr: Unique number to identify a patient

race: Patient's race

gender: Patient's gender

age_grp: Patient's age group. Valid values are 0-10, 10-20, 20-30, and so on

number_outpatient: Number of outpatient visits

number_emergency: Number of emergency room visits

number_inpatient: Number of inpatient visits
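Before uploading, it can be worth confirming that a file's header carries the columns described above. The following is a minimal Python sketch, assuming the CSV header uses exactly the column names listed for diabetic_encounters_s3.csv; the helper `missing_columns` and the sample row are made up for illustration.

```python
import csv
import io

# Column names as described for diabetic_encounters_s3.csv (assumed to match the header).
EXPECTED_COLUMNS = [
    "encounter_id", "patient_nbr", "num_procedures", "num_medications",
    "insulin", "time_in_hospital", "readmitted",
]


def missing_columns(csv_file, expected=EXPECTED_COLUMNS):
    """Return the expected columns that are absent from the CSV header row."""
    header = next(csv.reader(csv_file), [])
    present = {name.strip() for name in header}
    return [name for name in expected if name not in present]


# Made-up sample standing in for the real file.
sample = io.StringIO(
    "encounter_id,patient_nbr,num_procedures,num_medications,"
    "insulin,time_in_hospital,readmitted\n"
    "1001,42,0,5,No,3,<30\n"
)
print(missing_columns(sample))  # prints []
```

To check a real file, pass an open file handle, for example `missing_columns(open("diabetic_encounters_s3.csv", newline=""))`.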

Now that you've set up the prerequisites, you're ready to connect Amazon Redshift to query Apache Iceberg data stored in Amazon S3 Tables.

Create an S3 table bucket

Before you can use Amazon Redshift to query the data in an Amazon S3 table, you must create the table, starting with a table bucket to hold it.

Sign in to the AWS Management Console and go to Amazon S3.

Go to Table buckets. This is an option in the Amazon S3 console.

In the Table buckets view, there's a section that describes Integration with AWS analytics services. Choose Enable integration if you haven't previously set this up. This sets up the integration with AWS analytics services, including Amazon Redshift, Amazon EMR, and Amazon Athena.

Wait a few seconds for the status to change to Enabled.

Choose Create table bucket and enter a bucket name. You can use any name that follows the naming conventions. In this example, we used the bucket name patient-encounter. When you're finished, choose Create table bucket.

After the S3 table bucket is created, you'll be redirected to the Table buckets list. Copy the Amazon Resource Name (ARN) of the table bucket you just created to use in the next section.

Now that your S3 table bucket is set up, you can load data.

Create S3 table and load data

The CloudFormation template in the prerequisites created an Apache Spark cluster using Amazon EMR. You'll use the Amazon EMR cluster to load data into Amazon S3 Tables.

Connect to the Apache Spark primary node using SSH or through Jupyter Notebooks. Note that an Amazon EMR cluster was launched when you deployed the CloudFormation template.

Enter the following command to launch the Spark shell and initialize a Spark session for Iceberg that connects to your S3 table bucket. Replace <region>, <account-id>, and <bucket-name> with your Region, account, and bucket name.

spark-shell \
--packages "org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.4.1,software.amazon.awssdk:bundle:2.20.160,software.amazon.awssdk:url-connection-client:2.20.160" \
--master "local[*]" \
--conf "spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions" \
--conf "spark.sql.defaultCatalog=spark_catalog" \
--conf "spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkCatalog" \
--conf "spark.sql.catalog.spark_catalog.type=rest" \
--conf "spark.sql.catalog.spark_catalog.uri=https://s3tables.<region>.amazonaws.com/iceberg" \
--conf "spark.sql.catalog.spark_catalog.warehouse=arn:aws:s3tables:<region>:<account-id>:bucket/<bucket-name>" \
--conf "spark.sql.catalog.spark_catalog.rest.sigv4-enabled=true" \
--conf "spark.sql.catalog.spark_catalog.rest.signing-name=s3tables" \
--conf "spark.sql.catalog.spark_catalog.rest.signing-region=<region>" \
--conf "spark.sql.catalog.spark_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO" \
--conf "spark.hadoop.fs.s3a.aws.credentials.provider=org.apache.hadoop.fs.s3a.SimpleAWSCredentialProvider" \
--conf "spark.sql.catalog.spark_catalog.rest-metrics-reporting-enabled=false"

See Accessing Amazon S3 Tables with Amazon EMR for upgrades to software.amazon.s3tables package versions.
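Most of the catalog settings in the Spark shell command are derived from three values: the Region, the account ID, and the table bucket name. The following is a rough Python sketch of that derivation, covering only the Iceberg REST catalog settings and not the `--packages`, `--master`, or Hadoop credential options. The function names and the example values `us-east-1` and `111122223333` are assumptions for illustration.

```python
def s3tables_catalog_conf(region, account_id, bucket, catalog="spark_catalog"):
    """Build the Iceberg REST catalog settings for one S3 table bucket."""
    prefix = f"spark.sql.catalog.{catalog}"
    return {
        "spark.sql.extensions":
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
        "spark.sql.defaultCatalog": catalog,
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.type": "rest",
        f"{prefix}.uri": f"https://s3tables.{region}.amazonaws.com/iceberg",
        # The warehouse is the ARN of the table bucket copied earlier.
        f"{prefix}.warehouse": f"arn:aws:s3tables:{region}:{account_id}:bucket/{bucket}",
        f"{prefix}.rest.sigv4-enabled": "true",
        f"{prefix}.rest.signing-name": "s3tables",
        f"{prefix}.rest.signing-region": region,
        f"{prefix}.io-impl": "org.apache.iceberg.aws.s3.S3FileIO",
        f"{prefix}.rest-metrics-reporting-enabled": "false",
    }


def to_cli_args(conf):
    """Flatten the settings into alternating --conf / key=value arguments."""
    args = []
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args


# Placeholder Region and account ID; the bucket name is the one used in this post.
conf = s3tables_catalog_conf("us-east-1", "111122223333", "patient-encounter")
args = to_cli_args(conf)
for flag, setting in zip(args[0::2], args[1::2]):
    print(f'{flag} "{setting}"')
```

Keeping the Region in one place avoids the easy mistake of pointing the endpoint URI and the signing Region at different Regions.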

Next, create a namespace that will link your S3 table bucket with your Amazon Redshift Serverless workgroup. We chose encounters as the namespace for this example, but you can use a different name. Use the following Spark SQL command:

spark.sql("CREATE NAMESPACE IF NOT EXISTS s3tablesbucket.encounters")

Create an Apache Iceberg table named diabetic_encounters.

spark.sql(
""" CREATE TABLE IF NOT EXISTS s3tablesbucket.encounters.`diabetic_encounters` (
encounter_id INT,
patient_nbr INT,
num_procedures INT,
num_medications INT,
insulin STRING,
time_in_hospital INT,
readmitted STRING
)
USING iceberg """
)

Load the CSV into the S3 table encounters.diabetic_encounters. Replace <s3-file-path> with the Amazon S3 file path of the diabetic_encounters_s3.csv file you uploaded earlier.

val df = spark.read.format("csv").option("header", "true").option("inferSchema", "true").load("<s3-file-path>")

df.writeTo("s3tablesbucket.encounters.diabetic_encounters").using("Iceberg").tableProperty("format-version", "2").createOrReplace()

Query the data to validate it using the Spark shell.

spark.sql(""" SELECT * FROM s3tablesbucket.encounters.diabetic_encounters """).show()

Grant permissions

In this section, you grant fine-grained access control to the two IAM users created as part of the prerequisites.

nurse: Grant access to all columns in the diabetic_encounters table

analyst: Grant access to only the {encounter_id, patient_nbr, readmitted} columns
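The two access levels above can also be written down as data. The following Python sketch builds request dictionaries in the shape of the Lake Formation GrantPermissions request as I understand it (a `Table` resource for all columns, a `TableWithColumns` resource for a column subset); treat the field names and the catalog ID format as assumptions to verify against the API reference. The helper `select_grant` and the account ID `111122223333` are hypothetical.

```python
def select_grant(account_id, bucket, database, table, principal_arn, columns=None):
    """Build a SELECT grant request; columns=None means all columns."""
    target = {
        # Assumed catalog ID format for an S3 table bucket catalog.
        "CatalogId": f"{account_id}:s3tablescatalog/{bucket}",
        "DatabaseName": database,
        "Name": table,
    }
    if columns is None:
        resource = {"Table": target}
    else:
        resource = {"TableWithColumns": {**target, "ColumnNames": list(columns)}}
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": resource,
        "Permissions": ["SELECT"],
    }


account = "111122223333"  # placeholder account ID
nurse_grant = select_grant(
    account, "patient-encounter", "encounters", "diabetic_encounters",
    f"arn:aws:iam::{account}:user/nurse",
)
analyst_grant = select_grant(
    account, "patient-encounter", "encounters", "diabetic_encounters",
    f"arn:aws:iam::{account}:user/analyst",
    columns=["encounter_id", "patient_nbr", "readmitted"],
)
print(nurse_grant)
print(analyst_grant)
```

The sketch only constructs the requests and makes no AWS calls. The console steps that follow remain the path this post uses.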

First, grant access to the diabetic_encounters table for the nurse user.

In AWS Lake Formation, choose Data permissions.

On the Grant permissions page, under Principals, select IAM users and roles.

Select the IAM user nurse.

For Catalogs, select <account-id>:s3tablescatalog/patient-encounter.

For Databases, select encounters.

Scroll down. For Tables, select diabetic_encounters.

For Table permissions, select Select.

For Data permissions, select All data access.

Choose Grant. This grants select access on all the columns in diabetic_encounters to the nurse user.

Now grant access to the diabetic_encounters table for the analyst user.

Repeat the same steps that you followed for the nurse user up to step 7 in the previous section, selecting the IAM user analyst instead of nurse.

For Data permissions, select Column-based access. Select Include columns and select the encounter_id, patient_nbr, and readmitted columns.

Choose Grant. This grants select access on the encounter_id, patient_nbr, and readmitted columns in diabetic_encounters to the analyst user.

Run SQL analytics

In this section, you'll access the data in the diabetic_encounters S3 table as nurse and analyst to learn how fine-grained access control works. You'll also combine data from the S3 table with a local table in Amazon Redshift using a single query.

In the Amazon Redshift Query Editor v2, connect to serverless:rs-demo-wg, an Amazon Redshift Serverless instance created by the CloudFormation template.

Select Database user name and password as the connection method and connect using the superuser awsuser. Provide the password you gave as an input parameter to the CloudFormation stack.

Run the following commands to create the IAM users nurse and analyst in Amazon Redshift.

CREATE USER "IAM:nurse" password disable;

CREATE USER "IAM:analyst" password disable;

Amazon Redshift automatically mounts the Data Catalog as an external database named awsdatacatalog to simplify accessing your tables in the Data Catalog. You can grant usage access to this database for the IAM users:

GRANT USAGE ON DATABASE awsdatacatalog to "IAM:nurse";

GRANT USAGE ON DATABASE awsdatacatalog to "IAM:analyst";

For the next steps, you must first sign in to the AWS console as the nurse IAM user. You can find the IAM user's password in the AWS Secrets Manager console by retrieving the value from the secret ending with iam-users-credentials. See Get a secret value using the AWS console for more information.

After you've signed in to the console, navigate to the Amazon Redshift Query Editor v2.

Sign in to your Amazon Redshift cluster as IAM:nurse. You can do this by connecting to serverless:rs-demo-wg as a Federated user. This applies the permissions provided in Lake Formation for accessing your data in Amazon S3 Tables.

Run the following SQL to query the S3 table diabetic_encounters.

SELECT * FROM "patient-encounter@s3tablescatalog"."encounters"."diabetic_encounters";

This returns all the data in the S3 table for diabetic_encounters across every column in the table, as shown in the following figure:

Recall that you also created an IAM user called analyst that only has access to the encounter_id, patient_nbr, and readmitted columns. Let's verify that the analyst user can only access these columns.

Sign in to the AWS console as the analyst IAM user and open the Amazon Redshift Query Editor v2 using the same steps as above. Run the same query as before:

SELECT * FROM "patient-encounter@s3tablescatalog"."encounters"."diabetic_encounters";

This time, you should see only the encounter_id, patient_nbr, and readmitted columns:

Now that you've seen how you can access data in Amazon S3 Tables from Amazon Redshift while setting the levels of access required for your users, let's see how to join data in S3 Tables to tables that already exist in Amazon Redshift.

Combine data from an S3 table and a local table in Amazon Redshift

For this section, you'll load data into your local Amazon Redshift cluster. After this is complete, you can analyze the datasets in both Amazon Redshift and S3 Tables.

First, as the analyst federated user, sign in to your Amazon Redshift cluster using Amazon Redshift Query Editor v2.

Use the following SQL command to create a table that contains patient information:

CREATE TABLE public.patient_info (
patient_nbr integer ENCODE az64,
race character varying(256) ENCODE lzo,
gender character varying(256) ENCODE lzo,
age_grp character varying(256) ENCODE lzo,
number_outpatient integer ENCODE az64,
number_emergency integer ENCODE az64,
number_inpatient integer ENCODE az64);

Copy patient information from the CSV file that's stored in your Amazon S3 object bucket. Replace <file-location> with the location of the file in your S3 bucket.

COPY dev.public.patient_info FROM 's3://<file-location>'
IAM_ROLE default
FORMAT AS CSV DELIMITER ','
IGNOREHEADER 1;

Use the following query to review the sample data and verify that the command was successful. This shows information from 10 patients, as shown in the following figure.

SELECT * FROM public.patient_info limit 10;

Now combine data from the Amazon S3 table diabetic_encounters and the Amazon Redshift table patient_info. In this example, the query fetches information about which age group was most frequently readmitted to the hospital within 30 days of an initial hospital visit:

SELECT
age_grp,
count(*) readmission_count
FROM
"patient-encounter@s3tablescatalog"."encounters"."diabetic_encounters" a
JOIN public.patient_info b ON b.patient_nbr = a.patient_nbr
WHERE
a.readmitted='<30'
GROUP BY age_grp
ORDER BY readmission_count DESC
LIMIT 1;

This query returns results showing an age group and the number of readmissions, as shown in the following figure.
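To see the logic of the join without any AWS resources, the following Python sketch runs the same query shape against an in-memory SQLite database. SQLite stands in for both the S3 table and the Redshift table here, and every row is made up for illustration; only the column names come from this post.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE diabetic_encounters (
        encounter_id INTEGER, patient_nbr INTEGER, readmitted TEXT);
    CREATE TABLE patient_info (patient_nbr INTEGER, age_grp TEXT);
""")

# Made-up rows: three readmissions within 30 days, two other encounters.
conn.executemany(
    "INSERT INTO diabetic_encounters VALUES (?, ?, ?)",
    [(101, 1, "<30"), (102, 2, "<30"), (103, 3, "<30"),
     (104, 3, "NO"), (105, 1, ">30")],
)
conn.executemany(
    "INSERT INTO patient_info VALUES (?, ?)",
    [(1, "70-80"), (2, "70-80"), (3, "50-60")],
)

# Same structure as the Redshift query: filter, join, group, keep the top group.
top_group = conn.execute("""
    SELECT age_grp, count(*) AS readmission_count
    FROM diabetic_encounters a
    JOIN patient_info b ON b.patient_nbr = a.patient_nbr
    WHERE a.readmitted = '<30'
    GROUP BY age_grp
    ORDER BY readmission_count DESC
    LIMIT 1
""").fetchone()
print(top_group)  # ('70-80', 2)
```

The query reads only patient_nbr and readmitted from the encounters table, which is why it still works for a user restricted to the encounter_id, patient_nbr, and readmitted columns.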

Cleanup

To clean up your resources, delete the stack you deployed using AWS CloudFormation. For instructions, see Deleting a stack on the AWS CloudFormation console.

Conclusion

In this post, you walked through an end-to-end process for setting up security and governance controls for Apache Iceberg data stored in Amazon S3 Tables and accessing it from Amazon Redshift. This includes creating S3 tables, loading data into them, registering the tables in a data lake catalog, setting up access controls, and querying the data using Amazon Redshift. You also learned how to combine data from Amazon S3 Tables and local Amazon Redshift tables stored in Redshift Managed Storage in a single query, enabling a seamless, unified analytics experience. Try out these features and see Working with Amazon S3 Tables and table buckets for more details. We welcome your feedback in the comments section.

About the Authors

Satesh Sonti is a Sr. Analytics Specialist Solutions Architect based out of Atlanta, specializing in building enterprise data platforms, data warehousing, and analytics solutions. He has over 19 years of experience in building data assets and leading complex data platform programs for banking and insurance clients across the globe.


Jonathan Katz is a Principal Product Manager - Technical on the Amazon Redshift team and is based in New York. He is a Core Team member of the open source PostgreSQL project and an active open source contributor, including PostgreSQL and the pgvector project.
