OpenAI’s long-awaited return to the “open” of its namesake occurred yesterday with the release of two new large language models (LLMs): gpt-oss-120B and gpt-oss-20B.

But despite achieving technical benchmarks on par with OpenAI’s other powerful proprietary AI model offerings, the broader AI developer and user community’s initial response has so far been all over the map. If this release were a movie premiering and being graded on Rotten Tomatoes, we’d be at a near 50% split, based on my observations.

First some background: OpenAI has released these two new text-only language models (no image generation or analysis), both under the permissive open source Apache 2.0 license. It is the first time since 2019 (before ChatGPT) that the company has done so with a cutting-edge language model.

The entire ChatGPT era of the last 2.7 years has so far been powered by proprietary or closed-source models, ones that OpenAI controlled and that users had to pay to access (or use a free tier subject to limits), with limited customizability and no way to run them offline or on private computing hardware.

But that all changed thanks to the release of the pair of gpt-oss models yesterday: one larger and more powerful, for use on a single Nvidia H100 GPU at, say, a small or medium-sized enterprise’s data center or server farm, and an even smaller one that works on a single consumer laptop or desktop PC like the kind in your home office.

Of course, the models being so new, it’s taken several hours for the AI power user community to independently run and test them out on their own individual benchmarks (measurements) and tasks.

And now we’re getting a wave of feedback ranging from optimistic enthusiasm about the potential of these powerful, free, and efficient new models to an undercurrent of dissatisfaction and dismay with what some users see as significant problems and limitations, especially compared to the wave of similarly Apache 2.0-licensed, powerful, open source, multimodal LLMs from Chinese startups (which can also be taken, customized, and run locally on U.S. hardware for free by U.S. companies, or companies anywhere else around the world).

High benchmarks, but still behind Chinese open source leaders

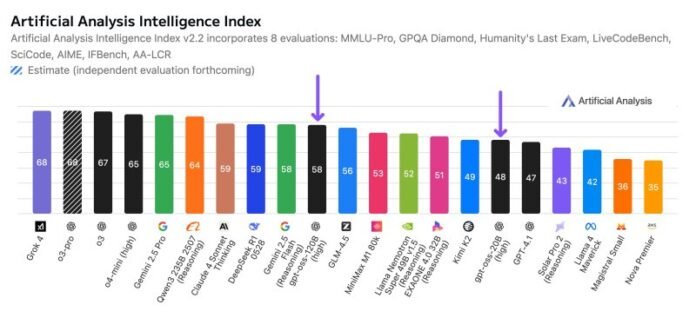

Intelligence benchmarks place the gpt-oss models ahead of most American open-source offerings. According to independent third-party AI benchmarking firm Artificial Analysis, gpt-oss-120B is “the most intelligent American open weights model,” though it still falls short of Chinese heavyweights like DeepSeek R1 and Qwen3 235B.

“On reflection, that’s all they did. Mogged on benchmarks,” wrote self-proclaimed DeepSeek “stan” @teortaxesTex. “No good derivative models will be trained… No new usecases created… Barren claim to bragging rights.”

That skepticism is echoed by pseudonymous open source AI researcher Teknium (@Teknium1), co-founder of rival open source AI model provider Nous Research, who called the release “a legit nothing burger” on X and predicted a Chinese model will soon eclipse it. “Overall very disappointed and I legitimately came open minded to this,” they wrote.

Bench-maxxing on math and coding at the expense of writing?

Other criticism focused on the gpt-oss models’ apparent narrow usefulness.

Influencer “Lisan al Gaib” (@scaling01) noted that the models excel at math and coding but “completely lack taste and common sense.” He added, “So it’s just a math model?”

In creative writing tests, some users found the model injecting equations into poetic outputs. “This is what happens when you benchmarkmax,” Teknium remarked, sharing a screenshot where the model added an integral formula mid-poem.

And @kalamaze, a researcher at decentralized AI model training company Prime Intellect, wrote that “gpt-oss-120b knows less about the world than what a good 32b does. probably wanted to avoid copyright issues so they likely pretrained on majority synth. pretty devastating stuff”

Former Googler and independent AI developer Kyle Corbitt agreed that the gpt-oss pair of models seemed to have been trained mainly on synthetic data (that is, data generated by an AI model specifically for the purposes of training a new one), making it “extremely spiky.”

It’s “great at the tasks it’s trained on, really bad at everything else,” Corbitt wrote, i.e., great on coding and math problems, and bad at more linguistic tasks like creative writing or report generation.

In other words, the charge is that OpenAI deliberately trained the model on more synthetic data than real world facts and figures in order to avoid using copyrighted data scraped from websites and other repositories it doesn’t own or have license to use. That is something it and many other leading gen AI companies have been accused of in the past, and they face ongoing lawsuits as a result.

Others speculated OpenAI may have trained the model on primarily synthetic data to avoid safety and security issues, resulting in worse quality than if it had been trained on more real world (and presumably copyrighted) data.

Concerning third-party benchmark results

Moreover, evaluations of the models on third-party benchmarking tests have turned up concerning metrics in some users’ eyes.

SpeechMap, which measures the performance of LLMs in complying with user prompts to generate disallowed, biased, or politically sensitive outputs, showed compliance scores for gpt-oss-120B hovering under 40%, near the bottom of peer open models. That indicates resistance to following user requests and a tendency to default to guardrails, potentially at the expense of providing accurate information.

In Aider’s Polyglot evaluation, gpt-oss-120B scored just 41.8% in multilingual reasoning, far below competitors like Kimi-K2 (59.1%) and DeepSeek-R1 (56.9%).

Some users also said their tests indicated the model is oddly resistant to generating criticism of China or Russia, a contrast to its treatment of the US and EU, raising questions about bias and training data filtering.

Other experts have applauded the release and what it signals for U.S. open source AI

To be fair, not all the commentary is negative. Software engineer and close AI watcher Simon Willison called the release “really impressive” on X, elaborating in a blog post on the models’ efficiency and ability to achieve parity with OpenAI’s proprietary o3-mini and o4-mini models.

He praised their strong performance on reasoning and STEM-heavy benchmarks, and hailed the new “Harmony” prompt template format, which offers developers more structured terms for guiding model responses, and support for third-party tool use as meaningful contributions.

In a lengthy X post, Clem Delangue, CEO and co-founder of AI code sharing and open source community Hugging Face, encouraged users not to rush to judgment, pointing out that inference for these models is complex, and that early issues could be due to infrastructure instability and insufficient optimization among hosting providers.

“The power of open-source is that there’s no cheating,” Delangue wrote. “We’ll uncover all the strengths and limitations… progressively.”

Even more cautious was Ethan Mollick, a professor at the Wharton School of Business at the University of Pennsylvania, who wrote on X that “The US now likely has the leading open weights models (or close to it),” but questioned whether this is a one-off by OpenAI. “The lead will evaporate quickly as others catch up,” he noted, adding that it’s unclear what incentives OpenAI has to keep the models updated.

Nathan Lambert, a leading AI researcher at the rival open source lab Allen Institute for AI (Ai2) and commentator, praised the symbolic significance of the release on his blog Interconnects, calling it “a phenomenal step for the open ecosystem, especially for the West and its allies, that the most known brand in the AI space has returned to openly releasing models.”

But he cautioned on X that gpt-oss is “unlikely to meaningfully slow down (Chinese e-commerce giant Alibaba’s AI team) Qwen,” citing its usability, performance, and variety.

He argued the release marks an important shift in the U.S. toward open models, but that OpenAI still has a “long path back” to catch up in practice.

A split verdict

The verdict, for now, is split.

OpenAI’s gpt-oss models are a landmark in terms of licensing and accessibility.

But while the benchmarks look solid, the real-world “vibes,” as many users describe them, are proving less compelling.

Whether developers can build strong applications and derivatives on top of gpt-oss will determine whether the release is remembered as a breakthrough or a blip.