Data environments in data-driven organizations are changing to meet the growing demands for analytics, including business intelligence (BI) dashboarding, ad hoc querying, data science, machine learning (ML), and generative AI. These organizations have a huge demand for lakehouse solutions that combine the best of data warehouses and data lakes to simplify data management with easy access to all data from their preferred engines.

Amazon SageMaker Lakehouse unifies all your data across Amazon Simple Storage Service (Amazon S3) data lakes and Amazon Redshift data warehouses, helping you build powerful analytics and artificial intelligence and machine learning (AI/ML) applications on a single copy of data. SageMaker Lakehouse gives you the flexibility to access and query your data in place with all Apache Iceberg compatible tools and engines. It secures your data in the lakehouse by defining fine-grained permissions, which are consistently applied across all analytics and ML tools and engines. You can bring data from operational databases and applications into your lakehouse in near real time through zero-ETL integrations. You can also access and query data in place with federated query capabilities across third-party data sources through Amazon Athena.

With SageMaker Lakehouse, you can access tables stored in Amazon Redshift managed storage (RMS) through Iceberg APIs, using the Iceberg REST catalog backed by AWS Glue Data Catalog. This expands your data integration workload across data lakes and data warehouses, enabling seamless access to diverse data sources.

Amazon SageMaker Unified Studio, Amazon EMR 7.5.0 and higher, and AWS Glue 5.0 natively support SageMaker Lakehouse. This post describes how to integrate data on RMS tables through Apache Spark using SageMaker Unified Studio, Amazon EMR 7.5.0 and higher, and AWS Glue 5.0.

How to access RMS tables through Apache Spark on AWS Glue and Amazon EMR

With SageMaker Lakehouse, RMS tables are accessible through the Apache Iceberg REST catalog. Open source engines such as Apache Spark are compatible with Apache Iceberg, and they can interact with RMS tables by configuring this Iceberg REST catalog. You can learn more in Connecting to the Data Catalog using AWS Glue Iceberg REST extension endpoint.

Note that the Iceberg REST extensions endpoint is used when you access RMS tables. This endpoint is accessible through the Apache Iceberg AWS Glue Data Catalog extensions, which come preinstalled on AWS Glue 5.0 and Amazon EMR 7.5.0 or higher. The extension library enables access to RMS tables using the Amazon Redshift connector for Apache Spark.

To access RMS backed catalog databases from Spark, each RMS database requires its own Spark session catalog configuration. Here are the required Spark configurations:

| Spark config key | Value |
| --- | --- |
| spark.sql.catalog.{catalog_name} | org.apache.iceberg.spark.SparkCatalog |
| spark.sql.catalog.{catalog_name}.type | glue |
| spark.sql.catalog.{catalog_name}.glue.id | {account_id}:{rms_catalog_name}/{database_name} |
| spark.sql.catalog.{catalog_name}.client.region | {aws_region} |
| spark.sql.extensions | org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions |

Configuration parameters:

{catalog_name}: Your chosen name for referencing the RMS catalog database in your application code

{rms_catalog_name}: The RMS catalog name as shown in the AWS Lake Formation catalogs section

{database_name}: The RMS database name

{aws_region}: The AWS Region where the RMS catalog is located
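
The configuration above can be expressed as a small helper. This is a minimal sketch rather than part of the original walkthrough: the function name `rms_catalog_conf`, the second catalog name `rmsother`, the database `otherdb`, and the account ID `123456789012` are hypothetical placeholders. It shows one set of catalog keys being generated per RMS database, because each database needs its own Spark catalog entry.

```python
ICEBERG_EXTENSIONS = "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions"


def rms_catalog_conf(catalog_name, account_id, rms_catalog_name, database_name, aws_region):
    """Return the Spark settings for one RMS database as a plain dict."""
    prefix = f"spark.sql.catalog.{catalog_name}"
    return {
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.type": "glue",
        f"{prefix}.glue.id": f"{account_id}:{rms_catalog_name}/{database_name}",
        f"{prefix}.client.region": aws_region,
        "spark.sql.extensions": ICEBERG_EXTENSIONS,
    }


# One catalog entry per RMS database; all values below are placeholders.
conf = {}
conf.update(rms_catalog_conf("rmscatalog", "123456789012", "rms-catalog-demo", "dev", "us-east-2"))
conf.update(rms_catalog_conf("rmsother", "123456789012", "rms-catalog-demo", "otherdb", "us-east-2"))

for key, value in sorted(conf.items()):
    print(f"{key}={value}")
```

Each key and value pair can then be passed to `SparkSession.builder.config(key, value)` before calling `getOrCreate()`.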

For a deeper understanding of how the Amazon Redshift hierarchy (databases, schemas, and tables) is mapped to the AWS Glue multilevel catalogs, you can refer to the Bringing Amazon Redshift data into the AWS Glue Data Catalog documentation.

In the following section, we demonstrate how to access RMS tables through Apache Spark using SageMaker Unified Studio JupyterLab notebooks with the AWS Glue 5.0 runtime and Amazon EMR Serverless.

Although you can bring existing Amazon Redshift tables into the AWS Glue Data Catalog by creating a Lakehouse Redshift catalog from an existing Redshift namespace and providing access to a SageMaker Unified Studio project, in the following example you'll create a managed Amazon Redshift Lakehouse catalog directly from SageMaker Unified Studio and work with that.

Prerequisites

To follow these instructions, you must have the following prerequisites:

Create a SageMaker Unified Studio project

Complete the following steps to create a SageMaker Unified Studio project:

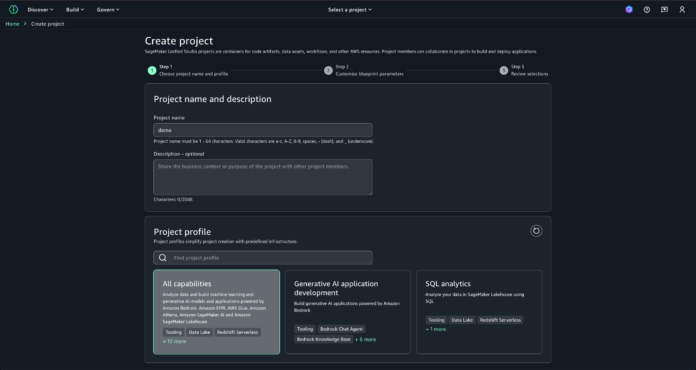

Sign in to SageMaker Unified Studio.

Choose Select a project on the top menu and choose Create project.

For Project name, enter demo.

For Project profile, choose All capabilities.

Choose Continue.

Leave the default values and choose Continue.

Review the configurations and choose Create project.

You need to wait for the project to be created. Project creation can take about 5 minutes. When the project status changes to Active, select the project name to access the project's home page.

Make note of the Project role ARN because you'll need it for subsequent steps.

You've successfully created the project and noted the project role ARN. The next step is to configure a Lakehouse catalog for your RMS.

Configure a Lakehouse catalog for your RMS

Complete the following steps to configure a Lakehouse catalog for your RMS:

In the navigation pane, choose Data.

Choose the + (plus) sign.

Select Create Lakehouse catalog to create a new catalog and choose Next.

For Lakehouse catalog name, enter rms-catalog-demo.

Choose Add catalog.

Wait for the catalog to be created.

In SageMaker Unified Studio, choose Data in the left navigation pane, then select the three vertical dots next to Redshift (Lakehouse) and choose Refresh to make sure the Amazon Redshift compute is active.

Create a new table in the RMS Lakehouse catalog:

In SageMaker Unified Studio, on the top menu, under Build, choose Query Editor.

On the top right, choose Select data source.

For CONNECTIONS, choose Redshift (Lakehouse).

For DATABASES, choose dev@rms-catalog-demo.

For SCHEMAS, choose public.

Choose Choose.

In the query cell, enter and execute the following query to create a new schema:

create schema "dev@rms-catalog-demo".salesdb;

In a new cell, enter and execute the following query to create a new table:

create table salesdb.store_sales (ss_sold_timestamp timestamp, ss_item text, ss_sales_price float);

In a new cell, enter and execute the following query to populate the table with sample data:

insert into salesdb.store_sales values ('2024-12-01T09:00:00Z', 'Product 1', 100.0),
('2024-12-01T11:00:00Z', 'Product 2', 500.0),
('2024-12-01T15:00:00Z', 'Product 3', 20.0),
('2024-12-01T17:00:00Z', 'Product 4', 1000.0),
('2024-12-01T18:00:00Z', 'Product 5', 30.0),
('2024-12-02T10:00:00Z', 'Product 6', 5000.0),
('2024-12-02T16:00:00Z', 'Product 7', 5.0);

In a new cell, enter and run the following query to verify the table contents:

select * from salesdb.store_sales;

(Optional) Create an Amazon EMR Serverless application

IMPORTANT: This section is only required if you also plan to test using Amazon EMR Serverless. If you intend to use AWS Glue exclusively, you can skip this section entirely.

Navigate to the project page. In the left navigation pane, select Compute, then select the Data processing tab. Choose Add compute.

Choose Create new compute resources, then choose Next.

Select EMR Serverless.

Specify emr_serverless_application as Compute name, select Compatibility as Permission mode, and choose Add compute.

Monitor the deployment progress. Wait for the Amazon EMR Serverless application to complete its deployment. This process can take a minute.

Access Amazon Redshift Managed Storage tables through Apache Spark

In this section, we demonstrate how to query tables stored in RMS using a SageMaker Unified Studio notebook.

In the navigation pane, choose Data.

Under Lakehouse, select the down arrow next to rms-catalog-demo.

Under dev, select the down arrow next to salesdb, choose store_sales, and choose the three dots.

SageMaker Lakehouse provides multiple analysis options: Query with Athena, Query with Redshift, and Open in Jupyter Lab notebook.

Choose Open in Jupyter Lab notebook.

On the Launcher tab, choose Python 3 (ipykernel).

In SageMaker Unified Studio JupyterLab, you can specify different compute types for each notebook cell. Although this example demonstrates using AWS Glue compute (project.spark.compatibility), the same code can be executed using Amazon EMR Serverless by selecting the appropriate compute in the cell settings. The following table shows the connection type and compute values to specify when running PySpark code or Spark SQL code with different engines:

| Compute option | PySpark code: connection type | PySpark code: compute | Spark SQL: connection type | Spark SQL: compute |
| --- | --- | --- | --- | --- |
| AWS Glue | PySpark | project.spark.compatibility | SQL | project.spark.compatibility |
| Amazon EMR Serverless | PySpark | emr-s.emr_serverless_application | SQL | emr-s.emr_serverless_application |

In the notebook cell's top left corner, set Connection Type to PySpark and select spark.compatibility (AWS Glue 5.0) as Compute.

Execute the following code to initialize the SparkSession and configure rmscatalog as the session catalog for accessing the dev database under the rms-catalog-demo RMS catalog:

from pyspark.sql import SparkSession

catalog_name = "rmscatalog"
# Replace <account_id> with your AWS account ID
rms_catalog_id = "<account_id>:rms-catalog-demo/dev"
# Replace with your AWS Region
aws_region = "us-east-2"

# Register rmscatalog as an Iceberg catalog backed by the AWS Glue Data Catalog
spark = (
    SparkSession.builder.appName('rms_demo')
    .config(f'spark.sql.catalog.{catalog_name}', 'org.apache.iceberg.spark.SparkCatalog')
    .config(f'spark.sql.catalog.{catalog_name}.type', 'glue')
    .config(f'spark.sql.catalog.{catalog_name}.glue.id', rms_catalog_id)
    .config(f'spark.sql.catalog.{catalog_name}.client.region', aws_region)
    .config('spark.sql.extensions', 'org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions')
    .getOrCreate()
)
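
A malformed `glue.id` value is an easy mistake to make when filling in the account ID by hand. The following is a minimal sketch of a check you could run before creating the session. The helper name `parse_glue_id` is hypothetical, the account ID is a placeholder, and the pattern assumes the `{account_id}:{rms_catalog_name}/{database_name}` format described earlier with a 12-digit AWS account ID.

```python
import re

# {account_id}:{rms_catalog_name}/{database_name}, assuming a 12-digit account ID
GLUE_ID_PATTERN = re.compile(
    r"^(?P<account_id>\d{12}):(?P<rms_catalog_name>[^/:]+)/(?P<database_name>[^/:]+)$"
)


def parse_glue_id(glue_id):
    """Split a glue.id value into its parts, or raise ValueError if malformed."""
    match = GLUE_ID_PATTERN.match(glue_id)
    if match is None:
        raise ValueError(
            f"Expected '<account_id>:<rms_catalog_name>/<database_name>', got {glue_id!r}"
        )
    return match.groupdict()


parts = parse_glue_id("123456789012:rms-catalog-demo/dev")
print(parts)

# A value with the account ID left out is rejected early.
try:
    parse_glue_id(":rms-catalog-demo/dev")
except ValueError as err:
    print(f"Invalid glue.id: {err}")
```

Failing early on the string is cheaper than waiting for the catalog lookup to fail inside the Spark session.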

Create a new cell and switch the connection type from PySpark to SQL to execute Spark SQL commands directly.

Enter the following SQL statement to view all tables under salesdb (RMS schema) within rmscatalog:

SHOW TABLES IN rmscatalog.salesdb

In a new SQL cell, enter the following DESCRIBE EXTENDED statement to view detailed information about the store_sales table in the salesdb schema:

DESCRIBE EXTENDED rmscatalog.salesdb.store_sales

In the output, you'll observe that the Provider is set to iceberg. This indicates that the table is recognized as an Iceberg table, despite being stored in Amazon Redshift managed storage.
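
The same check can be done programmatically in a PySpark cell. This is a sketch under assumptions: the helper `table_provider` is hypothetical, and it relies on `DESCRIBE EXTENDED` returning rows of (col_name, data_type, comment) that include a `Provider` entry, as in the output described above. The sample rows are illustrative stand-ins, not captured output.

```python
def table_provider(describe_rows):
    """Return the Provider value from DESCRIBE EXTENDED rows, or None if absent."""
    for row in describe_rows:
        col_name, data_type = row[0], row[1]
        if col_name is not None and col_name.strip().lower() == "provider":
            return data_type
    return None


# Illustrative rows only. In a notebook you would instead pass:
# spark.sql("DESCRIBE EXTENDED rmscatalog.salesdb.store_sales").collect()
sample_rows = [
    ("ss_sold_timestamp", "timestamp", None),
    ("ss_item", "string", None),
    ("", "", ""),
    ("# Detailed Table Information", "", ""),
    ("Provider", "iceberg", ""),
]

print(table_provider(sample_rows))
```

Because the helper indexes rows by position, it works with both plain tuples and the `Row` objects returned by `collect()`.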

In a new SQL cell, enter the following SELECT statement to view the content of the table:

SELECT * FROM rmscatalog.salesdb.store_sales
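
Beyond `SELECT *`, the same table can be aggregated with Spark SQL. The sketch below is an illustrative addition, not part of the original walkthrough. It builds a per-day sales total query and, so that the result can be checked by hand, computes the expected totals in plain Python from the sample rows inserted earlier. The helper names are hypothetical, and the comparison assumes the Spark session time zone does not shift the timestamps across a date boundary.

```python
from collections import defaultdict

TABLE = "rmscatalog.salesdb.store_sales"


def daily_sales_query(table_name):
    """Build a Spark SQL query that totals sales per calendar day."""
    return (
        "SELECT to_date(ss_sold_timestamp) AS sale_date, "
        "sum(ss_sales_price) AS total_sales "
        f"FROM {table_name} "
        "GROUP BY to_date(ss_sold_timestamp) "
        "ORDER BY sale_date"
    )


# Sample rows from the insert statement used earlier in this post.
SAMPLE_ROWS = [
    ("2024-12-01T09:00:00Z", "Product 1", 100.0),
    ("2024-12-01T11:00:00Z", "Product 2", 500.0),
    ("2024-12-01T15:00:00Z", "Product 3", 20.0),
    ("2024-12-01T17:00:00Z", "Product 4", 1000.0),
    ("2024-12-01T18:00:00Z", "Product 5", 30.0),
    ("2024-12-02T10:00:00Z", "Product 6", 5000.0),
    ("2024-12-02T16:00:00Z", "Product 7", 5.0),
]


def expected_daily_totals(rows):
    """Sum prices per day; the first 10 characters of each timestamp are YYYY-MM-DD."""
    totals = defaultdict(float)
    for sold_at, _item, price in rows:
        totals[sold_at[:10]] += price
    return dict(totals)


print(daily_sales_query(TABLE))
print(expected_daily_totals(SAMPLE_ROWS))

# In a PySpark cell with the session configured as above:
# spark.sql(daily_sales_query(TABLE)).show()
```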

Throughout this example, we demonstrated how to create a table in Amazon Redshift Serverless and seamlessly query it as an Iceberg table using Apache Spark within a SageMaker Unified Studio notebook.

Clean up

To avoid incurring future charges, clean up all created resources:

Delete the created SageMaker Unified Studio project. This step will automatically delete Amazon EMR compute (for example, the Amazon EMR Serverless application) that was provisioned from the project:

Inside SageMaker Unified Studio, navigate to the demo project's Project overview section.

Choose Actions, then select Delete project.

Type confirm and choose Delete project.

Delete the created Lakehouse catalog:

Navigate to the Catalogs section of the AWS Lake Formation page.

Select the rms-catalog-demo catalog, choose Actions, then select Delete.

In the confirmation window, type rms-catalog-demo and then choose Drop.

Conclusion

In this post, we demonstrated how to use Apache Spark to interact with Amazon Redshift Managed Storage tables through Amazon SageMaker Lakehouse using the Iceberg REST catalog. This integration provides a unified view of your data across Amazon S3 data lakes and Amazon Redshift data warehouses, so you can build powerful analytics and AI/ML applications while maintaining a single copy of your data.

For additional workloads and implementations, visit Simplify data access for your enterprise using Amazon SageMaker Lakehouse.

About the Authors

Noritaka Sekiyama is a Principal Big Data Architect with Amazon Web Services (AWS) Analytics services. He's responsible for building software artifacts to help customers. In his spare time, he enjoys cycling on his road bike.

Stefano Sandonà is a Senior Big Data Specialist Solution Architect at Amazon Web Services (AWS). Passionate about data, distributed systems, and security, he helps customers worldwide architect high-performance, efficient, and secure data solutions.

Derek Liu is a Senior Solutions Architect based out of Vancouver, BC. He enjoys helping customers solve big data challenges through Amazon Web Services (AWS) analytic services.

Raj Ramasubbu is a Senior Analytics Specialist Solutions Architect focused on big data and analytics and AI/ML with Amazon Web Services (AWS). He helps customers architect and build highly scalable, performant, and secure cloud-based solutions on AWS. Raj provided technical expertise and leadership in building data engineering, big data analytics, business intelligence, and data science solutions for over 18 years prior to joining AWS. He helped customers in various industry verticals like healthcare, medical devices, life science, retail, asset management, car insurance, residential REIT, agriculture, title insurance, supply chain, document management, and real estate.

Angel Conde Manjon is a Sr. EMEA Data & AI PSA, based in Madrid. He has previously worked on research related to data analytics and AI in diverse European research projects. In his current role, Angel helps partners develop businesses centered on data and AI.

Appendix: Sample script for Lake Formation FGAC enabled Spark cluster

If you want to access RMS tables from a Lake Formation FGAC enabled Spark cluster on AWS Glue or Amazon EMR, refer to the following code example:

from pyspark.sql import SparkSession

catalog_name = "rmscatalog"
rms_catalog_name = "123456789012:rms-catalog-demo/dev"
account_id = "123456789012"
region = "us-east-2"

# Same catalog settings as before, plus the account ID and catalog ARN used with Lake Formation FGAC
spark = (
    SparkSession.builder.appName('rms_demo')
    .config('spark.sql.defaultCatalog', catalog_name)
    .config(f'spark.sql.catalog.{catalog_name}', 'org.apache.iceberg.spark.SparkCatalog')
    .config(f'spark.sql.catalog.{catalog_name}.type', 'glue')
    .config(f'spark.sql.catalog.{catalog_name}.glue.id', rms_catalog_name)
    .config(f'spark.sql.catalog.{catalog_name}.client.region', region)
    .config(f'spark.sql.catalog.{catalog_name}.glue.account-id', account_id)
    .config(f'spark.sql.catalog.{catalog_name}.glue.catalog-arn', f'arn:aws:glue:{region}:{rms_catalog_name}')
    .config('spark.sql.extensions', 'org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions')
    .getOrCreate()
)
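
The script above repeats the account ID in two variables, which makes it easy for them to drift apart. The following is a minimal sketch of deriving the FGAC-specific settings from a single set of inputs. The helper name `fgac_extra_conf` is hypothetical, the values are placeholders, and the ARN string simply mirrors the one built in the script above rather than any separately verified format.

```python
def fgac_extra_conf(catalog_name, account_id, rms_catalog_name, database_name, region):
    """Return the extra Spark settings used in the FGAC sample, derived from one set of inputs."""
    prefix = f"spark.sql.catalog.{catalog_name}"
    glue_id = f"{account_id}:{rms_catalog_name}/{database_name}"
    return {
        "spark.sql.defaultCatalog": catalog_name,
        f"{prefix}.glue.id": glue_id,
        f"{prefix}.glue.account-id": account_id,
        # Mirrors the sample script: arn:aws:glue:{region}:{glue_id}
        f"{prefix}.glue.catalog-arn": f"arn:aws:glue:{region}:{glue_id}",
    }


extra = fgac_extra_conf("rmscatalog", "123456789012", "rms-catalog-demo", "dev", "us-east-2")
for key, value in extra.items():
    print(f"{key}={value}")
```

These entries would be applied alongside the base catalog settings (catalog class, type, Region, and extensions) shown in the script.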
