The entire AI landscape shifted back in January 2025 after a then little-known Chinese AI startup, DeepSeek (a subsidiary of the Hong Kong-based quantitative analysis firm High-Flyer Capital Management), released its powerful open source language reasoning model DeepSeek R1 publicly to the world, besting U.S. giants such as Meta.

As DeepSeek usage spread rapidly among researchers and enterprises, Meta was reportedly sent into panic mode upon learning that this new R1 model had been trained for a fraction of the cost of many other leading models, as little as several million dollars (what Meta pays some of its own AI team leaders), yet outclassed them.

Meta’s entire generative AI strategy had until that point been predicated on releasing best-in-class open source models under its brand name “Llama” for researchers and companies to build upon freely (at least, if they had fewer than 700 million monthly users, at which point they’re supposed to contact Meta for special paid licensing terms).

Yet DeepSeek R1’s astonishingly good performance on a far smaller budget had allegedly shaken the company leadership and forced some kind of reckoning, with the last version of Llama, 3.3, having been released just a month prior in December 2024 yet already looking outdated.

Now we know the fruits of that reckoning: today, Meta founder and CEO Mark Zuckerberg took to his Instagram account to announce a new Llama 4 series of models, with two of them (the 400-billion parameter Llama 4 Maverick and the 109-billion parameter Llama 4 Scout) available today for developers to download and begin using or fine-tuning on llama.com and the AI code sharing community Hugging Face.

A massive 2-trillion parameter Llama 4 Behemoth is also being previewed today, though Meta’s blog post on the releases said it was still being trained, and gave no indication of when it might be released. (Recall that parameters refer to the settings that govern the model’s behavior, and that generally more of them mean a more powerful and complex all-around model.)

One headline feature of these models is that they’re all multimodal: trained on, and therefore capable of receiving and producing, text, video, and imagery (though audio was not mentioned).

Another is that they have incredibly long context windows (1 million tokens for Llama 4 Maverick and 10 million for Llama 4 Scout), which is equivalent to about 1,500 and 15,000 pages of text, respectively, all of which the model can handle in a single input/output interaction. That means a user could theoretically upload or paste up to 7,500 pages’ worth of text and receive that much in return from Llama 4 Scout, which could be useful for information-dense fields such as medicine, science, engineering, mathematics and literature.
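For readers who want to check the page arithmetic, here is a minimal Python sketch. It assumes the rough ratio implied by the figures above (1 million tokens to about 1,500 pages, or roughly 667 tokens per page) and an even split of the window between input and output; both are approximations, and the function names are illustrative rather than part of any Meta tooling.

```python
# Rough ratio implied by the article: 1,000,000 tokens is about 1,500 pages.
TOKENS_PER_PAGE = 1_000_000 / 1_500  # roughly 667 tokens per page (approximation)

# Context window sizes quoted for the two released models.
CONTEXT_WINDOWS = {
    "Llama 4 Maverick": 1_000_000,
    "Llama 4 Scout": 10_000_000,
}


def tokens_to_pages(tokens: int) -> int:
    """Convert a token count to an approximate page count."""
    return round(tokens / TOKENS_PER_PAGE)


def even_split_pages(context_tokens: int) -> int:
    """Pages available to the input (and to the output) if the window is split evenly."""
    return tokens_to_pages(context_tokens // 2)


for name, window in CONTEXT_WINDOWS.items():
    print(
        f"{name}: ~{tokens_to_pages(window):,} pages in total, "
        f"~{even_split_pages(window):,} pages each way"
    )
```

Real page counts will vary with the tokenizer, the language and the page layout, so these numbers are best read as orders of magnitude.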

Here’s what else we’ve learned about this release so far:

All-in on mixture-of-experts

All three models use the “mixture-of-experts (MoE)” architecture approach popularized in earlier model releases from OpenAI and Mistral, which essentially combines multiple smaller models (“experts”) specialized in different tasks, subjects and media formats into a unified, larger model. Each Llama 4 release is therefore said to be a mixture of 128 different experts, and more efficient to run because only the expert needed for a particular task, plus a “shared” expert, handles each token, instead of the entire model having to run for each one.

As the Llama 4 blog post notes:

As a result, while all parameters are stored in memory, only a subset of the total parameters are activated while serving these models. This improves inference efficiency by lowering model serving costs and latency—Llama 4 Maverick can be run on a single (Nvidia) H100 DGX host for easy deployment, or with distributed inference for maximum efficiency.
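To make the routing idea concrete, here is a toy sketch in plain Python of one token passing through a shared expert plus a single routed expert out of 128. Everything about the gating is an assumption made for illustration (a softmax router, top-1 selection, scaling the routed output by its gate value, random linear maps standing in for experts); Meta’s post does not spell out these details, so this should not be read as the actual Llama 4 implementation.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(seed, dim):
    """Build a toy expert: a fixed random linear map standing in for a feed-forward block."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(dim)]

    def expert(x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

    return expert


def moe_forward(token, router, routed_experts, shared_expert):
    """Run one token through the shared expert plus the single best-scoring routed expert."""
    scores = [sum(w * xi for w, xi in zip(row, token)) for row in router]
    gates = softmax(scores)
    chosen = max(range(len(gates)), key=gates.__getitem__)
    routed_out = routed_experts[chosen](token)  # only this routed expert runs
    shared_out = shared_expert(token)           # the shared expert always runs
    output = [s + gates[chosen] * r for s, r in zip(shared_out, routed_out)]
    return output, chosen


DIM, NUM_EXPERTS = 8, 128  # 128 experts as described above; DIM is arbitrary
rng = random.Random(0)
router = [[rng.uniform(-1.0, 1.0) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
routed_experts = [make_expert(seed, DIM) for seed in range(1, NUM_EXPERTS + 1)]
shared_expert = make_expert(0, DIM)

token = [rng.uniform(-1.0, 1.0) for _ in range(DIM)]
output, chosen = moe_forward(token, router, routed_experts, shared_expert)
print(f"token routed to expert {chosen}; 2 of {NUM_EXPERTS + 1} experts ran")
```

All 129 toy experts sit in memory, but only two of them do any work for a given token, which is the efficiency argument the blog post is making.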

Both Scout and Maverick are available to the public for self-hosting, while no hosted API or pricing tiers have been announced for official Meta infrastructure. Instead, Meta focuses on distribution through open download and integration with Meta AI in WhatsApp, Messenger, Instagram, and the web.

Meta estimates the inference cost for Llama 4 Maverick at $0.19 to $0.49 per 1 million tokens (using a 3:1 blend of input and output). This makes it substantially cheaper than proprietary models like GPT-4o, which is estimated to cost $4.38 per million tokens, based on community benchmarks.
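A “3:1 blend” is simply a weighted average of input and output prices, and a short Python sketch shows how such a figure is computed. The proprietary input and output prices below are assumed list prices chosen for illustration and do not come from Meta’s post; under those assumptions the blend works out to $4.375 per million tokens, in line with the estimate quoted above. The function names are hypothetical.

```python
def blended_price(input_price: float, output_price: float,
                  input_parts: int = 3, output_parts: int = 1) -> float:
    """Weighted average price per 1M tokens for a given input:output token mix."""
    total_parts = input_parts + output_parts
    return (input_parts * input_price + output_parts * output_price) / total_parts


def workload_cost(total_tokens: int, price_per_million: float) -> float:
    """Dollar cost of processing total_tokens at a blended per-1M-token price."""
    return total_tokens / 1_000_000 * price_per_million


# Assumed, illustrative list prices in USD per 1M tokens (not from the article).
ASSUMED_INPUT_PRICE = 2.50
ASSUMED_OUTPUT_PRICE = 10.00

proprietary = blended_price(ASSUMED_INPUT_PRICE, ASSUMED_OUTPUT_PRICE)
print(f"blended proprietary price: ${proprietary:.3f} per 1M tokens")

# Meta's quoted range for Llama 4 Maverick, already expressed as a 3:1 blend.
for maverick in (0.19, 0.49):
    print(
        f"at ${maverick:.2f} per 1M: {proprietary / maverick:.1f}x cheaper, "
        f"${workload_cost(50_000_000, maverick):.2f} per 50M tokens"
    )
```

Workloads that generate far more output than input will shift the blend, so the ratio is worth adjusting to match actual traffic before comparing providers.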

All three Llama 4 models, especially Maverick and Behemoth, are explicitly designed for reasoning, coding, and step-by-step problem solving, though they don’t appear to exhibit the chains-of-thought of dedicated reasoning models such as the OpenAI “o” series or DeepSeek R1.

Instead, they seem designed to compete more directly with “classical,” non-reasoning LLMs and multimodal models such as OpenAI’s GPT-4o and DeepSeek’s V3, except for Llama 4 Behemoth, which does appear to threaten DeepSeek R1 (more on this below!).

In addition, for Llama 4, Meta built custom post-training pipelines focused on enhancing reasoning, such as:

Removing over 50% of “easy” prompts during supervised fine-tuning.

Adopting a continuous reinforcement learning loop with progressively harder prompts.

Using pass@k evaluation and curriculum sampling to strengthen performance in math, logic, and coding.

Implementing MetaP, a new technique that lets engineers tune hyperparameters (like per-layer learning rates) on models and apply them to other model sizes and types of tokens while preserving the intended model behavior.
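For readers unfamiliar with pass@k, it measures the chance that at least one of k sampled answers to a prompt is correct. Meta’s post does not say which formula it uses; the sketch below assumes the widely used unbiased estimator, 1 - C(n-c, k) / C(n, k), where n samples were generated and c of them passed. The prompt names and counts are made up, and sorting hardest-first is just one hypothetical way a curriculum might consume these scores.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the chance that at least one of k samples is correct,
    given that c of n generated samples passed."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # too few failures to fill k draws, so at least one must pass
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical results: prompt id -> (samples generated, samples that passed).
results = {"easy-sum": (10, 9), "mid-proof": (10, 3), "hard-dp": (10, 0)}

for prompt, (n, c) in results.items():
    print(prompt, round(pass_at_k(n, c, 1), 3), round(pass_at_k(n, c, 5), 3))

# One possible curriculum signal: order prompts from lowest to highest pass@1.
hardest_first = sorted(results, key=lambda p: pass_at_k(*results[p], 1))
print(hardest_first)
```

A prompt that the model already solves on nearly every sample carries little training signal, which is one plausible reading of why “easy” prompts were pruned.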

MetaP is of particular interest as it could be used going forward to set hyperparameters on one model and then get many other types of models out of it, increasing training efficiency.

As my VentureBeat colleague and LLM expert Ben Dickson opined on the new MetaP technique: “This can save a lot of time and money. It means that they run experiments on the smaller models instead of doing them on the large-scale ones.”

This is especially important when training models as large as Behemoth, which uses 32K GPUs and FP8 precision, achieving 390 TFLOPs/GPU over more than 30 trillion tokens, more than double the Llama 3 training data.

In other words: the researchers can tell the model broadly how they want it to behave, and apply this to larger and smaller versions of the model, and across different forms of media.

A powerful, but not yet the most powerful, model family

In his announcement video on Instagram (a Meta subsidiary, naturally), Meta CEO Mark Zuckerberg said that the company’s “goal is to build the world’s leading AI, open source it, and make it universally accessible so that everyone in the world benefits…I’ve said for a while that I think open source AI is going to become the leading models, and with Llama 4, that’s starting to happen.”

It’s clearly a carefully worded statement, as is Meta’s blog post calling Llama 4 Scout “the best multimodal model in the world *in its class* and is more powerful than all previous generation Llama models” (emphasis added by me).

In other words, these are very powerful models, near the top of the heap compared to others in their parameter-size class, but not necessarily setting new performance records. Nonetheless, Meta was keen to trumpet the models its new Llama 4 family beats, among them:

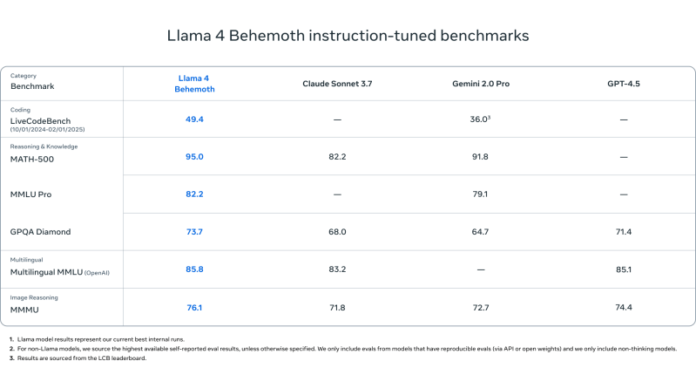

Llama 4 Behemoth

Outperforms GPT-4.5, Gemini 2.0 Pro, and Claude Sonnet 3.7 on:

MATH-500 (95.0)

GPQA Diamond (73.7)

MMLU Pro (82.2)

Llama 4 Maverick

Beats GPT-4o and Gemini 2.0 Flash on most multimodal reasoning benchmarks:

ChartQA, DocVQA, MathVista, MMMU

Competitive with DeepSeek v3.1 (45.8B params) while using less than half the active parameters (17B)

Benchmark scores:

ChartQA: 90.0 (vs. GPT-4o’s 85.7)

DocVQA: 94.4 (vs. 92.8)

MMLU Pro: 80.5

Cost-effective: $0.19–$0.49 per 1M tokens

Llama 4 Scout

Matches or outperforms models like Mistral 3.1, Gemini 2.0 Flash-Lite, and Gemma 3 on:

DocVQA: 94.4

MMLU Pro: 74.3

MathVista: 70.7

Unmatched 10M token context length, ideal for long documents, codebases, or multi-turn analysis

Designed for efficient deployment on a single H100 GPU

But after all that, how does Llama 4 stack up to DeepSeek?

But of course, there is a whole other class of reasoning-heavy models such as DeepSeek R1, OpenAI’s “o” series (like o1), Gemini 2.0, and Claude Sonnet.

Using the highest-parameter model benchmarked, Llama 4 Behemoth, and comparing it to the initial DeepSeek R1 release chart for R1-32B and OpenAI o1 models, here’s how Llama 4 Behemoth stacks up:

| Benchmark | Llama 4 Behemoth | DeepSeek R1 | OpenAI o1-1217 |
| --- | --- | --- | --- |
| MATH-500 | 95.0 | 97.3 | 96.4 |
| GPQA Diamond | 73.7 | 71.5 | 75.7 |
| MMLU | 82.2 | 90.8 | 91.8 |

What can we conclude?

MATH-500: Llama 4 Behemoth is slightly behind DeepSeek R1 and OpenAI o1.

GPQA Diamond: Behemoth is ahead of DeepSeek R1, but behind OpenAI o1.

MMLU: Behemoth trails both, but still outperforms Gemini 2.0 Pro and GPT-4.5.

Takeaway: While DeepSeek R1 and OpenAI o1 edge out Behemoth on a couple of metrics, Llama 4 Behemoth remains highly competitive and performs at or near the top of the reasoning leaderboard in its class.
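The same comparison can be reproduced in a few lines of Python for anyone who wants the exact margins. The scores are the ones reported in the comparison above; the helper names are illustrative, and a positive gap means the rival model is ahead of Behemoth.

```python
# Scores as reported in the comparison above.
SCORES = {
    "MATH-500": {"Llama 4 Behemoth": 95.0, "DeepSeek R1": 97.3, "OpenAI o1-1217": 96.4},
    "GPQA Diamond": {"Llama 4 Behemoth": 73.7, "DeepSeek R1": 71.5, "OpenAI o1-1217": 75.7},
    "MMLU": {"Llama 4 Behemoth": 82.2, "DeepSeek R1": 90.8, "OpenAI o1-1217": 91.8},
}


def gaps_to_behemoth(scores):
    """Per benchmark, each rival's score minus Behemoth's (positive means the rival leads)."""
    gaps = {}
    for benchmark, row in scores.items():
        base = row["Llama 4 Behemoth"]
        gaps[benchmark] = {
            model: round(value - base, 1)
            for model, value in row.items()
            if model != "Llama 4 Behemoth"
        }
    return gaps


def leaders(scores):
    """Name of the top-scoring model on each benchmark."""
    return {benchmark: max(row, key=row.get) for benchmark, row in scores.items()}


for benchmark, row in gaps_to_behemoth(SCORES).items():
    print(benchmark, row)
print(leaders(SCORES))
```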

Safety and less political ‘bias’

Meta also emphasized model alignment and safety by introducing tools like Llama Guard, Prompt Guard, and CyberSecEval to help developers detect unsafe input/output or adversarial prompts, and implementing Generative Offensive Agent Testing (GOAT) for automated red-teaming.

The company also claims Llama 4 shows substantial improvement on “political bias” and says “specifically, (leading LLMs) historically have leaned left when it comes to debated political and social topics,” and that Llama 4 does better at courting the right wing…in keeping with Zuckerberg’s embrace of Republican U.S. president Donald J. Trump and his party following the 2024 election.

Where Llama 4 stands so far

Meta’s Llama 4 models bring together efficiency, openness, and high-end performance across multimodal and reasoning tasks.

With Scout and Maverick now publicly available and Behemoth previewed as a state-of-the-art teacher model, the Llama ecosystem is positioned to offer a competitive open alternative to top-tier proprietary models from OpenAI, Anthropic, DeepSeek, and Google.

Whether you’re building enterprise-scale assistants, AI research pipelines, or long-context analytical tools, Llama 4 offers flexible, high-performance options with a clear orientation toward reasoning-first design.
