
It’s been a little over a month since Chinese AI startup DeepSeek, an offshoot of Hong Kong-based High-Flyer Capital Management, released the latest version of its hit open source model DeepSeek, R1-0528.

Like its predecessor DeepSeek-R1, which rocked the AI and global business communities with how cheaply it was trained and how well it performed on reasoning tasks, all available to developers and enterprises for free, R1-0528 is already being adapted and remixed by other AI labs and developers, thanks in large part to its permissive Apache 2.0 license.

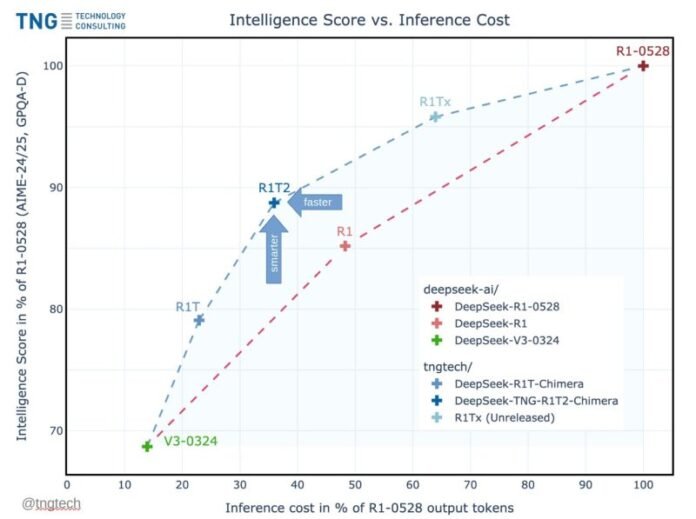

This week, the 24-year-old German firm TNG Technology Consulting GmbH released one such adaptation: DeepSeek-TNG R1T2 Chimera, the latest model in its Chimera large language model (LLM) family. R1T2 delivers a notable boost in efficiency and speed, scoring at upwards of 90% of R1-0528’s intelligence benchmark scores while generating answers with less than 40% of R1-0528’s output token count.

That means it produces shorter responses, translating directly into faster inference and lower compute costs. On the model card TNG released for its new R1T2 on the AI code sharing community Hugging Face, the company states that it’s “about 20% faster than the regular R1” (the one released back in January) “and more than twice as fast as R1-0528” (the official May update from DeepSeek).

Already, the response from the AI developer community has been highly positive. “DAMN! DeepSeek R1T2 – 200% faster than R1-0528 & 20% faster than R1,” wrote Vaibhav (VB) Srivastav, a senior leader at Hugging Face, on X. “Significantly better than R1 on GPQA & AIME 24, made via Assembly of Experts with DS V3, R1 & R1-0528, and it’s MIT-licensed, available on Hugging Face.”

This gain is made possible by TNG’s Assembly-of-Experts (AoE) method, a technique for building LLMs by selectively merging the weight tensors (internal parameters) from multiple pre-trained models. TNG described the method in a paper published in May on arXiv, the open access preprint repository, which is not peer reviewed.

A successor to the original R1T Chimera, R1T2 introduces a new “Tri-Mind” configuration that integrates three parent models: DeepSeek-R1-0528, DeepSeek-R1, and DeepSeek-V3-0324. The result is a model engineered to maintain high reasoning capability while significantly reducing inference cost.

R1T2 is constructed without further fine-tuning or retraining. It inherits the reasoning strength of R1-0528, the structured thought patterns of R1, and the concise, instruction-oriented behavior of V3-0324, delivering a more efficient yet capable model for enterprise and research use.

How Assembly-of-Experts (AoE) Differs from Mixture-of-Experts (MoE)

Mixture-of-Experts (MoE) is an architectural design in which different components, or “experts,” are conditionally activated per input. In MoE LLMs like DeepSeek-V3 or Mixtral, only a subset of the model’s expert layers (e.g., 8 out of 256) are active during any given token’s forward pass. This allows very large models to achieve higher parameter counts and specialization while keeping inference costs manageable, because only a fraction of the network is evaluated per token.
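
To make the conditional activation concrete, here is a minimal Python sketch of top-k routing. It assumes a plain softmax over the selected router scores and toy scalar "experts"; the names `route_token` and `moe_forward` are hypothetical, and this is not the actual router used by DeepSeek-V3 or Mixtral, which work on learned tensors.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of only the k selected experts."""
    out = 0.0
    for idx, weight in route_token(router_logits, k):
        out += weight * experts[idx](x)  # the other experts are never called
    return out


# Toy setup (illustrative values only): 256 scalar experts, 8 active per token.
NUM_EXPERTS, TOP_K = 256, 8
experts = [lambda x, s=i: x * (s + 1) / NUM_EXPERTS for i in range(NUM_EXPERTS)]
router_logits = [math.sin(i) for i in range(NUM_EXPERTS)]  # stand-in for a learned router

selected = route_token(router_logits, TOP_K)
y = moe_forward(1.0, experts, router_logits, TOP_K)
print(f"{len(selected)} of {NUM_EXPERTS} experts evaluated for this token")
```

Only the selected experts run for a given token, which is why per-token compute stays far below what the total parameter count would suggest.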

Assembly-of-Experts (AoE) is a model merging technique, not an architecture. It’s used to create a new model from multiple pre-trained MoE models by selectively interpolating their weight tensors.

The “experts” in AoE refer to the model components being merged, typically the routed expert tensors within MoE layers, not experts dynamically activated at runtime.

TNG’s implementation of AoE focuses primarily on merging routed expert tensors, the part of a model most responsible for specialized reasoning, while often retaining the more efficient shared and attention layers from faster models like V3-0324. This approach enables the resulting Chimera models to inherit reasoning strength without replicating the verbosity or latency of the strongest parent models.
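
The selective merge described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not TNG's implementation: the tensor names, the `.experts.` naming convention, the flat lists standing in for tensors, and the 0.5/0.25/0.25 mixing weights are all made up for the example.

```python
def is_routed_expert(tensor_name):
    """Assumed convention: routed expert tensors contain '.experts.' in their name."""
    return ".experts." in tensor_name


def merge_tensor(tensors, weights):
    """Element-wise weighted average of same-length flat tensors."""
    return [sum(w * t[i] for t, w in zip(tensors, weights))
            for i in range(len(tensors[0]))]


def assemble(parents, expert_weights, base):
    """Interpolate routed expert tensors across parents; copy the rest from `base`.

    parents: {model_name: {tensor_name: [floats]}}
    expert_weights: {model_name: mixing weight}, expected to sum to 1
    """
    if abs(sum(expert_weights.values()) - 1.0) > 1e-9:
        raise ValueError("mixing weights must sum to 1")
    names = list(parents)
    merged = {}
    for tensor_name, base_tensor in parents[base].items():
        if is_routed_expert(tensor_name):
            merged[tensor_name] = merge_tensor(
                [parents[n][tensor_name] for n in names],
                [expert_weights[n] for n in names],
            )
        else:
            merged[tensor_name] = list(base_tensor)  # keep shared/attention weights
    return merged


def toy_model(a, b):
    """Build a tiny fake checkpoint with one tensor of each kind."""
    return {
        "layers.0.attn.q_proj": [a, a],
        "layers.0.mlp.shared_experts.up_proj": [b, b],
        "layers.0.mlp.experts.0.up_proj": [a, b],
    }


parents = {
    "R1-0528": toy_model(1.0, 2.0),
    "R1": toy_model(3.0, 4.0),
    "V3-0324": toy_model(5.0, 6.0),
}
expert_weights = {"R1-0528": 0.5, "R1": 0.25, "V3-0324": 0.25}  # hypothetical mix

merged = assemble(parents, expert_weights, base="V3-0324")
print(merged["layers.0.mlp.experts.0.up_proj"])
```

Because the merge only averages existing weights, no gradient steps are involved, which fits the article's point that R1T2 is built without fine-tuning or retraining.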

Performance and Speed: What the Benchmarks Actually Show

According to benchmark comparisons presented by TNG, R1T2 achieves between 90% and 92% of the reasoning performance of its most intelligent parent, DeepSeek-R1-0528, as measured by the AIME-24, AIME-25, and GPQA-Diamond test sets.

However, unlike DeepSeek-R1-0528, which tends to produce long, detailed answers due to its extended chain-of-thought reasoning, R1T2 is designed to be much more concise. It delivers similarly intelligent responses while using significantly fewer words.

Rather than focusing on raw processing time or tokens-per-second, TNG measures “speed” in terms of output token count per answer, a practical proxy for both cost and latency. According to benchmarks shared by TNG, R1T2 generates responses using approximately 40% of the tokens required by R1-0528.

That translates to a 60% reduction in output length, which directly reduces inference time and compute load. At a constant per-token generation speed, 40% of the tokens means responses finish about 2.5X as fast, consistent with TNG’s claim of “more than twice as fast.”
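
The arithmetic behind these figures can be checked with a short Python sketch. It assumes generation time scales linearly with output tokens at a fixed per-token speed. The 10,000-token baseline and 20 ms per token are hypothetical values chosen for illustration, not measurements from TNG.

```python
def reduction_pct(token_ratio):
    """Percent reduction in output length for a given token ratio (new / old)."""
    return (1.0 - token_ratio) * 100.0


def speedup_factor(token_ratio):
    """How many times faster a response finishes, assuming constant time per token."""
    return 1.0 / token_ratio


def response_seconds(output_tokens, seconds_per_token):
    """Generation time under the linear-in-tokens assumption."""
    return output_tokens * seconds_per_token


TOKEN_RATIO = 0.40        # R1T2 output tokens relative to R1-0528 (from the article)
BASELINE_TOKENS = 10_000  # hypothetical R1-0528 answer length
SECONDS_PER_TOKEN = 0.02  # hypothetical decoding speed

baseline_s = response_seconds(BASELINE_TOKENS, SECONDS_PER_TOKEN)
r1t2_s = response_seconds(BASELINE_TOKENS * TOKEN_RATIO, SECONDS_PER_TOKEN)

print(f"output reduction: {reduction_pct(TOKEN_RATIO):.0f}%")
print(f"speedup factor:   {speedup_factor(TOKEN_RATIO):.2f}x")
print(f"latency:          {baseline_s:.0f}s -> {r1t2_s:.0f}s")
```

Note that "2.5 times as fast" and "150% faster" describe the same ratio; percentage-faster figures quoted for the model depend on which convention the speaker uses.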

Compared to the original DeepSeek-R1, R1T2 is also around 20% more concise on average, offering meaningful gains in efficiency for high-throughput or cost-sensitive deployments.

This efficiency does not come at the cost of intelligence. As shown in the benchmark chart presented in TNG’s technical paper, R1T2 sits in a desirable zone on the intelligence vs. output cost curve. It preserves reasoning quality while minimizing verbosity, an outcome critical to enterprise applications where inference speed, throughput, and cost all matter.

Deployment Considerations and Availability

R1T2 is released under a permissive MIT License and is available now on Hugging Face, meaning it is open source and can be used and built into commercial applications.

TNG notes that while the model is well-suited for general reasoning tasks, it is not currently recommended for use cases requiring function calling or tool use, due to limitations inherited from its DeepSeek-R1 lineage. These may be addressed in future updates.

The company also advises European users to assess compliance with the EU AI Act, which comes into effect on August 2, 2025.

Enterprises operating in the EU should review the relevant provisions or consider halting model use after that date if requirements cannot be met.

However, U.S. companies operating domestically and servicing U.S.-based users, or those of other nations, are not subject to the terms of the EU AI Act, which should give them considerable flexibility when using and deploying this free, speedy open source reasoning model. If they service users in the EU, some provisions of the EU AI Act will still apply.

TNG has already made prior Chimera variants available through platforms like OpenRouter and Chutes, where they reportedly processed billions of tokens daily. The release of R1T2 represents a further evolution in this public availability effort.

About TNG Technology Consulting GmbH

Founded in January 2001, TNG Technology Consulting GmbH is based in Bavaria, Germany, and employs over 900 people, with a high concentration of PhDs and technical specialists.

The company focuses on software development, artificial intelligence, and DevOps/cloud services, serving major enterprise clients across industries such as telecommunications, insurance, automotive, e-commerce, and logistics.

TNG operates as a values-based consulting partnership. Its unique structure, grounded in operational research and self-management principles, supports a culture of technical innovation.

It actively contributes to open-source communities and research, as demonstrated by public releases like R1T2 and the publication of its Assembly-of-Experts methodology.

What It Means for Enterprise Technical Decision-Makers

For CTOs, AI platform owners, engineering leads, and IT procurement teams, R1T2 introduces tangible benefits and strategic options:

Lower Inference Costs: With fewer output tokens per task, R1T2 reduces GPU time and energy consumption, translating directly into infrastructure savings. This is especially important in high-throughput or real-time environments.

High Reasoning Quality Without Overhead: It preserves much of the reasoning power of top-tier models like R1-0528, but without their long-windedness. This is ideal for structured tasks (math, programming, logic) where concise answers are preferable.

Open and Modifiable: The MIT License allows full deployment control and customization, enabling private hosting, model alignment, or further training within regulated or air-gapped environments.

Emerging Modularity: The AoE approach suggests a future where models are built modularly, allowing enterprises to assemble specialized variants by recombining strengths of existing models, rather than retraining from scratch.

Caveats: Enterprises relying on function calling, tool use, or advanced agent orchestration should note the current limitations, though future Chimera updates may address these gaps.

TNG encourages researchers, developers, and enterprise users to explore the model, test its behavior, and provide feedback. The R1T2 Chimera is available at huggingface.co/tngtech/DeepSeek-TNG-R1T2-Chimera, and technical inquiries can be directed to research@tngtech.com.

For technical background and benchmark methodology, TNG’s research paper is available at arXiv:2506.14794.
